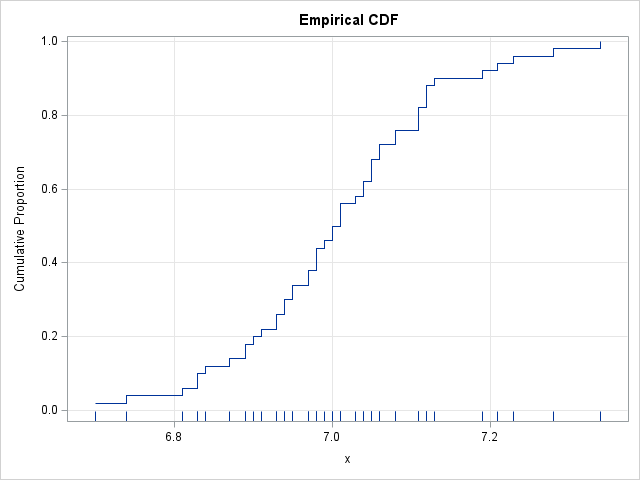

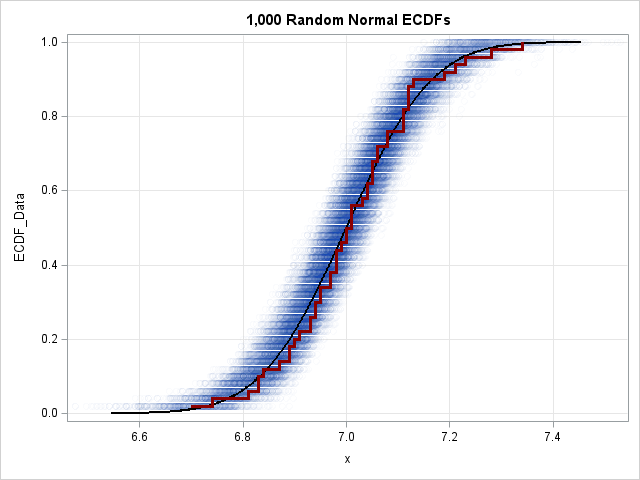

A previous article shows how to construct an empirical cumulative distribution function (ECDF) in SAS. The most common way is to use PROC UNIVARIATE, but you can also call the ECDF function in the SAS IML language. The ECDF is a tool for visualizing the distribution of a univariate sample.