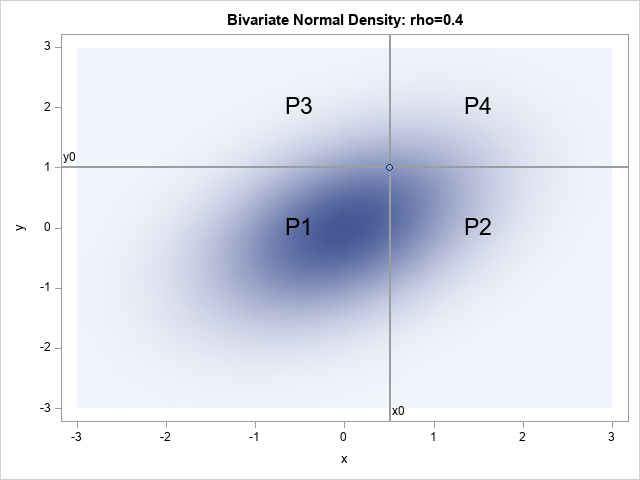

In general, it is difficult to compute a probability for a multivariate continuous distribution, because the probability is defined as a multiple integral of the density over a region, and that integral rarely has a closed-form solution. For example, the probability for a bivariate normal distribution requires integrating the bivariate normal density over a two-dimensional (2-D) region. The probability for