The SAS Data Science Blog

Advanced analytics from SAS data scientists

From fraud detection to fraud resolution: Building an AI voice agent with SAS Viya and LLMs

Learn how an AI voice agent built with SAS Viya and LLMs can transform fraud detection into real-time, automated fraud resolution through customer interaction and governed decisioning.

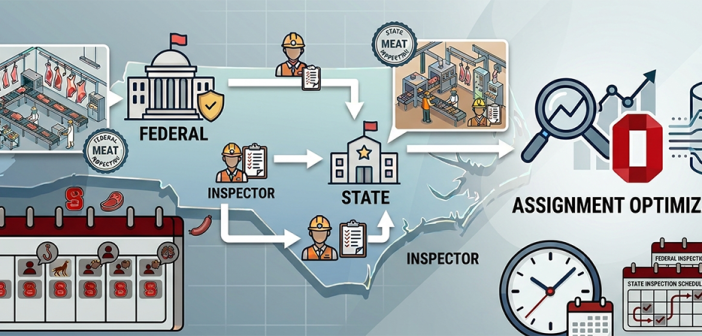

Optimizing inspector assignments using SAS Optimization in federal and state meat processing facilities

Shahrzad Azizzadeh and team discuss optimizing inspector assignments using SAS Optimization in federal and state meat processing facilities.

Using network analytics to analyze social interactions among needle sharers

This post demonstrates how network analytics can transform a simple link list into actionable insights for public health decision-making.