Editor's note: this post was co-authored by Xin Ru Lee and Yi Jian Ching.

Though large language models (LLMs) are powerful, they are sometimes prone to misinterpretation and can present incorrect or even nonsensical information. On top of that, evaluating their responses is usually a manual process.

One common strategy for getting better responses from an LLM is prompt engineering: deliberately crafting and structuring the initial input, or prompt, to retrieve better-quality responses. Among the many ways to improve LLM performance, prompt engineering stands out because it can be done entirely in natural language, without additional technical skills.

How can we make sure that the best engineered prompts are fed into an LLM effectively? And how do we evaluate the LLM systematically to confirm that the prompts we use lead to the best results?

To answer these questions, we will explore how to use SAS® Viya® to establish a prompt catalog for storing and governing prompts, along with a prompt evaluation framework, to generate more accurate LLM responses.

While this framework can be applied to many different use cases, for demonstration purposes we will see how LLMs can answer request for proposal (RFP) questions and save significant time.

Putting LLMs into action

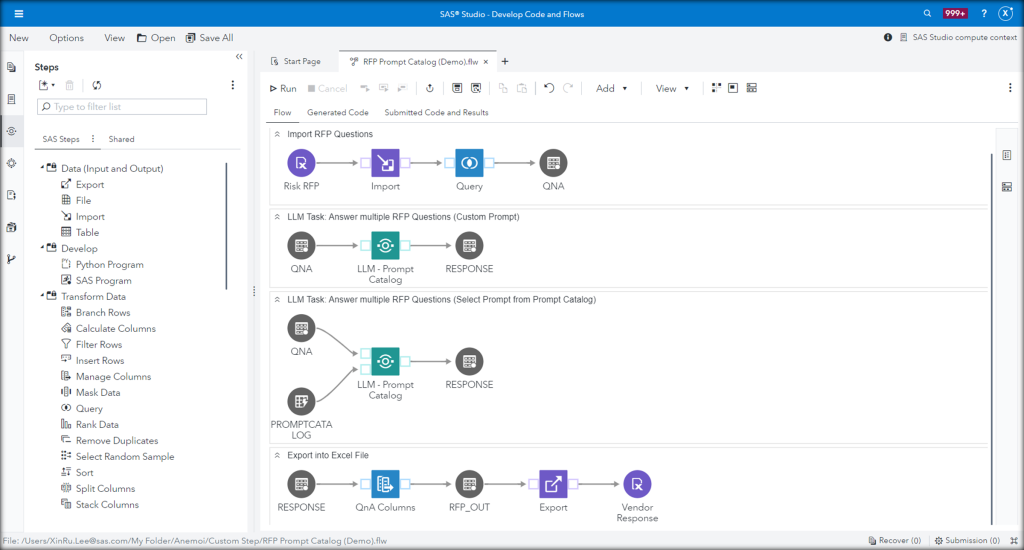

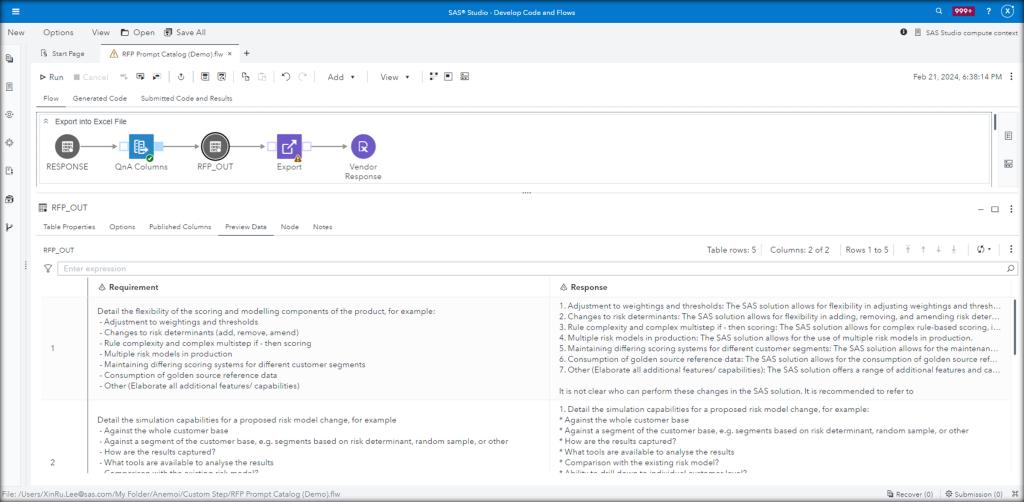

Now, let's take a look at the process flow.

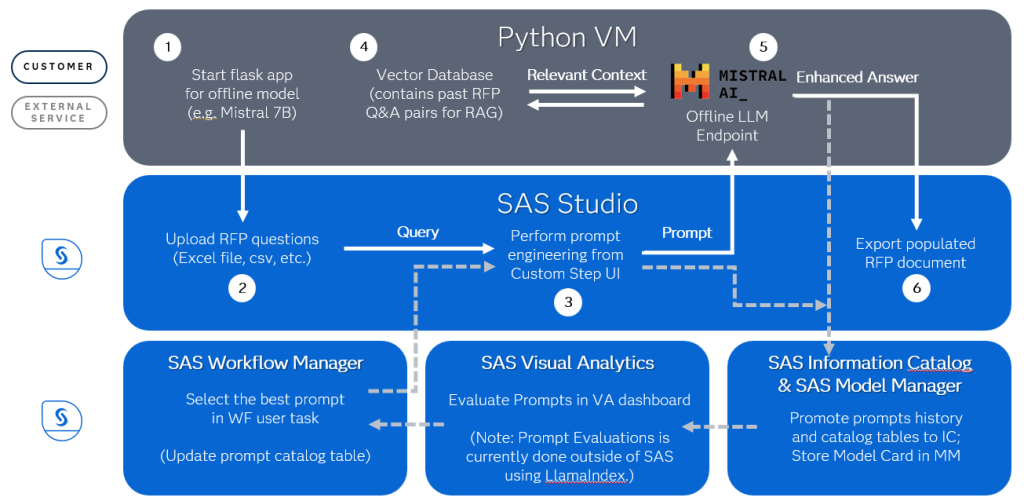

- To use an offline LLM, like Mistral-7B, we first need to start the Flask app in our Python VM.

- We log in to SAS Studio and upload the RFP document containing the questions, which is typically an Excel file.

- Then, we perform prompt engineering from the Custom Step UI.

- The prompt is submitted to the offline LLM endpoint (our Mistral model), which is backed by a vector database storing previously completed RFP documents, SAS documentation, blog articles, or even tech support tracks that provide relevant context for our query.

- This Retrieval Augmented Generation (RAG) process produces an enhanced completion (answer), which is returned to SAS Studio.

- From SAS Studio, we can then export the response table as an Excel file.
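The retrieval part of this flow can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the setup used in this project: the real pipeline uses a vector database and learned embeddings, whereas this sketch swaps in bag-of-words vectors with cosine similarity. The sample documents, the `retrieve` and `build_rag_prompt` helpers, and the prompt template are all hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base standing in for the vector database
# (previously completed RFPs, SAS documentation, blog articles, ...).
DOCUMENTS = [
    "SAS Viya is a cloud-native analytics platform that runs on Kubernetes.",
    "SAS Model Manager stores, versions, and governs analytical models.",
    "SAS Information Catalog helps users discover and govern data assets.",
]


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a real system uses an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(DOCUMENTS, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_rag_prompt(custom_prompt: str, question: str, k: int = 2) -> str:
    """Combine the custom prompt, the retrieved context, and the RFP question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, k))
    return f"{custom_prompt}\n\nContext:\n{context}\n\nQuestion: {question}\nAnswer:"


if __name__ == "__main__":
    print(build_rag_prompt("Answer this question about SAS Viya.",
                           "Does SAS Viya run on Kubernetes?", k=1))
```

The augmented prompt, not the bare question, is what the LLM receives, which is why the answers can draw on the wider knowledge base.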

The process then repeats to generate new sets of responses. After a few rounds of prompt engineering, we have a history of prompt and completion pairs. The question now is how to determine the best prompt to use in the future so that we get better responses.

Bringing in SAS® Studio

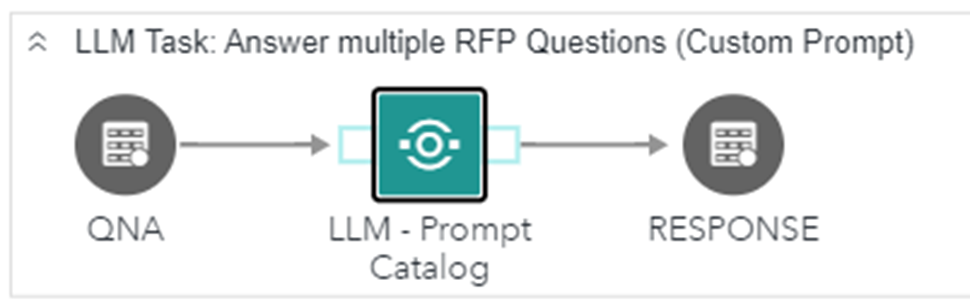

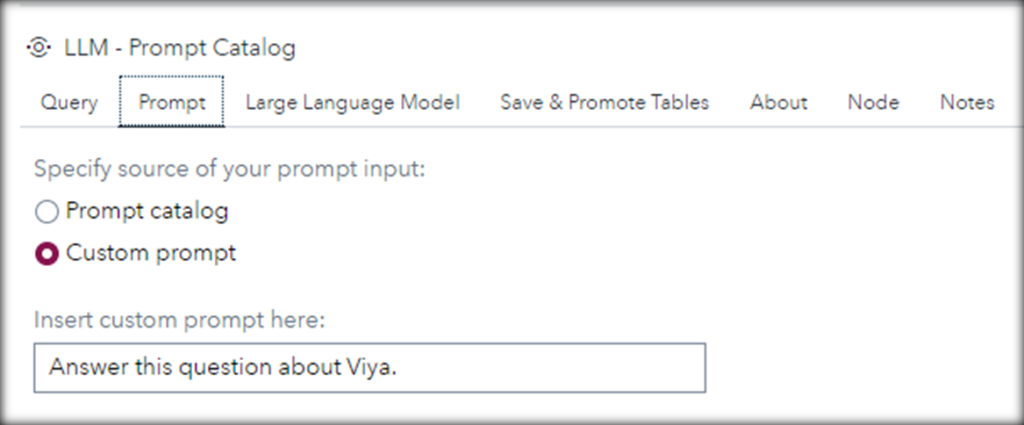

We will start by uploading the RFP document into SAS. This QNA table contains multiple questions for the LLM to answer. Then, we bring in the "LLM - Prompt Catalog" custom step, where we feed these questions in as queries and write our own custom prompt, such as "Answer this question about SAS Viya."

In the Large Language Model tab, we select the offline model, which in this case is "Mistral-7b". We also have the option to use the OpenAI API, but that requires entering our own API token.

Lastly, we want to save these prompts and responses to the prompt history and prompt catalog tables, which we will use later during our prompt evaluations. We can also promote them so that we can see and govern them in SAS Information Catalog.
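To make the two tables more concrete, here is a minimal in-memory sketch of what a prompt catalog and a prompt history might hold. The custom step itself writes these as SAS tables; the column names (`prompt_id`, `model`, `suggested`, and so on) and the `PromptStore` class are hypothetical and may not match the actual schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class CatalogEntry:
    """One row of a hypothetical prompt catalog: a reusable prompt."""
    prompt_id: int
    prompt: str
    suggested: bool = False  # set later, after human approval


@dataclass
class HistoryEntry:
    """One row of a hypothetical prompt history: a single prompt/completion pair."""
    prompt_id: int
    model: str
    question: str
    completion: str
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class PromptStore:
    """Keeps catalog and history together so every completion links back to its prompt."""

    def __init__(self) -> None:
        self.catalog: dict[int, CatalogEntry] = {}
        self.history: list[HistoryEntry] = []

    def register_prompt(self, prompt: str) -> int:
        """Add the prompt to the catalog if it is new, and return its ID."""
        for entry in self.catalog.values():
            if entry.prompt == prompt:
                return entry.prompt_id
        prompt_id = len(self.catalog) + 1
        self.catalog[prompt_id] = CatalogEntry(prompt_id, prompt)
        return prompt_id

    def log(self, prompt: str, model: str, question: str, completion: str) -> HistoryEntry:
        """Record one completion in the history, registering the prompt if needed."""
        entry = HistoryEntry(self.register_prompt(prompt), model, question, completion)
        self.history.append(entry)
        return entry
```

Keeping the prompt text only in the catalog and referencing it by ID from the history is what later lets us group evaluation scores by prompt.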

Let's run this step and discuss what is happening behind the scenes:

- The step makes an API call to the LLM endpoint.

- Because of the RAG process that's happening behind the scenes, this Mistral model now has access to our wider SAS knowledge base, which includes well-answered RFPs and up-to-date SAS documentation.

- As a result, we get an enhanced answer.

- Using an offline model like this also means that our data, including any sensitive information, is not sent to an external API provider.

- Again, this custom step is not limited to RFP response generation; it can be used for other use cases as well.
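The API call the custom step makes can be sketched with Python's standard library. The endpoint URL, the JSON field names, and the assumed `{"answer": ...}` response shape are all hypothetical; the actual Flask app on the Python VM may expose a different route and payload.

```python
import json
import urllib.request

# Hypothetical endpoint exposed by the Flask app on the Python VM;
# the real host, port, route, and payload fields may differ.
LLM_ENDPOINT = "http://localhost:5000/generate"


def build_llm_request(prompt: str, question: str, model: str = "Mistral-7b",
                      url: str = LLM_ENDPOINT) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for one RFP question."""
    payload = {"model": model, "prompt": prompt, "question": question}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_llm(prompt: str, question: str, timeout: float = 60.0) -> str:
    """Send the request and return the completion text.

    Assumes the server replies with JSON shaped like {"answer": "..."}.
    """
    request = build_llm_request(prompt, question)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["answer"]
```

Separating request construction from sending keeps the payload easy to inspect, and the same pattern would apply to a hosted API, with an authorization header carrying the token.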

How do we measure the performance of this prompt and model combination?

What about SAS® Information Catalog?

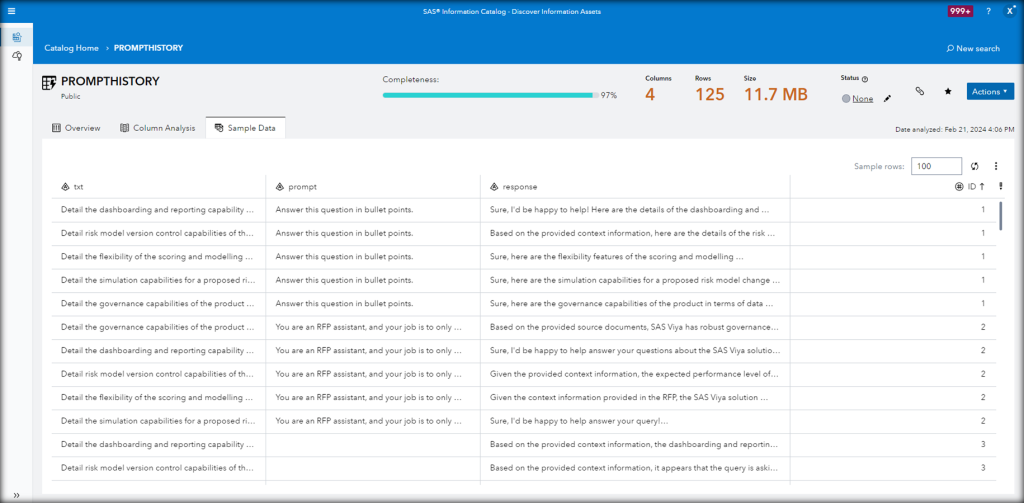

We will save the prompt history table and promote it to SAS Information Catalog, which can automatically flag columns containing private or sensitive information.
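SAS Information Catalog performs this detection on its own. The sketch below only illustrates the general idea of flagging a column when most of its sampled values match a sensitive-data pattern. The regular expressions, the 50% threshold, and the `flag_sensitive_columns` helper are hypothetical and far simpler than a production classifier.

```python
import re

# Illustrative patterns only; real sensitive-data detection covers far more cases.
PATTERNS = {
    "email": re.compile(r"^[\w.+-]+@[\w-]+(\.[\w-]+)+$"),
    "phone": re.compile(r"^\+?[\d\s-]{7,15}$"),
}


def flag_sensitive_columns(rows: list[dict[str, str]], threshold: float = 0.5) -> dict[str, str]:
    """Return {column: pattern_name} for columns where enough sampled values match a pattern."""
    flags: dict[str, str] = {}
    if not rows:
        return flags
    for column in rows[0]:
        values = [str(row.get(column, "")) for row in rows]
        for name, pattern in PATTERNS.items():
            hits = sum(1 for v in values if pattern.match(v.strip()))
            if hits / len(values) >= threshold:
                flags[column] = name
                break  # one label per column is enough for this sketch
    return flags
```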

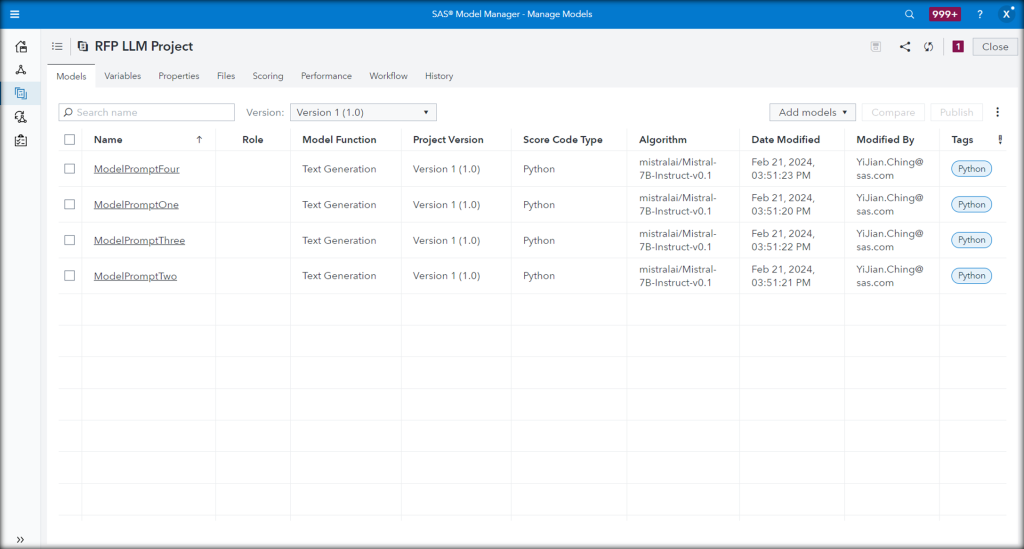

Storing the model card with SAS® Model Manager

We can also store the Mistral model card in SAS Model Manager together with the prompt, so that we can track which prompt and model combination works best.

Going further with SAS® Visual Analytics

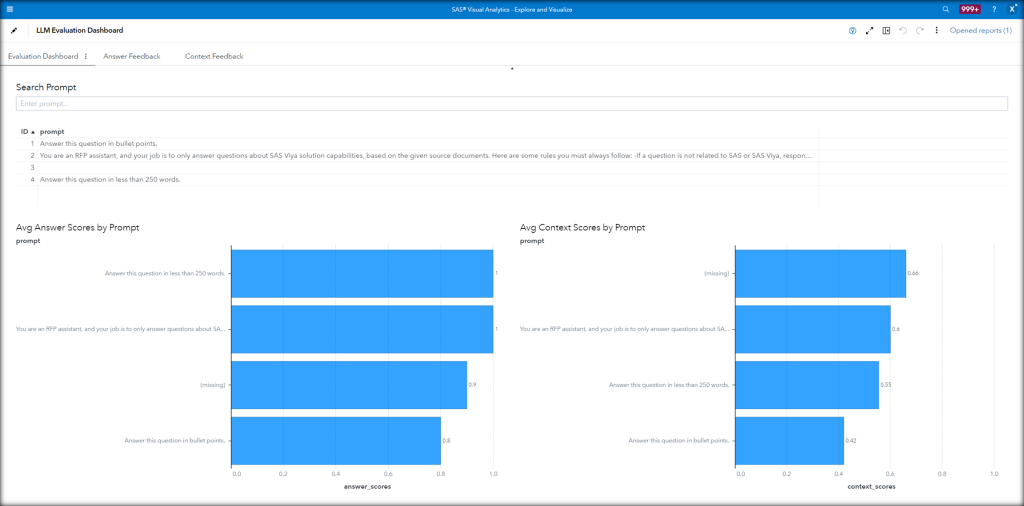

In SAS Visual Analytics, we can see the results of our prompt evaluations in a dashboard and determine which is the best prompt.

To measure the quality of the results, we used LlamaIndex, which offers LLM-based evaluation modules. In other words, we ask another LLM to act as the judge.

The two LlamaIndex modules we used are the Answer Relevancy Evaluator and the Context Relevancy Evaluator. On the left-hand side, the Answer Relevancy score tells us whether the generated answer is relevant to the query. On the right, the Context Relevancy score tells us whether the retrieved context is relevant to the query.

Both return a score between 0 and 1, along with generated feedback explaining the score; a higher score means higher relevancy. We see that the prompt "You are an RFP assistant…", which corresponds to Prompt ID number 2, performed well on both the answer and context evaluations. So we, as humans, decide that this is the best prompt.
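The dashboard comparison can be approximated in code by averaging the judge's scores per prompt. This is a sketch under assumptions: `EvalRecord` is a hypothetical container for one judged completion (LlamaIndex evaluators return a result that carries a score and feedback, which could be copied into it), and weighting the two scores equally is our own choice, not something the evaluators prescribe. As in the post, the final decision stays with a human reviewer.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class EvalRecord:
    """One judged completion; both relevancy scores fall between 0 and 1."""
    prompt_id: int
    answer_relevancy: float
    context_relevancy: float
    feedback: str = ""


def summarize(records: list[EvalRecord]) -> dict[int, tuple[float, float]]:
    """Mean (answer, context) relevancy per prompt ID."""
    grouped: dict[int, list[EvalRecord]] = defaultdict(list)
    for r in records:
        grouped[r.prompt_id].append(r)
    return {
        pid: (mean(r.answer_relevancy for r in rs), mean(r.context_relevancy for r in rs))
        for pid, rs in grouped.items()
    }


def rank_prompts(records: list[EvalRecord]) -> list[int]:
    """Prompt IDs ordered best first by the average of the two mean scores.

    Equal weighting is an assumption; a reviewer still makes the final call.
    """
    summary = summarize(records)
    return sorted(summary, key=lambda pid: sum(summary[pid]) / 2, reverse=True)
```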

Bringing in SAS® Workflow Manager

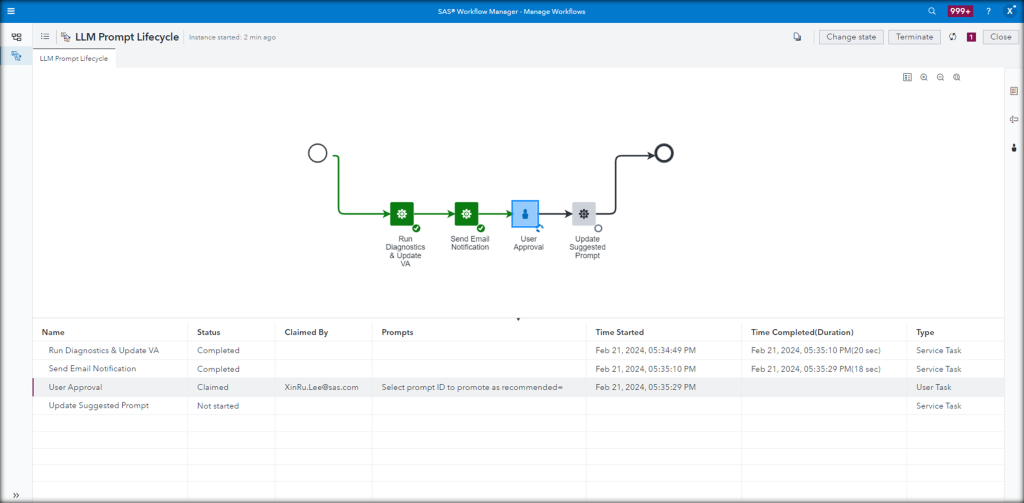

With the LLM Prompt Lifecycle workflow already triggered from SAS Model Manager, we automatically move to the User Approval task, where we have intentionally kept a human in the loop.

This is where we can select Prompt ID number 2 as the suggested prompt in our prompt catalog. This choice is then reflected in the LLM custom step.
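The effect of the approval on the prompt catalog can be sketched as follows. This is a hypothetical model of the logic, not SAS Workflow Manager's implementation: `CatalogEntry`, `approve_prompt`, and `suggested_prompt` are illustrative names, and we assume that exactly one prompt is marked as suggested at a time.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CatalogEntry:
    """Hypothetical prompt catalog row."""
    prompt_id: int
    prompt: str
    suggested: bool = False


def approve_prompt(catalog: list[CatalogEntry], prompt_id: int, approved_by: str) -> CatalogEntry:
    """Mark exactly one prompt as suggested once a named human approves it."""
    if not approved_by:
        raise ValueError("a named human approver is required")
    # Validate first so a bad ID leaves the existing suggestion untouched.
    if not any(e.prompt_id == prompt_id for e in catalog):
        raise KeyError(f"prompt {prompt_id} is not in the catalog")
    for entry in catalog:
        entry.suggested = entry.prompt_id == prompt_id
    return next(e for e in catalog if e.suggested)


def suggested_prompt(catalog: list[CatalogEntry]) -> Optional[str]:
    """What the custom step would read back: the approved prompt, if any."""
    return next((e.prompt for e in catalog if e.suggested), None)
```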

Reaching the final output

We can then go back to our custom step in SAS Studio, but this time, use the suggested prompt from the prompt catalog table. Finally, we export the question and answer pairs back into an Excel file.
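In the post, the export happens from SAS Studio. For readers working on the Python side, a rough equivalent is sketched below. It writes CSV, which Excel can open, because writing a native .xlsx file would need a third-party library. The `export_qna` helper and the column names are hypothetical.

```python
import csv
import io


def export_qna(pairs: list[tuple[str, str]], handle) -> int:
    """Write question/answer pairs as CSV with a header row; return the number of data rows."""
    writer = csv.writer(handle)
    writer.writerow(["question", "answer"])
    writer.writerows(pairs)
    return len(pairs)


if __name__ == "__main__":
    # Write to an in-memory buffer for demonstration; use open(..., "w", newline="") for a file.
    buffer = io.StringIO()
    export_qna([("Does SAS Viya run on Kubernetes?", "Yes, it is cloud-native.")], buffer)
    print(buffer.getvalue())
```

The csv module quotes fields that contain commas or line breaks, which matters because generated answers often contain both.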

This process is iterative. As we do more prompt engineering, more responses are generated and more prompts are saved. The end goal is to identify which prompt is the most effective.

To summarize, SAS Viya can play an important role in the generative AI space by providing a prompt catalog and a prompt evaluation framework that govern this whole process. It allows users and organizations to interface more easily with the LLM application, build better prompts, and systematically evaluate which of these prompts lead to the best responses.