The DO Loop

Statistical programming in SAS with an emphasis on SAS/IML programs

I have previously written about how to efficiently generate points uniformly at random inside a sphere (often called a ball by mathematicians). The method uses a mathematical fact from multivariate statistics: If X is drawn from the uncorrelated multivariate normal distribution in dimension d, then S = r*X / ||X|| has

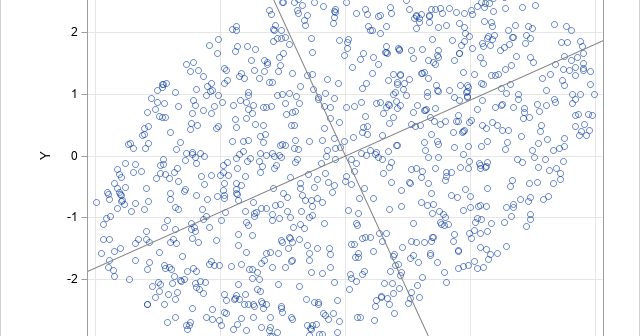

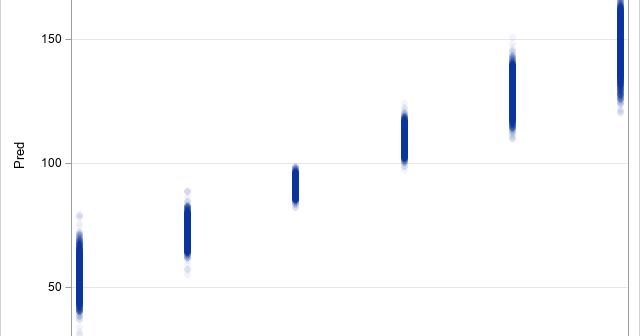

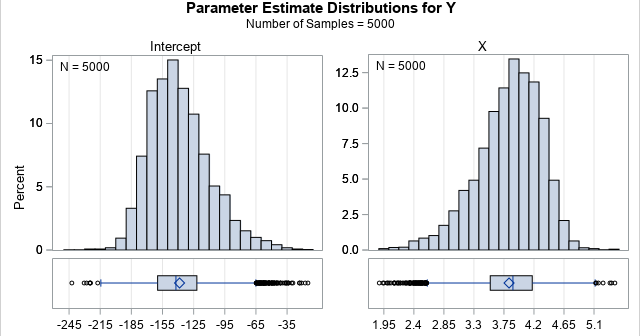

A previous article shows how to use the MODELAVERAGE statement in PROC GLMSELECT in SAS to perform a basic bootstrap analysis of the regression coefficients and fit statistics. A colleague asked whether PROC GLMSELECT can construct bootstrap confidence intervals for the predicted mean in a regression model, as described in

I've written many articles about bootstrapping in SAS, including several about bootstrapping in regression models. Many of the articles use a very general bootstrap method that can bootstrap almost any statistic that SAS can compute. The method uses PROC SURVEYSELECT to generate B bootstrap samples from the data, uses the