The real reason companies cleanse historical demand is that traditional forecasting solutions were unable to predict sales promotions or automatically correct the data for shortages or outliers. To address the shortcomings of traditional technology, companies embedded a cleansing process of adjusting the demand history for shortages, outliers, and

Search Results: data cleansing (127)

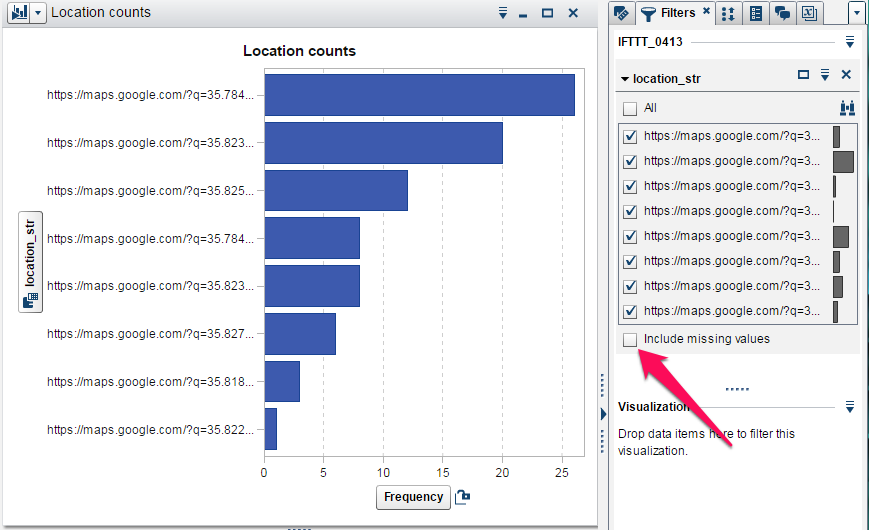

In part 1 of this series we looked at how to acquire personal data from the Internet of Things for our own exploration. But we found that the data was not yet ready for analysis, as is usually the case. In this part, we will look at how we can use SAS

Trustworthy AI is dependent on a solid foundation of data. If you bake a cake with missing, expired or otherwise low-quality ingredients, it will result in a subpar dessert. The same holds for developing AI systems to handle large amounts of data. Data is at the heart of every AI

Data quality is a cornerstone for integrating large language models (LLMs) into organizations. The adage "garbage in, garbage out" holds particularly true here. High-quality data is the lifeblood that ensures the accuracy, relevance, and reliability of the model's outputs. In a business context, this translates to insights and decisions that

Jim Harris takes a deep dive into data lakes and how they relate to the cloud.

Editor's note: Jack Liu is a member of SAS Analytics Explorers, a SAS community that is dedicated to exploring analytics, sharing knowledge, having fun and helping SAS users in their careers. Members were recently invited to share their analytics journeys, and Jack responded with his impressive story. If you're a

Note from Udo Sglavo: In our peace of mind blog series, we documented areas of analytics that are either evolving or not necessarily in the standard toolset of data scientists. We looked at causal modeling, network analytics, and econometrics, to name a few. With this blog post, we would like

Jim Harris shows how data, analytics and humans work together to form the "insight equation."

Data management has never been the shiny object that caught the imagination of the mainstream. And let’s be honest, it's not nearly as interesting as analytics, machine learning or artificial intelligence. In fact, entire movies get created about analytics, and people actually pay to see them! Data management? Not so

Put simply, data literacy is the ability to derive meaning from data. That seems like a straightforward proposition, but, in truth, finding relationships in data can be fraught with complexities, including: Understanding where the data came from, including the lineage or source of that data. Ensuring that the data meet compliance

Taking a decision is easy. However, living with the outcome might be problematic because every decision is a choice that leads us to an action that will affect others. And trust – what about this elemental human feeling? As children, we trust our parents. This kind of trust forms the

In the first post of a two-part series, Phil Simon plays point-counterpoint with himself.

Jim Harris shares examples of how and why AI applications are dependent on high-quality data.

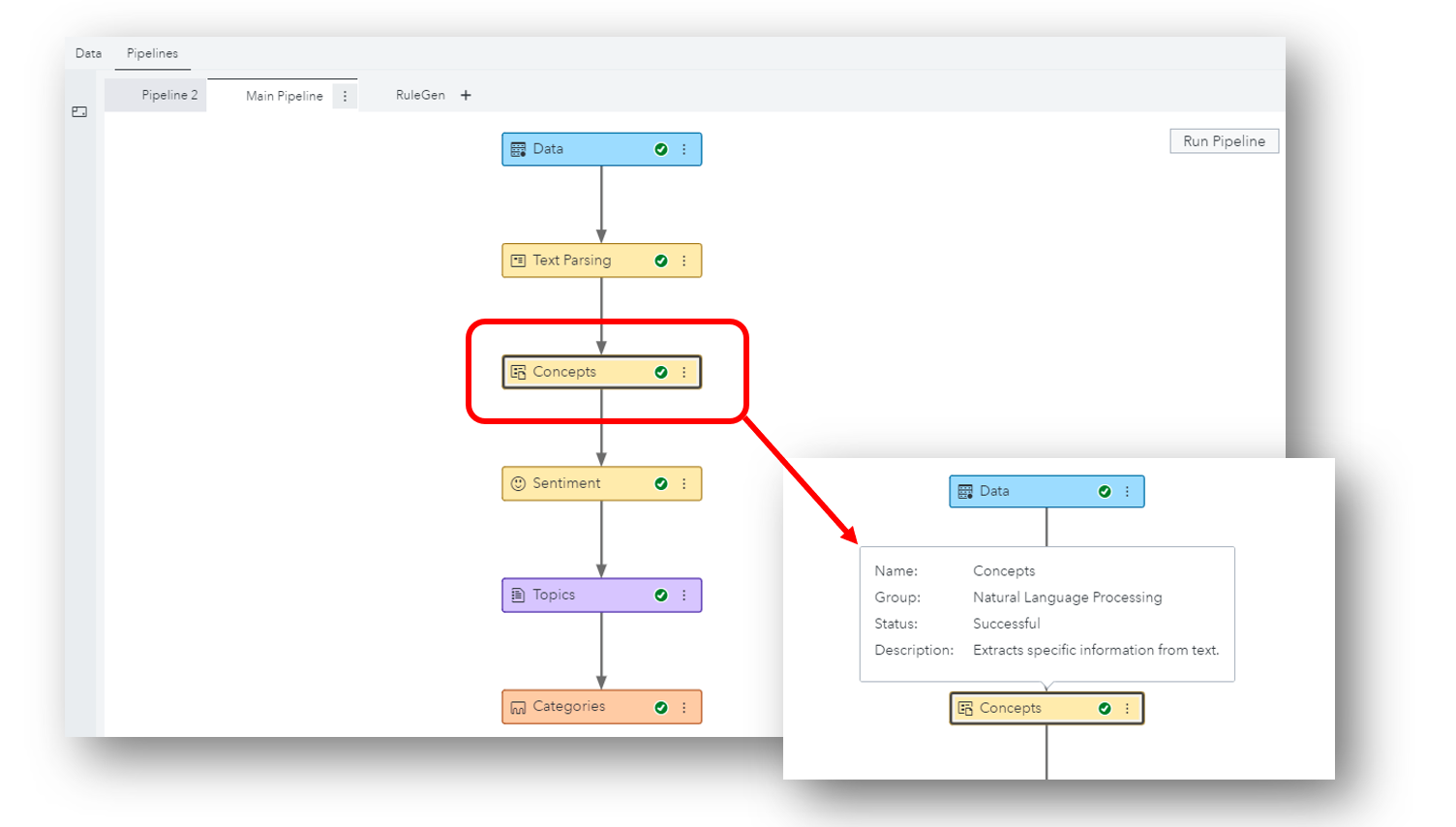

There is tremendous value buried in text sources such as call center and chat dialogues, survey comments, product reviews, technical notes, legal contracts... How can we extract the signal we want amidst all the noise?

Phil Simon chimes in with some tips on how to set these folks loose.

How should a data trust process work? David Loshin elaborates.

Jim Harris warns against allowing your data lake to become a poorly managed and ungoverned data dumping ground.

If you need more than just well-mixed data, take a look at data preparation from SAS.

David Loshin says entity resolution isn't a bandage to fix errors – it should be part of your data strategy.

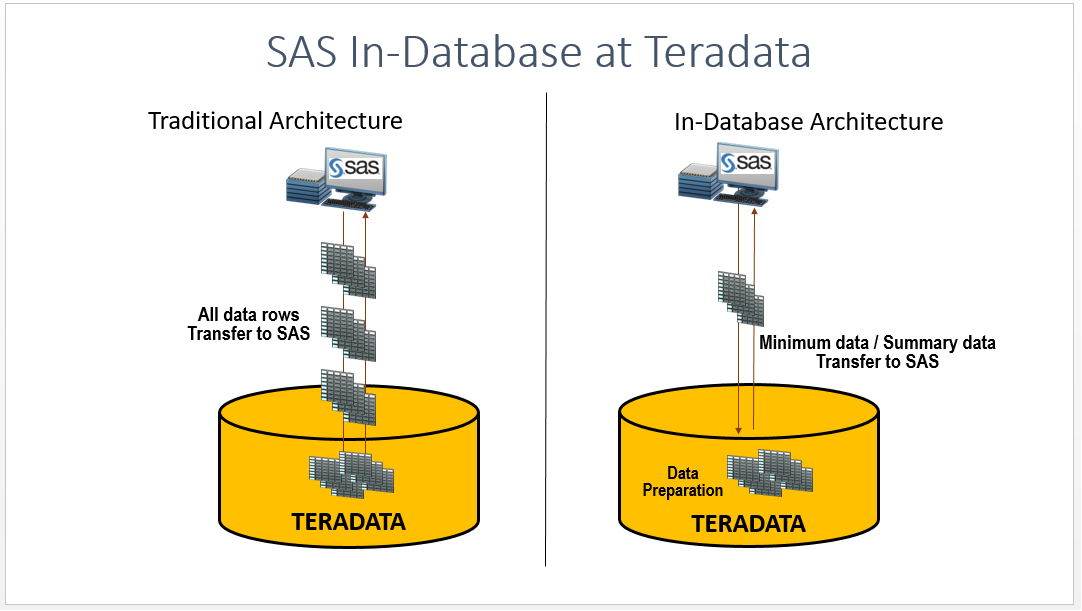

When using conventional methods to access and analyze data sets from Teradata tables, SAS brings all the rows from a Teradata table to SAS Workspace Server. As the number of rows in the table grows over time, it adds to the network latency to fetch the data from a database

What will 2018 unveil for the data management market? I searched expert opinions on technology trends for 2018 and matched them against my own to uncover the five major trends that I think we’ll see in data management this year: 1. Data movement becomes more important. Cloud providers have proven

To get full value from analytics programs, Todd Wright says be sure you can first access, integrate, cleanse and govern your data.

Jim Harris says more reusable data quality processes mean less reliance on IT and higher productivity across the board.

Get faster value out of your data by empowering business users to work with data on their own.

Joyce Norris-Montanari explains why it's so important to pick the right tools to manage your big data.

Helmut Plinke explains why modernizing your data management is essential to supporting your analytics platform.

Dylan Jones says spend time setting a vision of how to transform your data landscape – not debating definitions.

Data governance seems to be the hottest topic at data-related conferences this year, and the question I get asked most often is, “where do we start?” Followed closely by how do we do it, what skills do we need, how do we convince the rest of the organisation to get

Historically, before data was managed it was moved to a central location. For a long time that central location was the staging area for an enterprise data warehouse (EDW). While EDWs and their staging areas are still in use – especially for structured, transactional and internally generated data – big

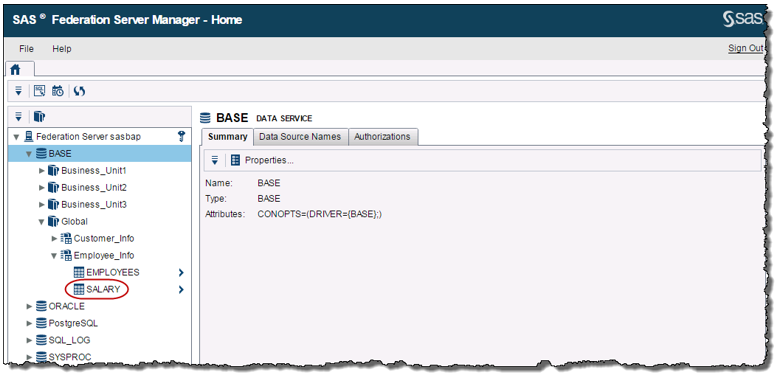

Data virtualization is an agile way to provide virtual views of data from multiple sources without moving the data. Think of data virtualization as another arrow in your quiver in terms of how you approach combining data from different sources to augment your existing Extract, Transform and Load (ETL) batch