Learn how Bayesian optimization works through a simple demo.

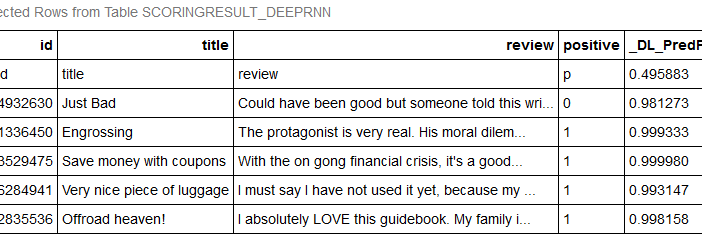

In this post, I use a recurrent neural network (RNN) to predict whether the opinion expressed in a given review is positive or negative. This prediction is treated as a text classification example. The sentiment classification model is trained using deepRNN algorithms, and the resulting model is used to predict whether new reviews are positive or negative.

There are four widely recognized styles of machine learning: supervised, unsupervised, semi-supervised and reinforcement learning. These styles have been discussed in great depth in the literature and are included in most introductory lectures on machine learning algorithms. As a recap, the table below summarizes these styles. For a comprehensive mapping

This is the first in a series of posts about machine learning concepts, where we'll cover everything from learning styles to new dimensions in machine learning research. What makes machine learning so successful? The answer lies in the core concept of machine learning: a machine can learn from examples and

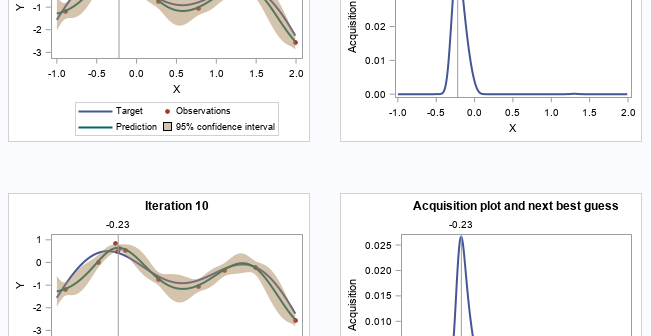

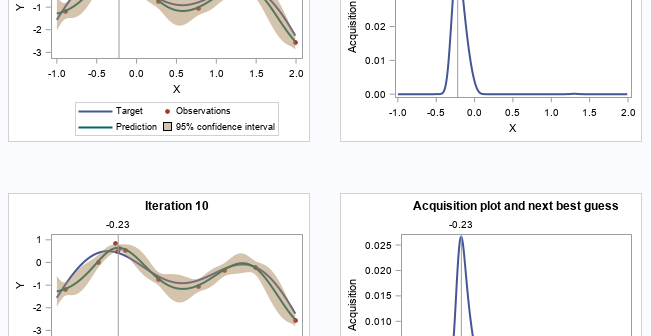

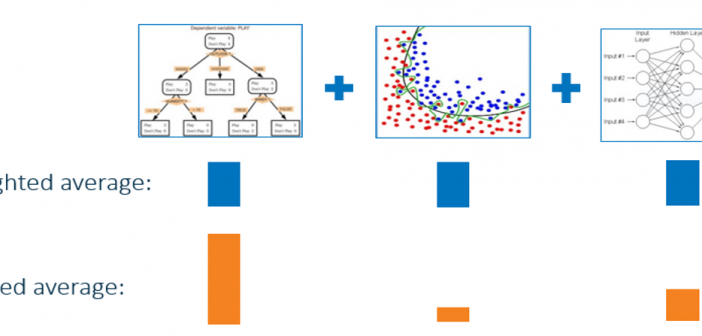

This is the fifth post in my series of machine learning best practices. Hyperparameters are the algorithm options one "turns and tunes" when building a learning model. They cannot be learned by the algorithm itself, so they must be set before the model is trained. A lot of manual

This is the fourth post in my series of 10 machine learning best practices. It’s common to build models on historical training data and then apply the model to new data to make decisions. This process is called model deployment or scoring. I often hear data scientists say, “It took

This is the third post in my series of machine learning techniques and best practices. If you missed the earlier posts, read the first one now, or review the whole machine learning best practices series. Data scientists commonly use machine learning algorithms, such as gradient boosting and decision forests, that automatically build

This is the second post in my series of machine learning best practices. If you missed it, read the first post, Machine learning best practices: the basics. As we go along, all ten tips will be archived at this machine learning best practices page. Machine learning commonly requires the use of

I started my training in machine learning at the University of Tennessee in the late 1980s. Of course, we didn’t call it machine learning then, and we didn’t call ourselves data scientists yet either. We used terms like statistics, analytics, data mining and data modeling. Regardless of what you call

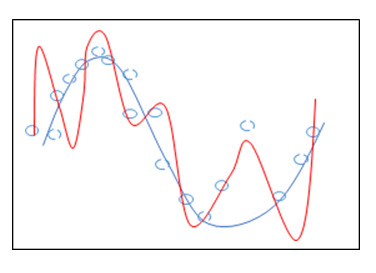

When building models, data scientists and statisticians often talk about penalty, regularization and shrinkage. What do these terms mean and why are they important? According to Wikipedia, regularization "refers to a process of introducing additional information in order to solve an ill-posed problem or to prevent overfitting. This information usually