Augusta Zhang and Jacek Dobrowolski also contributed to this article.

Automated computer vision technology is helping intelligence analysts stay one step ahead of gangs, terrorist groups, and other suspicious organizations.

Automation is at the heart of artificial intelligence. By helping humans automate repetitive tasks and make the most of their time, AI has resulted in remarkable successes that range from optimizing manufacturing supply chains to saving lives through improved cancer treatment strategies. Automation is embedded in many everyday processes to accelerate how we handle different tasks. A lot of research is being conducted in the fields of AutoML (automated machine learning), AutoDL (automated deep learning), AutoNLP (automated language processing), AutoSpeech (automated speech classification), and AutoCV (automated computer vision) to determine how machines can help us quickly solve challenges by interpreting our language, identifying images, and more.

At the core, these fields all share a similar process of learning from data. Machines can analyze data in depth thanks to hidden layers in neural networks, which find structures and regularities in the data and set weights so that an algorithm can acquire the skill to classify information or predict outcomes.

The challenge? Collecting high-quality data, and a lot of it.

Although AI is incredibly useful for extracting insights from our data, humans are still essential for setting up the systems and providing the right foundation for machines to do the work. One of the biggest challenges is providing enough unbiased, correct, labeled data for analysis.

For example, in order to train an object detection model that uses computer vision to find objects in images, a user must first provide many sample images. Traditionally, a user manually labels hundreds or thousands of images and stores information such as the position, size and class of the objects of interest. This task requires lots of time and tedious effort, which is a huge investment before getting started. As data scientists, our question is, can we automate the image annotation process?

Well, in some cases, yes!

Jacek and I were recently approached to develop a solution to detect logos of interest in the area of security intelligence. Intelligence analysts piece together information from a variety of sources, including millions of images collected from social media, in order to assess threats and protect national security and economic wellbeing. When a new, suspicious organization comes on their radar, it is important to keep track of the content that organization shares, including images that may be identified thanks to the presence of a specific logo. This is a perfect use case for object detection, but it’s a huge effort to collect and label enough data to train a computer vision model to recognize a logo. Plus, the process must be repeated many times because new logos of interest can potentially come up every day. How can we develop models fast enough to stay ahead of these quickly evolving threats?

Our solution and how it works

We knew that the fastest way we could get accurate models into production was to automate as much of the process as possible. We developed an automated pipeline that accelerates the creation of logo detection models by executing the following steps:

1. Create an artificial training set

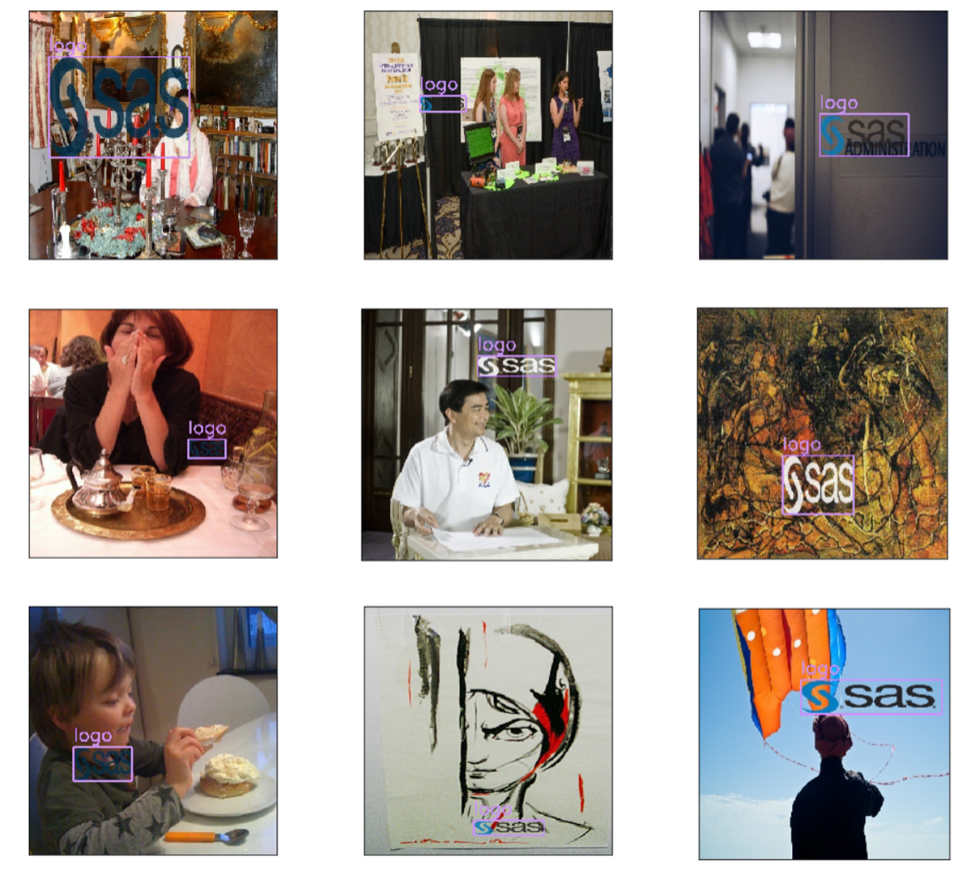

We ask the analyst to provide the logo of interest and any variations of it, and we overlay the logo on a set of background images. We randomly choose the size, position, rescaling factor, and opacity of the logo in each image to introduce some variation, and we keep track of where the logos are located through generated bounding boxes. The background images can be taken from publicly available datasets, like ImageNet, or can be more specific to the domain. In our case, they were images collected from social media. By creating an artificial training set this way, we can take a single logo and generate hundreds or thousands of images to train a model automatically! Here is an example of artificially generated images that contain a few variations of the SAS logo:
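For readers who want to experiment with the idea, the random overlay step can be sketched in plain Python. This is only an illustration, not the pipeline we ran: the function names, the scale and opacity ranges, and the normalized center-format label (a layout commonly used for YOLO-style training) are all assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class Placement:
    """Where and how one logo copy is pasted onto a background (in pixels)."""
    x: int          # left edge of the pasted logo
    y: int          # top edge of the pasted logo
    width: int      # logo width after rescaling
    height: int     # logo height after rescaling
    opacity: float  # 0.0 = invisible, 1.0 = fully opaque


def random_placement(bg_w, bg_h, logo_w, logo_h, rng,
                     scale_range=(0.1, 0.5), opacity_range=(0.5, 1.0)):
    """Pick a random size, position, and opacity that keep the logo in frame.

    scale_range is the logo width as a fraction of the background width.
    Both ranges are assumed values, not taken from the article.
    """
    width = max(1, round(bg_w * rng.uniform(*scale_range)))
    # Preserve the logo's aspect ratio, then shrink if it is too tall to fit.
    height = max(1, round(width * logo_h / logo_w))
    if height > bg_h:
        height = bg_h
        width = max(1, round(height * logo_w / logo_h))
    x = rng.randint(0, bg_w - width)
    y = rng.randint(0, bg_h - height)
    return Placement(x, y, width, height, rng.uniform(*opacity_range))


def blend(background_px, logo_px, opacity):
    """Alpha-blend one logo pixel over one background pixel, channel by channel."""
    return tuple(round(opacity * l + (1 - opacity) * b)
                 for l, b in zip(logo_px, background_px))


def yolo_label(p, bg_w, bg_h, class_id=0):
    """Convert a placement to a normalized (class, x_center, y_center, w, h) label."""
    return (class_id,
            (p.x + p.width / 2) / bg_w,
            (p.y + p.height / 2) / bg_h,
            p.width / bg_w,
            p.height / bg_h)


# Generate labels for three synthetic images from one 200x80 logo
# placed on 640x480 backgrounds.
rng = random.Random(0)
labels = [yolo_label(random_placement(640, 480, 200, 80, rng), 640, 480)
          for _ in range(3)]
```

Because the bounding box is derived from the same numbers used to paste the logo, every generated image comes with an exact label at no extra cost. In practice an imaging library would handle the resizing and pixel blending; the per-pixel `blend` function here only shows what the opacity parameter does.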

2. Train an object detection model on the artificial training set

For this task, we used a Tiny-YOLO model, since it showed a good balance between model size and accuracy. We followed the example available on the SAS Deep Learning Python (DLPy) GitHub page. DLPy is a Python library that allows you to quickly create deep learning models in SAS, with pre-trained weights provided, so it was perfect for accelerating our modeling process!

The results

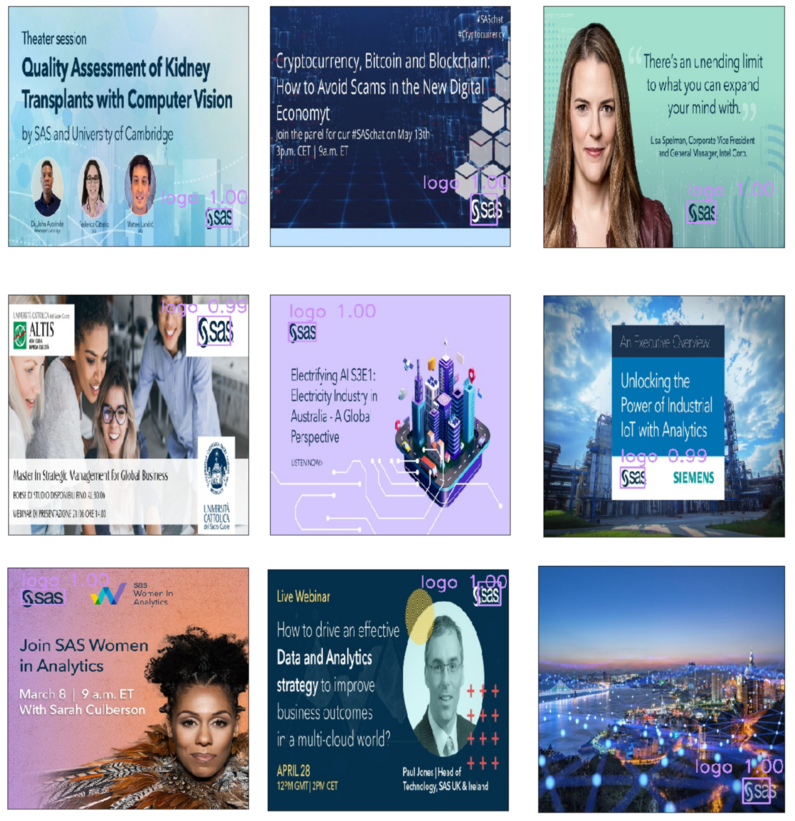

The idea is pretty simple, but it proved to be effective. To test it, we generated an artificial training set using the SAS logo. We were able to use a single image of the SAS logo to generate hundreds of new images in just a few minutes. Then, we trained an object detection model using the training set, and we scored it on new images, which weren’t used during model training. These test images came from SAS social media accounts. After training for just 13 minutes, we were able to achieve a very accurate model, with a recall of 93% and a precision of 93%. Here, you can some sample results. Each purple bounding box shows where the model predicts a SAS logo to be present, along with a confidence score between 0.00 and 1.00:

Surprisingly, the model proved to work really well not only on the logos found on neat marketing images, but also on photographs of real objects that include a SAS logo. For example, here we have business cards, a water bottle, a glass, a pen, and more.

On this dataset, both recall and precision are at about 94%.
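If you are wondering how precision and recall are computed for a detector, here is a minimal sketch for a single image. It assumes a predicted box counts as correct when its intersection over union (IoU) with a labeled box is at least 0.5, and that each labeled box can be matched only once. The threshold, the greedy matching, and the function names are assumptions for illustration; they are not a description of the scoring we used.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Greedily match predictions to labeled boxes, most confident first.

    predictions: list of (confidence, box); ground_truth: list of boxes.
    """
    unmatched = list(ground_truth)
    true_pos = 0
    for _, box in sorted(predictions, key=lambda p: p[0], reverse=True):
        # Find the still-unmatched labeled box that overlaps this prediction most.
        best = max(unmatched, key=lambda gt: iou(box, gt), default=None)
        if best is not None and iou(box, best) >= iou_threshold:
            unmatched.remove(best)
            true_pos += 1
    precision = true_pos / len(predictions) if predictions else 0.0
    recall = true_pos / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Two of three predictions overlap a labeled logo; one labeled logo is missed.
preds = [(0.9, (10, 10, 50, 50)),
         (0.8, (100, 100, 140, 140)),
         (0.3, (200, 200, 220, 220))]
truth = [(12, 12, 52, 52), (100, 100, 140, 140), (300, 300, 340, 340)]
precision, recall = precision_recall(preds, truth)
```

Precision is the share of predicted boxes that land on a real logo, and recall is the share of real logos that the model finds. Over a whole test set, the counts would be accumulated across images before dividing.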

Using SAS Viya in combination with open-source capabilities, we were able to develop an automated solution for logo detection that does not require any manual data labeling. The input is just a single image of the logo of interest, and the output is a powerful computer vision model that can be used to identify new images, whether you’re analyzing instances of a corporate brand or tracking the logos of suspicious organizations. In this way, we can speed up the analysis immensely and let the experts focus on what matters most: discovering hidden connections in the data to solve analytics challenges and keep all of us safe.

Want to learn more?

Check out this article to see how two data scientists at SAS built a multi-stage computer vision model to locate their canine friend Dr. Taco!