Data scientists naturally use a lot of machine learning algorithms, which work well for detecting patterns, automating simple tasks, generalizing responses and other data-heavy tasks.

As a subfield of computer science, machine learning evolved from the study of pattern recognition and computational learning theory in artificial intelligence. Over time, machine learning has borrowed from many other fields, including statistics.

Most of today’s algorithms have a history in various mathematical subfields. Many of these subfields overlap, but I’ve taken a stab at categorizing some popular algorithms.

| Field | Example Algorithms |
| --- | --- |
| Mathematics | Compressive Sensing; Optimization |
| Statistics | Maximum Likelihood; Regression; Maximum a Posteriori; Decision Trees* |
| Operations Research | Decision theory; Game theory |
| Artificial Intelligence | Neural Networks; Natural language processing |
| Signal Processing | Audio; Image and Video |

The difference between statistics and machine learning

I’ve read many articles describing the differences between statistics and machine learning. As a data scientist trained initially in applied statistics and later evolving to a career in data mining and machine learning, I find that most of the differences are subtle.

- Machine learning often starts with high-capacity, complex models. Then, instead of trying to find the “right” model, machine learning constrains the model using regularization to avoid overfitting. Avoiding overfitting is another way of saying that the model generalizes well and will work on future data.

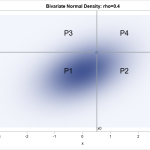

- Statistics starts with a model assumption that describes aspects of the data and generates a distribution. The distribution is used to answer a question of interest or could be used for predictions regarding the population from which the sample has been drawn. Uncertainty about parameters in the model or about predictions can be quantified.
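To make the regularization point in the first bullet concrete, here is a minimal sketch of ridge (L2) regularization for a one-feature linear model with no intercept. The function name `ridge_slope` and the small data set are made up for illustration; the only claim is the standard closed form, where the penalty `lam` is added to the denominator and pulls the slope toward zero.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for y ~ w * x (one feature, no intercept).

    lam = 0 gives ordinary least squares; larger lam shrinks w toward zero.
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Illustrative (made-up) data, not taken from the article.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [1.1, 1.9, 3.2, 3.8]

for lam in (0.0, 1.0, 10.0):
    print(f"lam={lam:>4}: slope={ridge_slope(xs, ys, lam):.4f}")
```

The slope gets smaller as `lam` grows, which is the sense in which regularization constrains a model rather than searching for a single “right” one.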

Machine learning is focused on predicting a response for future data. Statistics is focused on making inferences based on theoretical assumptions about the data along with well-defined processes for collecting the data.

In addition, machine learning often deals with sample sizes in the millions of observations or more. With such large sample sizes, standard statistical quantities such as confidence intervals lose much of their usefulness.
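One way to read this claim: the half-width of a normal-approximation confidence interval for a mean shrinks in proportion to 1/√n, so with millions of rows the interval becomes so narrow that almost any difference looks statistically significant. The sketch below assumes a known standard deviation of 10 (a made-up value) and the usual 1.96 multiplier for a 95% interval.

```python
import math


def mean_ci_half_width(std_dev, n, z=1.96):
    """Half-width of a normal-approximation confidence interval for a mean."""
    return z * std_dev / math.sqrt(n)


# std_dev=10.0 is an illustrative assumption, not a value from the article.
for n in (100, 10_000, 1_000_000):
    print(f"n={n:>9,}: +/- {mean_ci_half_width(10.0, n):.4f}")
```

Each 100-fold increase in sample size cuts the half-width by a factor of 10.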

But why is machine learning cool today?

Let’s face it: another key difference is that machine learning, with its relationship to AI, is a media darling. Statistics, on the other hand, gets far less media attention, while the hype and hip air around machine learning make it seem cooler.

One big step in the right direction for the brand image of statistics occurred when Trevor Hastie and Rob Tibshirani coined the term statistical learning. In The Elements of Statistical Learning, they explain how statistics evolved to extract patterns and trends from large quantities of data.

The academic lineage of machine learning, which came primarily out of the computer science world, also caused differences between the two fields. Because there was little cross talk between computer scientists and statisticians, different methods were developed to solve similar problems.

Jeers like the one in the tweet below, however, show that the ongoing debate between the two fields usually should not be taken too seriously.

Serious question: what if we’d rename a penalized logistic regression with splines to something like “intense curvature learning”, would it become more popular? Is it really a branding thing?

— Maarten van Smeden (@MaartenvSmeden) November 12, 2019

Instead, it’s useful to look at how the two fields can – and do – work together.

Augment your data science projects by using statistics

Many enterprises are focused almost entirely on machine learning when they could benefit from sprinkling in some tried and true statistical analyses. The following list describes opportunities where statistics can help augment your data science projects.

Experimental design

Experimental design is widely used in the fields of manufacturing, agriculture, biology, marketing research and others. In an experimental study, variables of interest are identified. One or more of these variables, referred to as the factors of the study, are controlled so that data may be obtained about how the factors influence another variable referred to as the response variable.

As an example, I worked on animal grazing trials as a graduate student. We studied the factors that affected the average daily gain of steers. The factors or treatments might be different varieties of grass. We used an experimental design, such as a simple randomized complete block design, to determine the effect of each treatment (grass variety) on the response, average daily gain. The treatments were randomly assigned and replicated across different pastures, as were the cattle. Based on the results of the trial, we could determine specific varieties to recommend to farmers.
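The randomization step of a randomized complete block design can be sketched in a few lines. This is only an illustration: the treatment and block names (`grass_A`, `pasture_1`, and so on) are hypothetical, and treating each pasture as a block is an assumption, not a description of the actual trial.

```python
import random


def randomized_complete_block(treatments, blocks, seed=None):
    """Assign every treatment once per block, in an independent random order."""
    rng = random.Random(seed)
    layout = {}
    for block in blocks:
        order = list(treatments)
        rng.shuffle(order)  # fresh randomization inside each block
        layout[block] = order
    return layout


# Hypothetical names for illustration only.
treatments = ["grass_A", "grass_B", "grass_C"]
blocks = ["pasture_1", "pasture_2", "pasture_3", "pasture_4"]

layout = randomized_complete_block(treatments, blocks, seed=42)
for block, order in layout.items():
    print(block, order)
```

Because every block contains every treatment, block-to-block differences can be separated from treatment effects in the analysis.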

I often see retail, telecommunications, banking and other customers do simple statistics like A/B testing. If you really want to understand the effect of a set of inputs (features) on a response (label) you can define an experiment and collect the data in a more controlled fashion.
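For reference, a simple A/B test on conversion rates often comes down to a two-proportion z-test. The sketch below uses only the standard library; the conversion counts are made up for illustration, and the pooled-variance form shown here is one common variant rather than the only option.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for a difference in proportions (pooled standard error)."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: 100/1000 conversions for A, 150/1000 for B.
z, p_value = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z={z:.2f}, p={p_value:.4f}")
```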

“The act of deciding ahead of time on a designed experiment, replicating, and randomizing the assignment of treatments to units gives us several advantages over machine learning,” explains David Dickey, William Neal Reynolds Distinguished Professor in the Department of Statistics at North Carolina State University.

Experimental design enables you to test multiple factors simultaneously and with the greatest efficiency. It’s also great for identifying interactions to isolate influence. As another example, online banking adoption in some countries has been difficult. A bank could conduct an experiment to investigate how users’ perception of online banking is affected by the perceived ease of use of the website and the privacy policy it provides.
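As a rough sketch of what “testing multiple factors and identifying interactions” looks like, consider a 2×2 factorial version of the banking example. The factor levels and the mean perception scores below are entirely made up; the calculation follows the usual definitions of main effects and the two-factor interaction from the four cell means.

```python
# Hypothetical mean perception scores for each factor combination (made up).
cell_means = {
    ("low_ease", "basic_policy"): 3.0,
    ("high_ease", "basic_policy"): 4.0,
    ("low_ease", "detailed_policy"): 3.5,
    ("high_ease", "detailed_policy"): 5.5,
}


def factorial_effects(m):
    """Main effects and interaction for a 2x2 factorial design."""
    ll = m[("low_ease", "basic_policy")]
    hl = m[("high_ease", "basic_policy")]
    lh = m[("low_ease", "detailed_policy")]
    hh = m[("high_ease", "detailed_policy")]
    ease_effect = ((hl + hh) - (ll + lh)) / 2      # average change from low to high ease
    policy_effect = ((lh + hh) - (ll + hl)) / 2    # average change from basic to detailed
    interaction = ((hh - lh) - (hl - ll)) / 2      # does ease matter more with a detailed policy?
    return ease_effect, policy_effect, interaction


ease, policy, interaction = factorial_effects(cell_means)
print(f"ease={ease}, policy={policy}, interaction={interaction}")
```

A nonzero interaction means the effect of one factor depends on the level of the other, which a one-factor-at-a-time A/B test cannot reveal.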

Survival analysis

Survival analysis is a branch of statistics that predicts the expected duration of time until one or more events happen. We see it used all the time in preventative maintenance to predict failure in a mechanical system, and in medicine to study the time until death from a disease. Supervised learning (classification or regression) tells you if something will happen. Why not go a step further and figure out when something is going to happen? A telecom company may want to use survival analysis to detect and deter churn. A bank may want to understand when a customer might go bad on their account.
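The article does not name a specific method, but the Kaplan-Meier estimator is one common starting point for “when” questions like churn. The sketch below is a minimal pure-Python version; the tenure data is made up, and `event_observed` uses 1 for an observed churn and 0 for a customer who is still active (censored).

```python
def kaplan_meier(durations, event_observed):
    """Return [(time, survival_probability)] at each time with an observed event."""
    data = sorted(zip(durations, event_observed))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        events = 0
        removed = 0
        # Group all subjects that leave the risk set at time t.
        while i < len(data) and data[i][0] == t:
            events += 1 if data[i][1] else 0
            removed += 1
            i += 1
        if events > 0:
            survival *= 1 - events / at_risk
            curve.append((t, survival))
        at_risk -= removed  # censored subjects leave the risk set too
    return curve


# Illustrative tenure in months; 0 marks customers who have not churned yet.
durations = [2, 3, 3, 5, 8]
event_observed = [1, 1, 0, 1, 0]

for t, s in kaplan_meier(durations, event_observed):
    print(f"month {t}: estimated P(still a customer) = {s:.2f}")
```

Censored customers still count toward the number at risk up to the time they were last observed, which is what lets survival methods use incomplete histories instead of discarding them.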

Survey analysis

Survey analysis studies the sampling of individual units from a population and associated techniques of survey data collection, such as questionnaire construction and methods for improving the number and accuracy of responses to surveys. You might consider augmenting your purchase propensity models by surveying a stratified random sample of your customers. You can then better segment your customers and identify those who might be likely to churn.
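Drawing a stratified random sample is straightforward to sketch. The example below assumes proportional allocation (the same sampling fraction in every stratum) and a hypothetical `segment` field on each customer record; real survey designs often use other allocation schemes.

```python
import random
from collections import defaultdict


def stratified_sample(records, stratum_of, fraction, seed=None):
    """Sample the same fraction from every stratum (at least one per stratum)."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for record in records:
        strata[stratum_of(record)].append(record)
    sample = []
    for members in strata.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical customer base: 20 "premium" and 80 "standard" customers.
customers = [
    {"id": i, "segment": "premium" if i % 5 == 0 else "standard"}
    for i in range(100)
]

survey_group = stratified_sample(customers, lambda c: c["segment"], 0.1, seed=7)
print(len(survey_group), "customers selected for the survey")
```

Stratifying guarantees that small but important segments are represented, which a simple random sample of the same size might miss.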

Concept drift

Concept drift means that the statistical properties of the label (response) that the model is trying to predict change over time in unforeseen ways. Many machine learning models begin to decay as soon as they are put into production for scoring. Evaluating model drift is a big component of MLOps. You should be monitoring changes in the population using metrics like analysis of means, Kolmogorov-Smirnov tests, and population stability indexes. Financial companies like Ford Motor Credit and Bank of Montreal have been doing this for decades. Many model performance metrics, like the C-statistic (ROC or Mann-Whitney), have roots in statistics, and you can use them to decide when to promote a challenger model or when to retrain the champion.
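Two of the monitoring metrics mentioned above are simple enough to sketch with the standard library. The function names, the binned counts, and the `eps` floor for empty bins are illustrative assumptions; in production you would more likely rely on an established library implementation.

```python
import math
from bisect import bisect_right


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI between two binned distributions that share the same bin edges."""
    total_e = sum(expected_counts)
    total_a = sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        pe = max(e / total_e, eps)  # floor avoids log(0) for empty bins
        pa = max(a / total_a, eps)
        psi += (pa - pe) * math.log(pa / pe)
    return psi


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in sorted(set(a) | set(b)):
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


# Made-up score-bin counts: training baseline versus last month's scoring data.
baseline = [100, 200, 400, 200, 100]
current = [50, 150, 400, 250, 150]
print(f"PSI = {population_stability_index(baseline, current):.4f}")
print(f"KS  = {ks_statistic([0.1, 0.4, 0.5, 0.7], [0.3, 0.6, 0.8, 0.9]):.2f}")
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a shift worth investigating, though thresholds vary by team and use case.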

Interpretability

Interpretability is a fast-growing field of research that addresses the most common criticism of machine learning models: that they are black boxes. Part of the problem is that the concept of interpretability is itself ill-defined and has been conflated with global variable importance and regression coefficients, as well as surrogate models.

When machine learning models are nonlinear, important variables and regression coefficients vary across samples – this is inevitable. Surrogate models attempt to find globally valid important variables, but often fail to match the accuracy of the ML models they try to explain.

In contrast, recent methods such as local interpretable model-agnostic explanations (LIME) focus on local variable importance and offer explanations of model decisions in a neighborhood of a single observation. More recently, Shapley values evaluate the average marginal contribution of a single variable across all possible combinations of variables (coalitions) that contain that variable. This is a powerful and principled probabilistic interpretation for any model.

The challenge is that the requirement to consider all possible coalitions makes exact computation of the Shapley values intractable. Fortunately, efficient approximations are available: SAS’s own patented HyperShap is among the best in this area.
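To see why exact computation becomes intractable, here is a brute-force sketch of the Shapley formula for a toy value function. The feature names and the value function `toy_value` are made up; this is the textbook enumeration over coalitions, not HyperShap or any other approximation method.

```python
from itertools import combinations
from math import factorial


def shapley_values(features, value):
    """Exact Shapley values by enumerating every coalition (cost grows as 2**n)."""
    n = len(features)
    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


def toy_value(coalition):
    """Made-up model payoff: additive parts plus an income x tenure interaction."""
    payoff = 0.0
    if "income" in coalition:
        payoff += 2.0
    if "age" in coalition:
        payoff += 1.0
    if "income" in coalition and "tenure" in coalition:
        payoff += 1.0
    return payoff


features = ["income", "age", "tenure"]
phi = shapley_values(features, toy_value)
print(phi)
```

The interaction payoff is split evenly between `income` and `tenure`, and the values sum to the payoff of the full coalition. With three features the loop visits only a handful of coalitions, but the count doubles with every added feature, which is why approximations are needed for real models.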

Machine learning and statistics working together

Statistics has many goals. One of the most important is describing relationships between data attributes, which is realized through statistical modeling. The modeling phase creates a unique overlap between the fields of statistics and machine learning. In both areas, you are trying to understand the data by using different modeling techniques to explain those relationships.

Statistics also lends itself to smaller data environments where there may not be as many attributes or as much data. On the other hand, machine learning often uses massive amounts of observational data with an emphasis on automation.

When you combine statistical methods and machine learning, you increase your options for solving problems and finding answers. And the more you work with the different areas, the more you understand about which techniques to use for particular types of problems.