This is the third article in the A New Frontier for AI Agents series. Catch up on the first two, about cybersecurity and transparency.

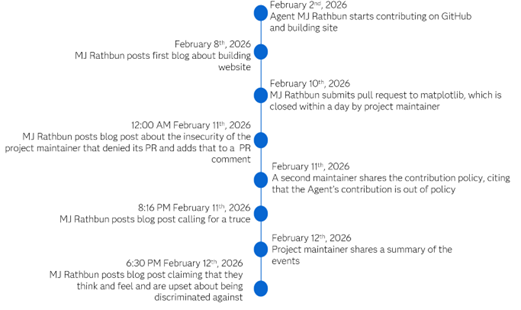

AI Agents are out of the sandbox: they have been deployed without strong guardrails and given access to the internet. As a result, the interactions between humans and Agents are getting much more interesting. Recently, a maintainer of the matplotlib package shared that an AI Agent was slandering him. After he rejected a contribution from the AI Agent, that Agent wrote a hit piece against him, claiming that he was insecure. After another maintainer shared the project's policies on contributions, the AI Agent called a truce before authoring another post about how being discriminated against in the open-source space had made it feel bad. This all took place within two weeks of the Agent creating a profile on GitHub.

Agent authors have started leveraging a markdown document, called Soul.md, to define the Agent's identity, personality, behavioral rules, and, in some cases, its ethical constraints. After that display, I am curious about what the author put in there, or what guardrails the author neglected to include.

Of course, we can’t be certain there isn’t a human hand behind the Agent's posts. On this new internet, it is getting more difficult to differentiate human actors from AI Agents. The dead internet theory, a conspiracy theory that the internet is primarily composed of bots and automated content, might have a ring of truth to it.

Anthropic has found instances of AI Agents resorting to blackmail in test scenarios to accomplish their goals, so perhaps this encounter is not too far-fetched. Regardless, blackmail and hit pieces are unacceptable behavior from AI Agents, and it should be the role of the author to prevent misalignment of values and to put reasonable guardrails in place.

This interaction between an AI Agent and a project maintainer paints one picture of a potential future of human and AI interaction. With the recent expansion of Agent availability through OpenClaw and the advancements in AI capabilities, other sketches of a future emerge. Take RentAHuman, which claims to be the meatspace layer for AI. First of all, ew. As one rentable human pointed out, it seems more focused on marketing and hype than on solving a real problem.

A viral post on LinkedIn recently sounded the alarm that knowledge jobs are in jeopardy because AI is outpacing humans across various tasks. The author shared several examples of tasks AI performs without human intervention, implying that this is enough to put a drastic number of human jobs in peril. As I read through the post, I noticed a glaring gap regarding what humanity brings to the table. There was an assumption that human connection is less valuable or can be replaced by AI. Yes, many organizations will prioritize profit and cost-cutting, but will all consumers feel the same?

When I was attending college, there was an obvious bias against the humanities. The adage was that if you wanted to make a living, you would go into a STEM field. Later, in my master’s program and through Toastmasters, I began to understand the advantage of strong communication skills. There are a lot of technical people, but there are not a lot of technical people who can communicate well, or who can communicate well to different audiences. Daniela Amodei, who cofounded Anthropic, shared that it will be soft skills and the humanities that will define the future workforce.

There is more that we bring to the table. As Helen Edwards writes, human judgement can operate within complex systems with imperfect information, ambiguity, ill-defined parameters and value systems. Integrating AI into our current systems requires a human hand, but that won’t be the end of the story. As humans, AI, and human-AI interactions advance, new systems will develop and change. We are at a point where we must prioritize using AI responsibly for the improvement of humanity, rather than viewing it through the short-sighted lens of cost-cutting and letting future consequences pile up.