Much of my recent work has been along the theme of modernization. Analytics is not new for many of our customers, but standing still in this market is akin to falling behind. In order to continue to innovate and remain competitive, organizations need to be prepared to embrace new technologies and ways of working. For example, one of my current banking customers is looking to transition their many different analytical systems into a modern, consolidated environment. This is to reduce infrastructure costs, share resources and provide a better understanding of how data are transformed in the organization for the purposes of compliance and regulation.

Modernization can be a complex endeavor, especially for an organization with a lot of legacy infrastructure, and that might be the topic of another blog post. In this article, I'll start by outlining some of the characteristics that underpin a modern platform for analytics.

-

The platform is scalable and can grow

When designing an analytics platform you should future-proof your investment by designing it with scalability in mind, at all tiers of the architecture. You have to assume that data volumes will increase, even if only through organic growth. The types of data sources you need to consider may also increase: for example, structured data, unstructured data, image files and streaming data from the Internet of Things. The need to process data efficiently will increase with data volumes and, as analytics becomes more pervasive and further democratized, the number of users and consumers will also continue to grow.

Scaling to address these growing demands can be achieved in two ways: scaling vertically means increasing the power of the machine that you are using; scaling horizontally means having the ability to add more machines to the environment and distribute the workload across them. Modern analytics environments tend to be distributed architectures that support massively parallel processing (MPP) and have the ability to scale horizontally built into the design.
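To make the idea of horizontal scaling a little more concrete, here is a minimal Python sketch of the scatter-and-combine pattern that distributed platforms rely on. It is only an illustration under stated assumptions: worker threads stand in for separate machines, and the function names (`partition`, `partial_sum`, `distributed_sum`) are hypothetical, not part of any product.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(records, n_workers):
    """Split records into n_workers chunks, dealing them out round-robin."""
    return [records[i::n_workers] for i in range(n_workers)]


def partial_sum(chunk):
    """The work one 'machine' does on its own share of the data."""
    return sum(chunk)


def distributed_sum(records, n_workers):
    """Scatter chunks to workers, then combine their partial results.

    Assumption: threads stand in for machines. In a real MPP platform
    each chunk would live on, and be processed by, a different node.
    """
    chunks = partition(records, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(partial_sum, chunks))
    return sum(partials)


if __name__ == "__main__":
    data = list(range(1, 1001))
    # Adding workers changes how the work is spread, not the answer.
    print(distributed_sum(data, n_workers=4))
    print(distributed_sum(data, n_workers=8))
```

The point of the sketch is that the calling code does not change when `n_workers` grows: capacity is added by adding workers, which is what having horizontal scaling built into the design means in practice.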

-

The environment provides access to a variety of data sources

Clive Humby discussed Data as the New Oil back in 2006 and since then a number of articles have discussed how insight into data is the fuel for innovation in many industries. Where is the next ‘data well’ in your enterprise that you need to explore and mine? Sometimes we don’t know how useful data is going to be until we do some of that exploratory work and a modern platform accounts for this. It provides access to a variety of data sources that may be in different formats and provides a framework for bringing this data together for analysis.

-

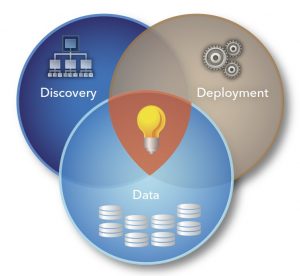

The platform supports the analytical lifecycle through data, discovery and deployment

There are three key phases of the analytical lifecycle beginning with the preparation of the data. The previous trait focused on getting access to the data; here we are talking about data ingestion, cleaning, manipulation and transformation, so that the data are fit for purpose. Of course, we may not know the data very well in the first place and that’s why data exploration tools can be very useful in this phase. The discovery phase provides data scientists with the freedom within the environment to delve deep into the data and generate analytics insight and models that explain the data and make predictions. Finally, deployment is about taking the analytics from the discovery phase and deploying this into a production service for operational analytics. The story doesn’t end there though; this is very much an iterative cycle across the phases of data, discovery and deployment. Learning from the results and continuing to improve and refine the insight that the platform provides is an essential characteristic of the analytical lifecycle.

-

There are a variety of analytical tools available to support a range of end user needs

There are different roles to be filled as part of the analytics lifecycle and there are different profiles for how someone may want to interact with the data. A modern environment supports a continuum of users and expertise with the appropriate tools and interfaces. Some users may only be consumers of information and reports. Others may need a quick and dirty way of graphically interacting with the data to perform exploration and visualization. Then, there is the community who may develop and leverage complex algorithms on the platform using advanced analytics techniques such as machine learning. It’s worth highlighting that with the right modern visual interfaces you don’t need to be an expert in analytics to find insight and business value in data. The range of tooling on the platform should cater for the business across the entire analytical lifecycle.

-

The environment supports a shared services operating model

Many of the customers that I visit have quite fragmented data and application landscapes that have evolved over years, if not decades. The problem with this organic evolution is that it can be quite difficult and costly to maintain all of the infrastructure, software and data. One of the trends that I’ve observed with my customers (and one that I often recommend) is a move to a consolidated analytics platform that is shared across a variety of business areas, i.e. a shared service. There are many benefits of operating under a shared services framework to both the business and IT. Sharing resources (infrastructure, software, people) generally means you can sweat your assets harder and provide a more efficient and agile service to the business by virtue of only having one platform to maintain.

-

A modern environment is a governed environment

Everyone knows that we need governance but often there isn’t a clear definition of what this means or what to do to get it. There are three key areas that an analytics platform needs to consider with respect to governance:

- IT governance is about the alignment of IT to the business objectives and ensuring that the decision making, accountability and processes are in place to make this happen.

- Data governance ensures compliance of an organization’s information assets with standards, policies and procedures.

- Analytic governance provides control and monitoring over analytics assets such as algorithms and models running in production.

Notwithstanding the compliance and the regulation of data in some industries, a modern platform has a framework in place for addressing governance.

-

There are different engines for processing data in different ways such as in-memory, in-database and real-time streaming

We’ve discussed access to different data sources but a modern analytics environment should also cater for processing data in a variety of different ways. In-memory solutions load data into the memory of a computer. With data in-memory, processing speeds are greatly enhanced and end users experience excellent performance across a range of analytic and visualization functions. With in-database technology, the processing is passed down to where the data is stored. This reduces data movement and allows calculations to run in the language that is native to the database. Real-time streaming data processing allows you to process a continuous stream of events. Examples of this might be social media feeds, stock market information or sensor data. Your analytics environment won’t necessarily need to process data in all these different ways but it should be able to accommodate the best ways to meet your business needs.
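As a rough illustration of the three processing styles, the Python sketch below computes the same per-sensor average in each of them. Everything in it is an assumption made for the example: the sample `readings`, the function names, and the use of the standard library's `sqlite3` module as a stand-in for an enterprise database. It is not how any particular platform implements these engines.

```python
import sqlite3

# Hypothetical sensor readings: (sensor id, measured value).
readings = [("s1", 20.0), ("s2", 30.0), ("s1", 22.0), ("s2", 34.0)]


def in_memory_mean(rows):
    """In-memory: the full data set is already loaded; aggregate it directly."""
    totals = {}
    for sensor, value in rows:
        total, count = totals.get(sensor, (0.0, 0))
        totals[sensor] = (total + value, count + 1)
    return {sensor: total / count for sensor, (total, count) in totals.items()}


def in_database_mean(conn):
    """In-database: push the calculation down as SQL; only results move."""
    cur = conn.execute(
        "SELECT sensor, AVG(value) FROM readings GROUP BY sensor"
    )
    return dict(cur.fetchall())


def streaming_mean(events):
    """Streaming: update a running mean per sensor as each event arrives."""
    state = {}
    for sensor, value in events:
        mean, count = state.get(sensor, (0.0, 0))
        count += 1
        mean += (value - mean) / count  # incremental mean update
        state[sensor] = (mean, count)
        yield sensor, mean


# SQLite stands in for the database here (assumption for the example).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE readings (sensor TEXT, value REAL)")
conn.executemany("INSERT INTO readings VALUES (?, ?)", readings)

if __name__ == "__main__":
    print(in_memory_mean(readings))
    print(in_database_mean(conn))
    for sensor, mean in streaming_mean(iter(readings)):
        print(sensor, mean)
```

All three routes arrive at the same averages. What differs is where the data sits when the calculation happens and whether you have to wait for the whole data set before you get an answer, which is the trade-off to weigh against your business needs.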

SAS® Viya™ is the new analytics platform from SAS announced at SAS Global Forum 2016. With innovation being the key to the success of our customers, we have applied that same philosophy to the development of our software leading to the SAS Viya Platform. Along with our SAS 9 technology, SAS provides a modern analytics platform that addresses all of the traits (and more) that we have discussed here. What do you think of the traits outlined above? Let me know in the comments below.

2 Comments

I think you have hit the nail on the head here with these 7 traits. I think one area which could have been touched on more is openness and collaboration to complement trait #4. SAS Viya most certainly supports openness and collaboration which is key for driving innovation and rapid development cycles.

Hi Jonathan, thanks for the feedback and I do agree with your comments. The integration with open source is clearly very important and I like your reference to rapid development cycles. This is interesting from a DevOps and agile development perspective which represent 'modern' ways of working.