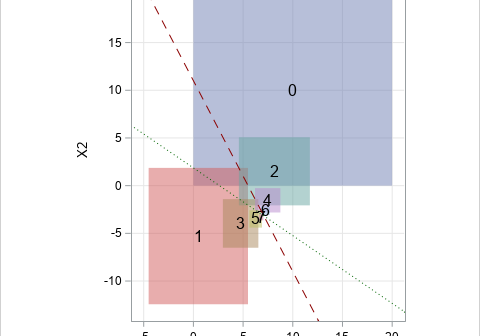

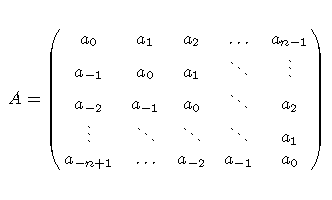

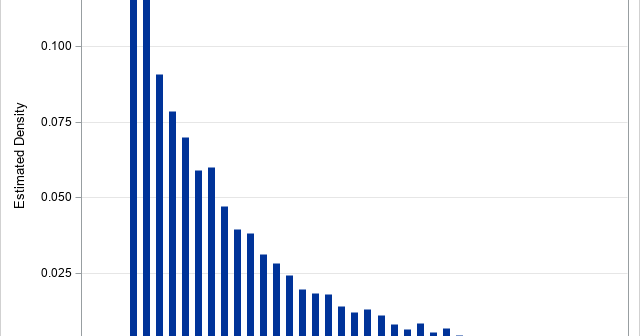

The first-order autoregressive (AR(1)) correlation structure is important for applications in time series modeling and for repeated measures analysis. The AR(1) model describes a simple situation in which measurements on the same subject that are closer together in time are more strongly correlated than measurements recorded far apart. The AR(1) model uses