Do you want to spend less time on the tedious task of preparing your data? I want to tell you about a magical and revolutionary SAS macro called %TK_codebook. Not only does this macro create an amazing codebook showcasing your data, it also automatically performs quality control checks on each variable. You will easily uncover potential problems lurking in your data including variables that have:

- Incomplete formats

- Out of range values

- No variation in response values

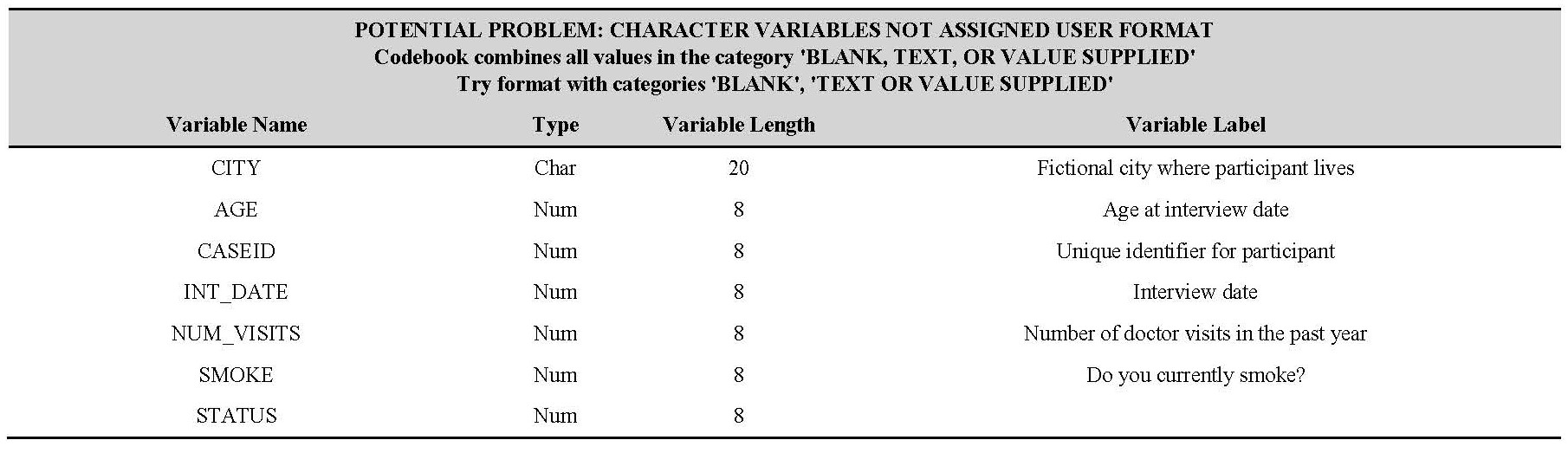

- Variables missing an assigned user-defined format

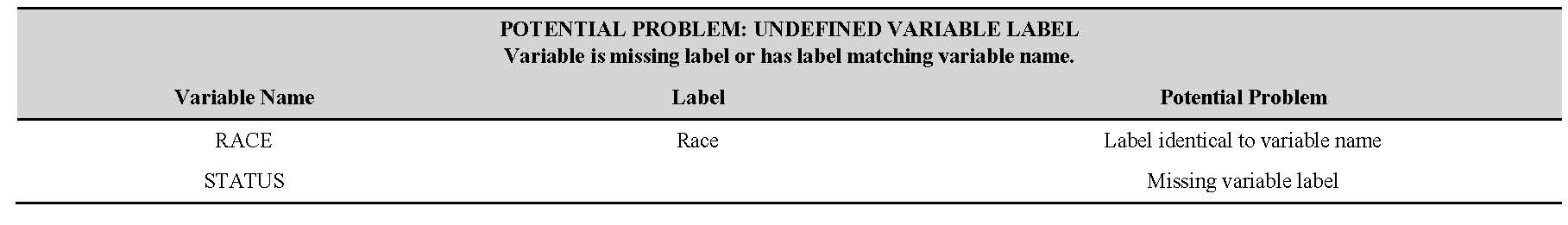

- Variables that are missing labels

All you need is a SAS data set with labels and formats assigned to each variable and the accompanying format catalogue. Not only will this macro change the way you clean and prepare your data, but it also gives you an effortless way to evaluate the quality of data you obtain from others before you start your analysis. Look how easy it is to create a codebook if you have a SAS data set with labels and formats:

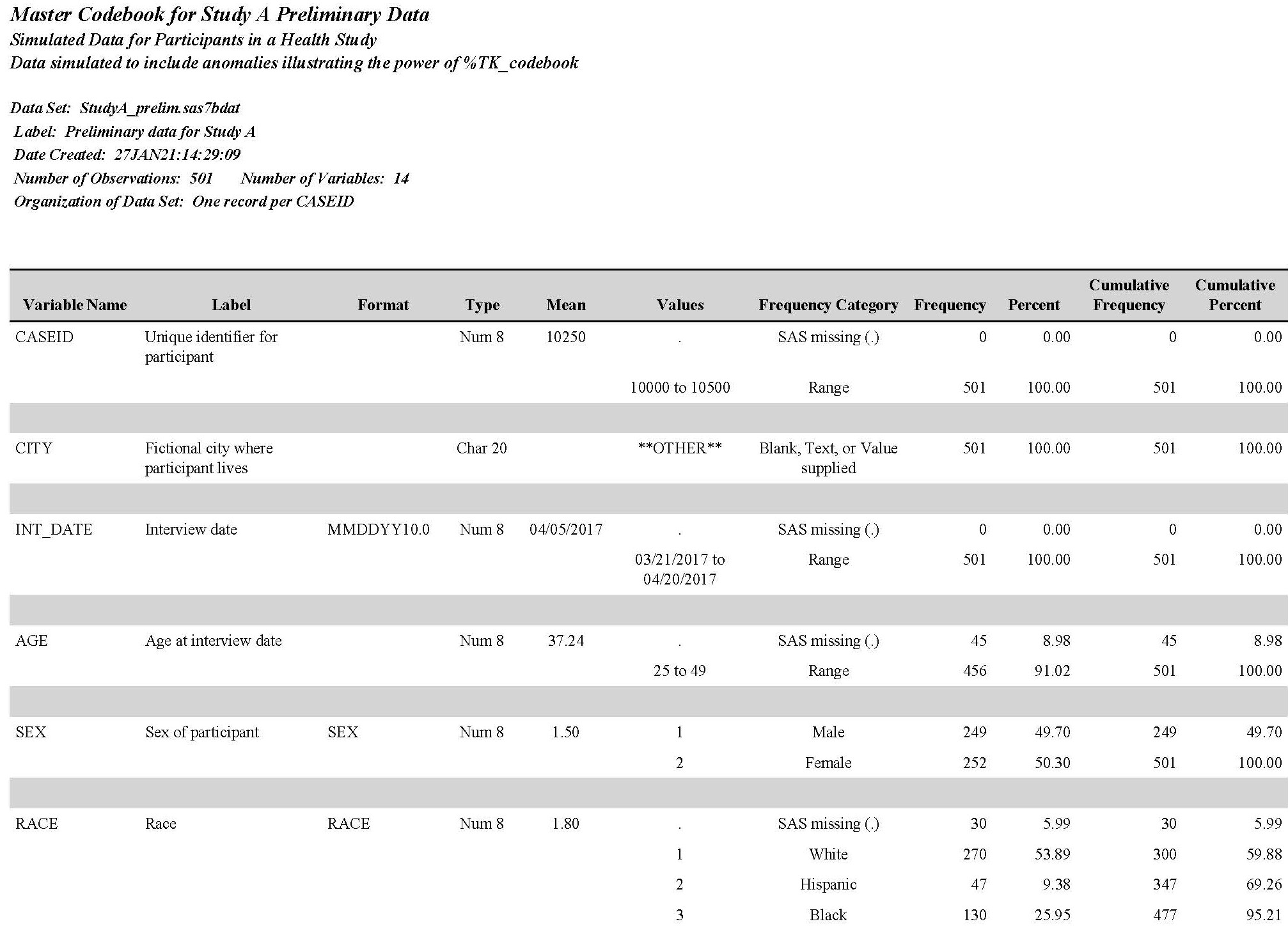

title height=12pt 'Master Codebook for Study A Preliminary Data'; title2 height=10pt 'Simulated Data for Participants in a Health Study'; title3 height=10pt 'Data simulated to include anomalies illustrating the power of %TK_codebook'; libname library "/Data_Detective/Formats/Blog_1_Codebooks"; %TK_codebook(lib=work, file1=STUDYA_PRELIM, fmtlib=LIBRARY, cb_type=RTF, cb_file=/Data_Detective/Book/Blog/SAS_Programs/My_Codebook.rtf, var_order=INTERNAL, organization = One record per CASEID, include_warn=YES; run; |

Six steps create your codebook

After creating titles for your codebook, this simple program provides %TK_codebook with the following instructions:

- Create a codebook for SAS data set STUDYA_PRELIM located in the temporary Work library automatically defined by SAS

- Find the formats assigned to the STUDYA_PRELIM in a format catalogue located in the folder assigned to the libref LIBRARY

- Write your codebook in a file named /Data_Detective/Book/Blog/SAS_Programs/My_Codebook.rtf

- List variables in the codebook by their INTERNAL order (order stored in the data set)

- Add “One record per CASEID” indicating which variable(s) uniquely identify each observation to codebook header

- Include reports identifying potential problems in the data

Just these few lines of code will create the unbelievably useful codebook shown below.

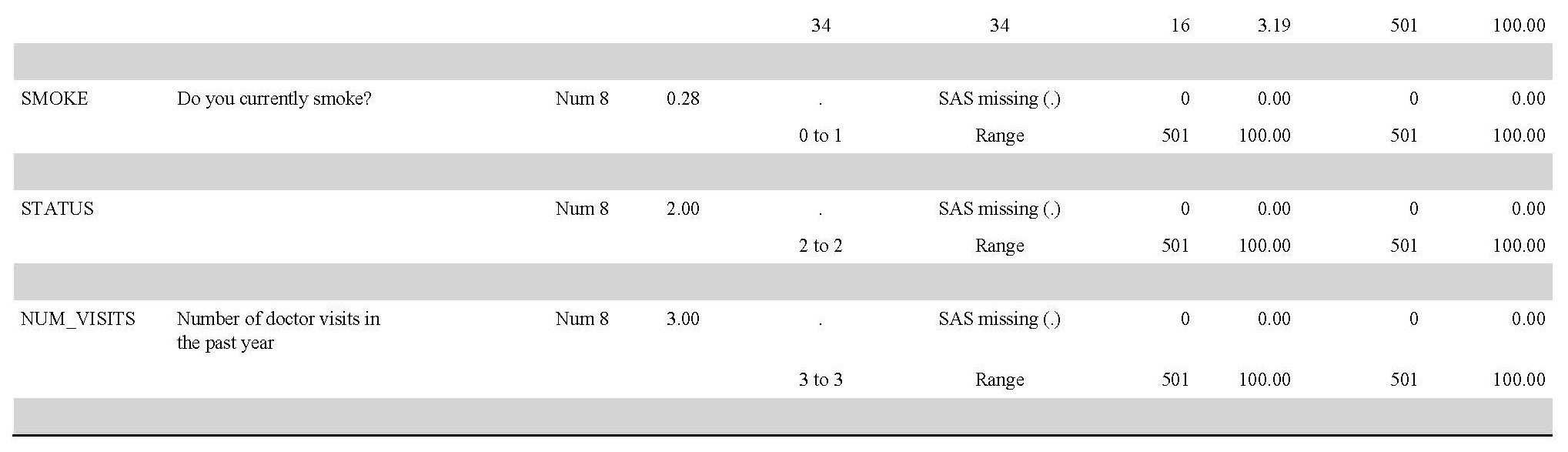

The data set used has many problems that can interfere with analysis. %TK_codebook creates reports showing a concise summary of only those problem variables needing close examination. These reports save you an incredible amount of time.

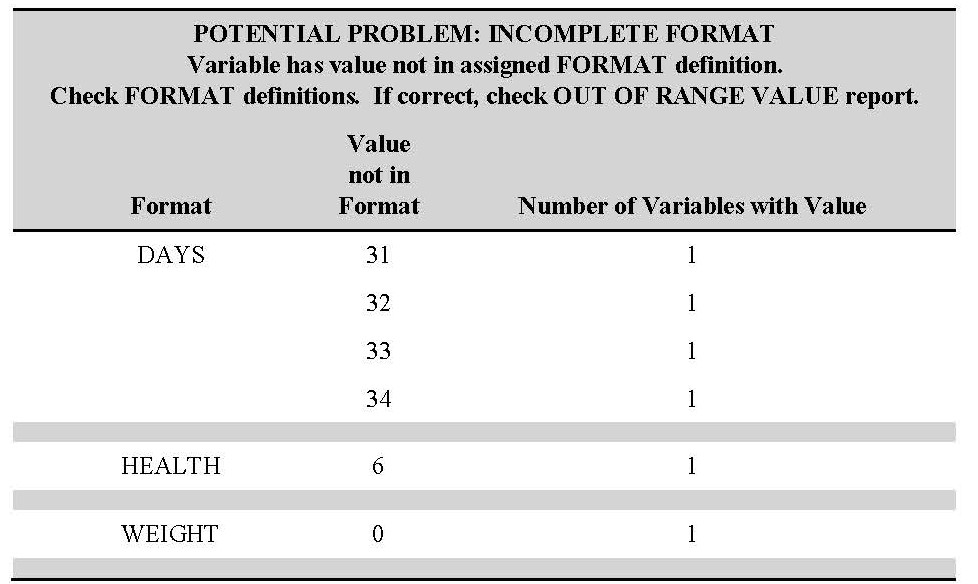

Using assigned formats, %TK_codebook identifies unexpected values occurring in each variable and provides a summary in the first two reports.

Values occurring outside those defined by the assigned format indicate two possible problems:

- A value was omitted from the format definition (Report 1 – Incomplete formats)

- The variable has unexpected values needing mitigation before the data is analyzed (Report 2 – Out of Range Value)

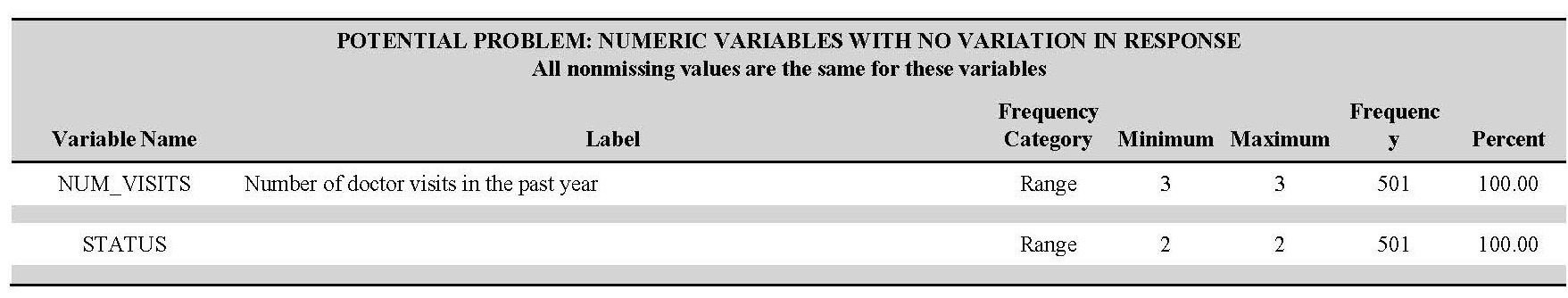

The next report lists numeric variables that have no variation in their values.

These variables need examining to uncover problems with preparing the data set.

The next two reports warn you about variables missing an assigned user-defined format. These variables will be excluded from screening for out-of-range values and incomplete format definitions.

The last report informs you about variables that are missing a label or have a label that matches the variable name.

It is easy to use %TK_codebook to resolve problems in your data and create an awesome codebook. Instead of spending your time preparing your data, you will be using your data to change the world!

Create your codebook

Download %TK_codebook from my author page, then learn to use it from my new book, The Data Detective’s Toolkit: Cutting-Edge Techniques and SAS Macros to Clean, Prepare, and Manage Data.

THE DATA DETECTIVE'S TOOLKIT | BUY IT NOW

2 Comments

Page 38: "The %TK_codebook macro will look for this data set named "custom_order" if you specify VAR_ORDER=CUSTOM.""

Line 695 of TK_cookbook.sas %if %upcase(&var_order)=CUSTOM_ORDER %then

Hi! I bought the book and found it very interesting. I was wondering if the dataset used for the examples is available somewhere. I think it will be useful to understand the macro better. Many thanks.

Daniele from Italy