Hybrid computers that marry CPUs and devices like GPUs and FPGAs are the fastest computers, but they are hard to program. This post explains how deep learning (DL) greatly simplifies programming hybrid computers.

The artificial intelligence and machine learning revolution is fueled by large amounts of data and extremely fast machines. Those machines achieve their record speeds thanks to innovative architectures in which devices like Graphics Processing Units (GPUs) and Field Programmable Gate Arrays (FPGAs) work in concert with traditional Central Processing Units (CPUs). This combination of different device types forms a hybrid architecture. It adds so much complexity to the code that only scarce, expensive specialists can develop for it, and that difficulty and cost put the best performance out of reach for most analytics. That is no longer true with Deep Learning for Numerical Applications (DL4NA), which allows data scientists with no knowledge of hybrid architectures to implement their models with deep neural networks and run them on the fastest hardware.

Data-Driven Programming

Every year, scientists and researchers gather at a conference called Supercomputing, or SC, to exchange their views, solutions, and problems in computational science. At SC17, no fewer than 22 presentations and keynotes had something to do with machine learning (ML) and deep learning (DL). Many more presentations actually touched on DL, because it is often the motivation for hybrid architectures (more on this later). This is quite remarkable if you consider that the year before, there was a grand total of 2 DL presentations. In other words, we appear to be moving decisively into the era of data-driven programming.

The same trend is visible beyond the world of science, in the economy at large. The internet of things (IoT), for example, is an emerging industry that exists only through the data it can collect: without sensors and data, there would be no IoT. Many more data-intensive industries regularly appear in the news, from self-driving cars to automatic translation to predictions of shoppers’ behavior.

From Tasks to GPUs

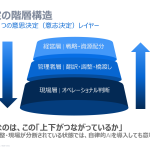

Over the last 40 years, we at SAS have explored several paradigms for our analytics.

In the 1980s, we ran single-threaded analytics. That gave us performance numbers that we didn’t quite like, so 20 years later we graduated to parallelism with multi-threaded and multi-process execution. Going parallel improved performance by orders of magnitude, but that speed increase came at a cost: complexity of development. By following the principles of task-based development and by using a many-task computing (MTC) framework such as SAS Infrastructure for Risk Management, we could tame that complexity and stay productive, focusing our attention on our problems rather than on the mechanics of multi-threading and multi-processing.
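To make the task-based idea concrete, here is a minimal sketch in Python using the standard library's `concurrent.futures`. It is not the API of SAS Infrastructure for Risk Management; the function names `portfolio_value` and `run_tasks` and the sample data are hypothetical, chosen only for illustration. The point is that the developer writes small independent tasks and lets an executor handle the threads.

```python
from concurrent.futures import ThreadPoolExecutor


def portfolio_value(positions):
    """One independent task: value a single (hypothetical) portfolio."""
    return sum(quantity * price for quantity, price in positions)


def run_tasks(task, inputs, max_workers=4):
    """Run one task per input; the executor owns thread creation and scheduling."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order, whatever order tasks finish in.
        return list(pool.map(task, inputs))


# Hypothetical data: each portfolio is a list of (quantity, price) pairs.
portfolios = [
    [(10, 2.5), (4, 10.0)],
    [(1, 100.0)],
    [(3, 1.0), (2, 2.0), (1, 3.0)],
]

values = run_tasks(portfolio_value, portfolios)
print(values)
```

The task itself contains no locks, thread handles, or queues, which is what keeps the development effort focused on the problem. For CPU-bound pure-Python work, `ProcessPoolExecutor` offers the same interface with processes instead of threads.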

Organizing our code into tasks has its limits in terms of parallelization. One major limitation is the number of CPU cores, which as of this writing is in the hundreds for a single machine, not in the thousands or in the millions. The general-purpose graphics processing unit (GPGPU or simply GPU), with its thousands of cores, addresses this limitation. Alas, synchronizing many threads of execution with Compute Unified Device Architecture (CUDA) is complex and consumes a great deal of development resources. Because of this programming complexity, CUDA is not the ideal tool for the data scientist or the statistician.
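The effect of the core count can be sketched with a deliberately idealized model. The assumptions are mine, not the original analysis: all tasks are independent and cost the same, every worker runs at the same speed, and data transfer and synchronization are free. Real GPU cores are individually slower than CPU cores, so the numbers below are an upper bound on the trend, not a prediction. The helper names `ideal_waves` and `ideal_speedup` are hypothetical.

```python
import math


def ideal_waves(num_tasks, num_workers):
    """Number of sequential rounds needed when each worker runs one task per round."""
    return math.ceil(num_tasks / num_workers)


def ideal_speedup(num_tasks, num_workers):
    """Speedup over a single worker under the idealized assumptions above."""
    return num_tasks / ideal_waves(num_tasks, num_workers)


# Hypothetical workload: one million equal-cost, independent tasks.
tasks = 1_000_000
for workers in (1, 100, 5_000):
    print(workers, ideal_waves(tasks, workers), ideal_speedup(tasks, workers))
```

Under these assumptions the speedup can never exceed the number of workers, which is why a machine with hundreds of cores caps out long before one with thousands of them.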

Speeding Up Your Analytics with Machine Learning (Part 2) will cover two further topics: using DL to train a deep neural network (DNN) to improve performance further, and hybrid architectures.

The content of this post is an excerpt of Chapter 9 of my book, Deep Learning for Numerical Applications with SAS®, where you will find an introduction to deep learning concepts in SAS along with step-by-step techniques that allow you to easily reproduce the examples on your high-performance analytics systems.