I am writing this post with the satisfaction of having Implementing CDISC Using SAS: An End-to-End Guide, Second Edition completed and on the shelf. Once again, it was my pleasure to collaborate with Chris Holland, and I am glad that we had a chance to update the book with current software and standards. That leaves me thinking about where we are right now in terms of clinical trials standards and compliance, and I am a bit concerned.

Fifteen years ago, we had the birth of what we know as the CDISC SDTM, and ADaM was in its infancy. Now here we are, and the SDTM, ADaM, and Define-XML submission data standards have matured and grown. Oh, how they have grown. When I teach ADaM, I am often amazed that students haven’t read all of the model documentation, but should I be? If you take the current basic ADaM documentation, which includes the model, implementation guide, time to event, OCCDS, and examples document, you are looking at reading 305 pages. Define-XML is a sprightly read at 98 pages, and the current SDTM documentation clocks in at a meaty 469 pages. For those keeping track at home, that is 872 pages of FDA submission data standards, and that doesn’t even include the new therapeutic area user guides (TAUGs). Include those and you’re likely at a nice round 1,000 pages of submission standards to understand.

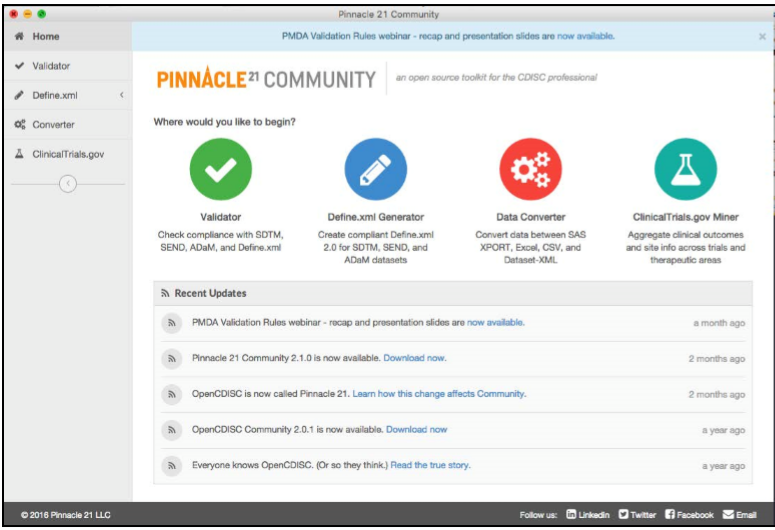

Those 1,000 pages of clinical data standards continue to grow, and so does their interpretation. Define-XML is fairly rigid, with the SDTM a bit less so, and ADaM even less rigid. This lack of rigidity leads to multiple interpretations of the standards across implementations in the industry. To add to that complication, we now have various regulatory requirements layered on top of the CDISC requirements. The FDA has a technical conformance guide that adds requirements to the CDISC standard requirements, and now they have a new technical rejection criteria document as well. There are also the PMDA CDISC submission requirements in Japan, as well as the additional checks found within the Pinnacle 21 validation tool. Add it all up and you have a lot more to understand beyond the 1,000 pages of base standards.

As the standards teams continue to evolve the CDISC standards, and as we get evolving interpretations and additional requirements layered over the base standards, things are getting a tad complex. At times, it has me wondering if this increasing standards complexity is the way to go. It is worth noting that CDISC isn’t even the only clinical data standards game in town. As clinical research evolves to be based more on hospital electronic health records (EHRs), we can expect models such as HL7 v3 or FHIR to play a greater role. How this all works out with CDISC is yet to be seen as the CDISC and HL7 worlds are eventually truly bridged, and not just BRIDG’d.

We need an easy button for clinical data submissions. Have we created one yet?

6 Comments

Jack, loved the book and learned a lot from you and Chris. Where can I download an Excel template for metadata such as SDTM variable metadata so I don't have to create a spreadsheet from scratch? Thank you.

Mike

Hello Sir,

Could you kindly tell me where to download trialdesign.xlsx? I checked the author blog and it is NOT there.

Thanks for your help.

Suthakar T Iyer

Thanks Jack for all your programming help. Highly appreciated!!!

Thanks Jack for your SAS programming techniques shared in this book!

Congratulations on your second edition, Jack!

Jack, congratulations on the second edition of your book! It's a great contribution to the fascinating world of Clinical data processing.

I recently wrote a blog post on an efficient technique for SDTM and ADaM data library management, and thought your readers might find it useful too: Modifying variable attributes in all datasets of a SAS library.