Virtual reality (VR), augmented reality (AR), eXtended reality (XR), mixed reality (MR), spatial augmented reality, hybrid reality. That's a lot of new technology and a lot of acronyms for a philosophical concept that has been pondered for millennia: what is reality?

In parallel, the Internet of Things (IoT) is digitizing the realities of workers everywhere. Combine it with AI and analytics and you can make intelligent realities, which promise a layer of real-time intelligence that helps workers master their realities.

While the XR family of AR, VR and MR head-mounted displays (HMDs) offers great options for intelligent reality, fancy new headgear isn't always required. My paper in the Industrial Internet Consortium's Journal of Innovation, "Intelligent Realities For Workers Using Augmented Reality, Virtual Reality and Beyond," explains how more established technology like smart phones and desktop monitors can improve realities as well. As I'll describe at the AI in Financial Services event in Zürich on April 11th, workers in abstract domains like finance can also benefit from intelligent realities.

Defining realities

An intelligent reality is a technologically enhanced reality that improves human cognitive performance and judgement. It can make a worker better by displaying information from the Internet of Things in the physical reality. Since reality is real-time, streaming analytics from SAS Event Stream Processing is a crucial component of intelligent realities.

Consider a technician looking at a machine while wearing an AR HMD. He can see both the machine's service history and a prediction of future failures. This gives the worker a view into the fourth dimension of time, both backwards and forwards. Instead of having to take the machine apart, the worker can see an IoT-driven MR rendering projected onto the outside casing. He could also see a virtual rendering of the operations of the same type of machine at a distant location. Then he can confer with both artificial and human remote experts about next steps, which could include the expert driving virtual overlays into the technician's view. As a wearable computer, the HMD brings distant resources into the worker's operational reality. He could interact with management systems without reaching for a smart phone or laptop.

The architecture of reality

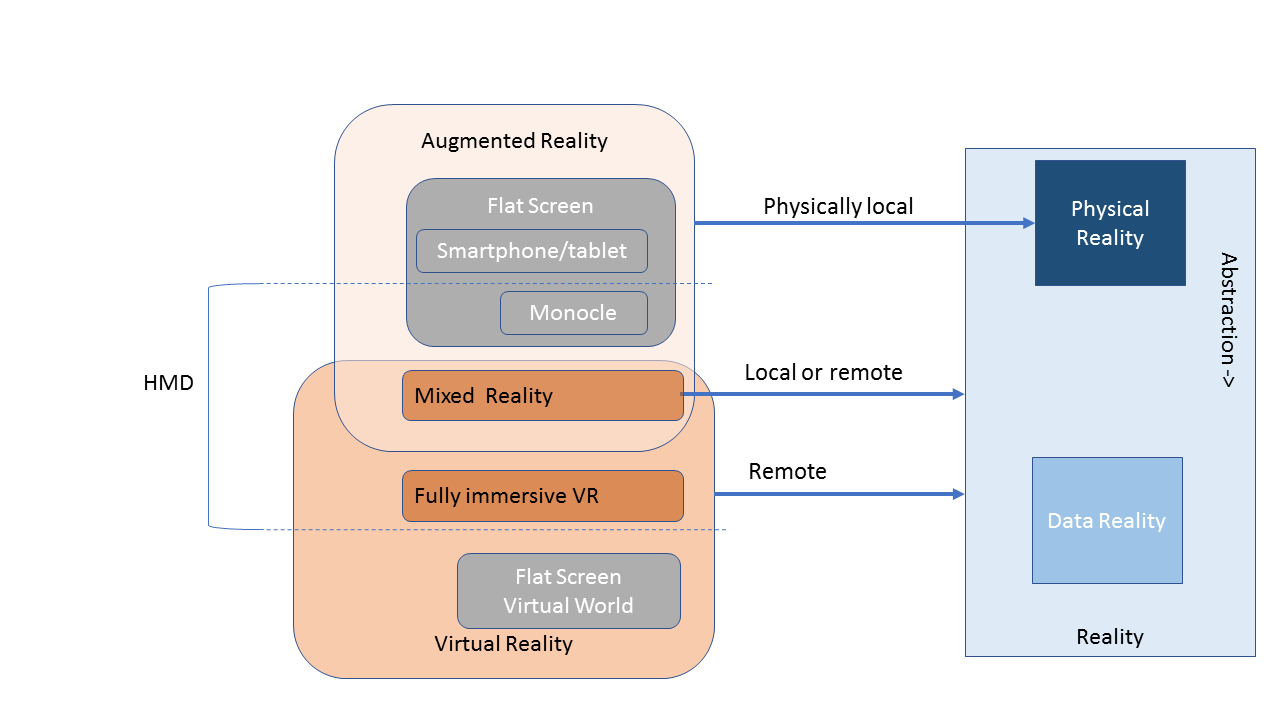

With so many new overlapping terms, it's easy to get lost in reality. The diagram from my intelligent realities paper attempts to simplify the discussion. It also tries to de-emphasize the role of the new headgear when it comes to operationalizing analytics and AI.

Beginning on the right of the diagram: reality can be the proximate physical reality, or it could be an abstract reality. For instance, a machine in front of a technician is a physical reality, while the supply chain for that machine is abstract -- a data reality. The technician could see an IoT-driven overlay of the machine operations with a mixed reality device. He could also see the supply chain hovering above the machine.

Data realities can exist that are entirely abstract, without any physical analogue. Consider a commodities market. Why visualize market activity with just a single stock ticker when our high-definition screens are capable of so much more?

The left side of the diagram illustrates the three key concepts of augmented reality, mixed reality and virtual reality. Augmented reality devices do not occupy the user's entire field of view. You are always able to see the proximate reality, so AR is always local to the proximate physical reality. But just because you can see the surrounding physical reality doesn't mean that it is important for the task at hand.

A fully immersive VR headset completely blocks out the physical reality. You are always remote and detached from physical reality. If you grab a sandwich, put it on your desk, and then put on a headset to do some VR, some joker may come by and steal your sandwich. This is one of the many reasons that VR is a bit weird in professional settings. Though very powerful, VR can be uncomfortable. Not to mention that poorly designed VR apps can make users nauseous. And some users just don't want to wear a headset for very long.

Mixed reality sits between AR and VR. It requires a stereoscopic AR HMD -- one that can give the user stereoscopic vision and the illusion of depth. Mixed reality can address some VR use cases. For instance, a 3D model of a building examined at arm's length could be rendered in VR or MR. In that case, the reality of the building is remote. Alternatively, a digital overlay on a proximate machine is a local reality. Mixed reality can be either local or remote.
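The local/remote distinction in the last three paragraphs works like a small lookup table: AR never blocks the proximate reality, so it is always local; fully immersive VR blocks it, so it is always remote; MR can be either. The sketch below encodes that reading in Python. It is an illustration only, not code from the intelligent realities paper, and the names `Locality`, `Mode`, `ALLOWED` and `modes_for` are hypothetical.

```python
from enum import Enum


class Locality(Enum):
    LOCAL = "local"    # the proximate physical reality in front of the worker
    REMOTE = "remote"  # a distant physical reality or an abstract data reality


class Mode(Enum):
    AR = "augmented reality"
    MR = "mixed reality"
    VR = "virtual reality"


# Hypothetical encoding of the taxonomy as described in the text:
# AR leaves the surroundings visible (always local), fully immersive VR
# blocks them out (always remote), and MR can serve either case.
ALLOWED = {
    Mode.AR: frozenset({Locality.LOCAL}),
    Mode.MR: frozenset({Locality.LOCAL, Locality.REMOTE}),
    Mode.VR: frozenset({Locality.REMOTE}),
}


def modes_for(locality: Locality) -> list[Mode]:
    """Return the display modes that can present a reality with this locality."""
    # Iterating over the Enum yields members in definition order: AR, MR, VR.
    return [mode for mode in Mode if locality in ALLOWED[mode]]


if __name__ == "__main__":
    # An overlay on a proximate machine is a local reality.
    print([m.name for m in modes_for(Locality.LOCAL)])   # ['AR', 'MR']
    # A building model examined at arm's length is a remote reality.
    print([m.name for m in modes_for(Locality.REMOTE)])  # ['MR', 'VR']
```

Only MR appears in both results, which matches the text's point that mixed reality can cover some VR use cases as well as local AR-style overlays.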

Head-mounted displays are part of the picture, but not the entire picture. When someone says AR, they may mean something on a stereoscopic AR HMD, a monocle HMD like Google Glass, or a smartphone app like Pokémon Go. VR, on the other hand, tends to mean a fully immersive experience with an HMD. But a VR asset could be rendered in MR or on a flat screen.

Fully immersive VR has unique capabilities. For example, turning your head is a powerful user input in VR. Not so on a flat screen. But at a high level, a virtual rendering of a distant factory can be valuable regardless of how it is rendered. Some remote experts might like VR. Others may prefer MR or a flat screen. A remote expert should be able to pick the form with which they are most comfortable.

Artificially intelligent realities

Artificial intelligence is also crucial for intelligent reality. Many XR devices ship with computer vision and speech recognition (both AI technologies). In addition, AI can help workers by making smart, contextual selections of which content to present based on the circumstances. Take the popular remote expert use case for AR, where a remote expert guides a field technician based on a video feed from the technician's AR headset: the expert doesn't have to be entirely human.

Looking at data flowing in the opposite direction, XR devices are themselves "things" on the internet. HMDs are connected and loaded with sensors and cameras. Not only can they display information about the physical world, they can capture information about it as well. Each time a worker dons an XR headset, the device can digitize even more reality for analytics and AI applications, just like any other IoT thing.

Realizing intelligent realities

You can make intelligent realities with a variety of off-the-shelf technologies available today. And not just headsets. Smart phones can do AR. A desktop flat screen can render an IoT-driven view of a remote factory, and that may be more comfortable than VR. And using spatial AR, reality can become more intelligent with simple projected light or holograms -- no wearable or tote-able tech needed. Regardless of what reality tech you use, you can make a worker's reality smarter and better. When you improve workers' realities, you improve work. Take a look at my intelligent realities paper to learn more.