There’s more than one way to make a poor decision. Bad data, inappropriate assumptions and flawed logic are just three of the missteps you can take on your climb up the Ladder of Inference, a concept first developed by Chris Argyris, professor of business at Harvard, in 1974, and later popularized by Peter Senge in his 1990 book, “The Fifth Discipline”. If we’re not mindful of these mental pitfalls, we’re likely to use our automated business processes to simply make bad decisions faster.

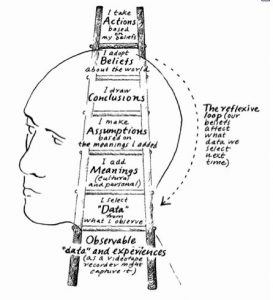

The Ladder of Inference, an oldie-but-goodie, is likely familiar to you, although you may not have run across it in some time. A quick summary of the ladder’s seven rungs would be (starting at the bottom):

- Observation: The world of observable data and experience

- Filtering: The selection of a subset of this data for further processing

- Meaning: Assigning meaning / interpretation to the data, through semantics or culture

- Assumptions: Associated context, often from your Framework (below), of what you already know and the new meanings you’ve assigned

- Conclusions: Drawn based on the assumptions and meaning applied to the filtered data

- Framework: You alter, adjust or adapt your belief system / knowledge framework based on your conclusions

- Action: You take action based on the meaning of the data and your updated belief system

A simple example of the ladder in action might be: While you are walking the streets of a large city, you observe amongst the crowd a familiar face, which catches your attention (filter). Once identified (meaning) as a friend you haven’t seen in years, and based on your memory, you assume a mutual, lasting friendship and conclude that they would be pleased to see you again. Your now updated belief system moves this old friendship into the present and you walk across the street to introduce yourself (action).

That was a rather benign example. I hardly need to elaborate that there is much that can go wrong with this process, which was precisely the reason it was espoused by Argyris and Senge in the first place. One only needs to consider the present-day nastiness we are seeing with respect to people observed, filtered and labeled based on their race, religion, nationality, clothing or language, the assumptions made and conclusions drawn, all in a rapid ascent up the Ladder to an often regrettable action.

One antidote to racing up the Ladder is to climb back down and take the process more deliberately, one rung at a time:

- What data was chosen and why? Was it rigorously selected?

- What are you assuming, and why? Are those assumptions valid?

- When you said "[your inference]," did you mean "[my interpretation of it]"?

- Run through your reasoning process again.

- What belief led to that action? Was it well-founded?

- Why have you chosen this course of action? Are there other actions that should be considered?

One of the better real-world examples I can think of to illustrate this point is the story of how the flawed Hubble Telescope primary mirror was allowed to be installed and launched even though tests showed that the curvature was incorrect. Several rungs of the Ladder were hastily jumped over as technicians assumed that, having gotten to the final stage, the mirror couldn’t possibly be incorrect, so the fault had to lie in their own measurements. Nothing was said, and in the end it required a separate, expensive repair mission to install corrective optics to mitigate what otherwise would have been a billion-dollar disaster and embarrassment.

Analytics interacts with the Ladder in two ways. The first is in a generally positive way with analytics as a tool to augment our decision process. Analytics can support better decisions by:

- Providing better filters, overcoming many of our flawed selection biases (e.g. confirmation, hindsight)

- Assigning better meanings, by distinguishing the significant from the noise (e.g. text analytics, data mining)

- Making your assumptions explicit, by coding them into the analytic model

- Drawing only the conclusions that are warranted by the data
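To make the third point concrete, here is a minimal sketch of what "coding your assumptions into the model" might look like. Everything in it is hypothetical and chosen only for illustration: the `Assumption` class, the field names `months_active` and `monthly_spend`, the thresholds, and the placeholder scoring rule. The idea is simply that each assumption becomes a named condition that can be checked and reported, rather than something left in the analyst's head.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Assumption:
    """A named, testable assumption about an input record (hypothetical)."""
    name: str
    holds: Callable[[Dict], bool]


# Illustrative assumptions for an imaginary customer-scoring model.
ASSUMPTIONS = [
    Assumption("customer has at least 6 months of history",
               lambda row: row["months_active"] >= 6),
    Assumption("monthly spend is non-negative",
               lambda row: row["monthly_spend"] >= 0),
]


def violated_assumptions(row: Dict) -> List[str]:
    """Return the name of every assumption this record fails."""
    return [a.name for a in ASSUMPTIONS if not a.holds(row)]


def score(row: Dict) -> Dict:
    """Score only when every stated assumption holds; otherwise say why not."""
    violations = violated_assumptions(row)
    if violations:
        return {"score": None, "violations": violations}
    # Placeholder rule standing in for a real model.
    return {"score": min(1.0, row["monthly_spend"] / 100.0), "violations": []}
```

A record that breaks an assumption comes back with no score and a list of the assumptions it violated, so the gap between the data and what the model takes for granted is visible instead of silently scored.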

However, the IoT, event stream processing, AI, and Analytics for Agency all create an entirely new path for making bad decisions, quickly, through our fully automated processes. The Ladder of Inference no longer pertains to just what goes on in our heads, but also to what goes on in our automated, rules-based, business models. When it comes to deploying automated rules-based models and systems at scale, there are two primary concerns:

- First, what biases, assumptions and belief system have you baked into your model, either deliberately or inadvertently? If your automated business process is taking actions based on data inputs, it is racing through its very own Ladder of Inference, and therefore prone to the same decision-making mistakes as humans.

- Speaking of humans, they likely still have an important role to play in your otherwise automated process. As I described in this post, “The Man who saved the World”, we tend to get into trouble, BIG trouble, when our processes are too tightly coupled. A more loosely coupled process, perhaps with human monitoring or intervention at key inference rungs, can prevent a runaway calamity.
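The two concerns above can be sketched together in a few lines. This is only an illustration under assumed conditions: an imaginary temperature alarm, a made-up limit and confidence threshold, and a rule that the automated action is trusted only when an independent backup reading corroborates the primary one. The function records each rung it climbs, and hands the decision to a person when confidence is low.

```python
from dataclasses import dataclass, field
from typing import List, Optional

REVIEW_THRESHOLD = 0.8   # hypothetical: below this, a person must sign off
TEMP_LIMIT_C = 90.0      # hypothetical alarm limit


@dataclass
class Decision:
    action: str
    confidence: float
    trail: List[str] = field(default_factory=list)  # one entry per rung


def decide(primary_c: float, backup_c: Optional[float]) -> Decision:
    trail = [f"observed: primary={primary_c}, backup={backup_c}"]

    # Meaning: interpret the raw reading against the limit.
    overheating = primary_c > TEMP_LIMIT_C
    trail.append(f"meaning: overheating={overheating}")

    # Assumption made explicit: the primary sensor is trusted only when
    # an independent backup reading tells the same story.
    if backup_c is None:
        confidence = 0.5
    elif (backup_c > TEMP_LIMIT_C) == overheating:
        confidence = 0.95
    else:
        confidence = 0.3
    trail.append(f"assumption check: confidence={confidence}")

    # Conclusion and action, with a human gate on low confidence.
    proposed = "shut_down" if overheating else "continue"
    if confidence < REVIEW_THRESHOLD:
        trail.append(f"escalated: proposed action was {proposed}")
        return Decision("escalate_to_human", confidence, trail)
    trail.append(f"automated action: {proposed}")
    return Decision(proposed, confidence, trail)
```

The `trail` is what lets someone climb back down the ladder afterwards: it shows what was observed, how it was interpreted, and which assumption check decided whether the system acted on its own.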

Kevin Slavin’s TED Talk on Algorithms provides several other great examples of automated processes run amok, including the one where two pricing algorithms bid each other up into the millions of dollars on Amazon for a $12.95 paperback book. You don’t want to be the one responsible for the next version of the Wall St. “flash crash” at your company. There is a continued role for the proper use of human intervention and exception handling to prevent situations like those.
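A runaway loop of this kind is easy to reproduce in miniature. The sketch below assumes two hypothetical automated sellers, each with a perfectly reasonable rule on its own: one prices slightly under its rival, the other prices well above it. The multipliers, the starting price and the `ceiling` parameter are all illustrative. Without a ceiling the prices compound every round; with one, the loop stops and flags the situation for exception handling.

```python
from typing import Dict, Optional

UNDERCUT = 0.998   # seller A prices just under seller B (illustrative)
MARKUP = 1.27      # seller B prices well above seller A (illustrative)


def reprice(start_price: float, rounds: int,
            ceiling: Optional[float] = None) -> Dict:
    """Run two repricing rules against each other for a number of rounds.

    If `ceiling` is given, stop as soon as either price exceeds it and
    report the round at which the loop was halted.
    """
    price_a = start_price
    price_b = start_price
    for step in range(1, rounds + 1):
        price_a = price_b * UNDERCUT   # A reacts to B
        price_b = price_a * MARKUP     # B reacts to A
        if ceiling is not None and max(price_a, price_b) > ceiling:
            # Circuit breaker: stop and hand off to a person.
            return {"halted_at": step, "price_a": price_a, "price_b": price_b}
    return {"halted_at": None, "price_a": price_a, "price_b": price_b}


unchecked = reprice(20.0, rounds=60)
guarded = reprice(20.0, rounds=60, ceiling=100.0)
```

Neither rule is wrong in isolation; the problem is the tight coupling between them. The ceiling is a crude guard, but it turns a silent escalation into an exception that someone gets to look at.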

The Ladder of Inference was conceived as a cautionary tale, a reminder of the many different paths we can take to poor decisions, actions and outcomes. As we continue to automate and scale our decision processes, as we inevitably will, let’s make certain that we are scaling GOOD decisions. Because when it comes to bad decisions, you can't make it up on volume.