The term “big data” is all the rage right now, however the term “big” is relative. At SAS we have been called on to do “big data” projects and more importantly “big analytics” projects for many years now. In fact, we are the pioneers of analytics on “big data.”

There is nothing special about the volume of data, variety, or velocity of big data. To quote one of my colleagues, “It is the value.” We are finding that the tough part of big data is the same problem that faces any analytic project: First you have to formulate a problem that you expect to be solved with analytics, next the data needs to be filtered, aggregated and structured in a way to yield some value from analysis, and finally the effort required to harvest value from big data is worth the investment.

What is special about “big data” today is that storage has gotten cheap enough, and the parallel processing techniques have matured to a point where now we have options to extract value form data that previously were not manageable. Take various forms of machine generated data, like GPS system output, RFID tags or web logs. By itself, this data isn’t very valuable or even interesting. It isn’t until you put structure to the data through parsing, filtering, sorting, joining and aggregation that the data begins to provide some insights into how it might be leveraged.

As Rick Wicklin points out “Obtaining the means and standard deviations of 100,000 variables is simple. Computing a complex regression model with 100,000 variables is much more challenging! … To truly appreciate the problem of big data one must consider not only the volume of data but also the computational complexity of the analysis.”

The many uses of Hadoop

Technologies like Hadoop have become all the rage recently. Hadoop is an open source Apache project that “is a framework that allows for the distributed processing of large data sets across clusters of computers using a simple programming mode.l”

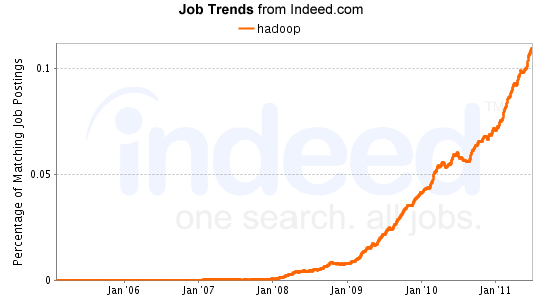

For many, Hadoop is used as a sandbox to dump data of questionable value that is too big and unwieldy or simply too costly to be stored in a traditional RDBMS. Instead, many just load it into Hadoop then aggregate, filter, and structure the data to see IF there is value that can be harvested from the data. For others, Hadoop is used as an alternative to relational databases for data warehousing. The momentum and interest in Hadoop can’t be overlooked, check out the job-trends for Hadoop.

What is SAS doing with Hadoop?

At SAS, we have a number of initiatives around Hadoop to enable SAS users to access, load, process, visualize and analyze data stored in Hadoop. In the coming months we’ll have more to share but here is a sneak peak of what is to come:

- SAS/Access interface to Hadoop – this will enable the SAS user to analyze data stored in Hadoop, it also opens up Hadoop data to processing from SAS client software like Data Integration Studio, Enterprise Guide,and Enterprise Miner. The access engine does more than just move data into and out of Hadoop; it also will enable processing to be “pushed-down” into Hadoop.

- SAS Data Integration Studio transformations – this is a new set of Hadoop transformations that will enable the DI Studio user to load and unload data to and from Hadoop, perform EL-T like processing with HiveQL and ET-L like processing with Pig Latin. Additionally, we are working on a Hadoop specific scoring transform that will enable models developed with Enterprise Miner to be deployed to Hadoop via DI Studio.

Check back here for more updates on these and other projects, and definitely leave a comment if you have questions or ideas about SAS and Hadoop.

21 Comments

How do we contact the author?

Kenny,

I think Mike is currently out of the office, but I'll ask him to contact you after the Labor Day Holiday.

-Alison

When will the SAS/Access interface to Hadoop be available?

I have the same question!

Pingback: Big Brother, Big Data and Big Analytics - SAS Voices

Will Hadoop be supported for comercial produicts like GreenPlum? Will SAS Access to GreenPlum be enhanced to support Hadoop? Are there any definite time lines yet?

Hello Syed, you can take advantage of Greenplum's external table interface to HDFS today with SAS/Access

I'm also interested in when this will be available. Is there any more info?

Hi Allison we expect to have more specific information to share with you in the coming months. Please watch this space and sas.com.

Pingback: Big data defined: It's more than Hadoop - Information Architect

Pingback: Big Hype requires solid Big Data tenets - Information Architect

Have we got an update on this topic? It's been months since the last post..

SAS strategists/product managers - would you please post an update here asap or confirm that there is no reportable progress at SAS on the topic of accessing Hadoop from SAS.

Hello David - thanks for your interest, please see my comment. Thanks, Mark.

I am also eagerly waiting to hear an update on the status of using Hadoop with SAS/Access.

Hello James - thanks for your interest, please see my comment. Thanks, Mark.

Sorry for the delay. We are heads down working on the Hadoop capabilities. These plans include the SAS/ACCESS Interface to Hadoop and the DI Studio transforms that Mike mentioned in his initial post. The overall roadmap goes well beyond this capability and will include elements of what we provide for other data sources – think in-database capability, pushing process into Hadoop, running SAS alongside or embedded with Hadoop, etc. These capabilities will be delivered in a phased approach with the SAS/ACCESS module leading the way. We will provide a more official announcement by the end of March.

Thanks,

Mark Troester

CIO/IT Thought Leader & Strategist

SAS

Pingback: Hurwitz on SAS & Big Data: Experience Matters - Information Architect

Interesting and very exciting developments to include hadoop in the mix for data analytics. I think this will definitely reduce (if not eliminate) any limitations of SAS when it comes to handling very big data (peta bytes) and come up with useful information. I am sure all the marketing companies out there are thrilled.

Can SAS Enterprise Miner work with Hadoop?

Great question, yes in fact it can work with and IN Hadoop. Using the High Performance Data Mining Procedures you can actually do in-Hadoop data transformations, model training and scoring. So for example you could train a neural network and compare it to a Random Forest and have the processes run in Hadoop.

http://www.sas.com/en_us/software/high-performance-analytics/data-mining.html