In my 25 years at SAS, I‘ve noticed the continued use of important algorithms, such as logistic regression and decision trees, which I’m sure will continue to be steady staples for data scientists. After all, they’re easy-to-use, interpretable algorithms. However, they’re not always the most accurate and stable classifiers. To overcome that challenge, the data science community continues to look for the next, best multi-use algorithm.

How hard could it be to create an algorithm that automatically understands key patterns and signals in your data? Deep learning is the current rage in the machine learning community, but is it just a fad? Or will major breakthroughs in machine learning be attributed to deep learning for many years to come?

Before we answer that question, let’s look back at some of the major machine learning developments, to see which generated fads and which stood the test of time. It seems appropriate to start with artificial neural networks from which deep learning evolved.

A brief history of machine learning algorithms and fads

In 1943, Warren McCulloch and Walter Pitts produced a model of the neuron that is still used today in artificial neural networking. This model essentially contains a summation over weighted inputs and an output function of the sum. Perceptron’s were later proposed by Frank Rosenblatt in 1958.

Neural networks took a big hit in 1969 when two scientists, Marvin Minsky and Seymour Papert showed that the “exclusive or” sometimes known as the “XOR” problem could not be solved with a neural network. The backlash almost killed neural networks, since neural networks could only classify patterns that are linearly separable in the input space.

In the mid 1980’s backpropagation was rediscovered which reinvigorated interest in artificial neural networks. Through backpropagation the neural network can adjust the model weights based on comparing the computed output to the actual desired output. They were good non-linear classifiers when trained on suitable data. Neural networks continued to hum along but had lost much of their excitement. At the time, analysts often didn’t have enough good data to train the network, and if the data was available, they didn’t have the computing power required to train sophisticated networks.

Support vector machines (SVMs) introduced by Bernhard Boser, Corinna Cortest and Vladimir Vapnik became widely popular in the 90’s and early 2000s. SVMs use a technique called the kernel trick to transform your data, and then based on these transformations the algorithm finds an optimal hyper plane boundary in new feature space between the possible outputs. Some folks claimed these “kernel machines” could address virtually any classification or regression problem. Most analysts at that time understood how SVMs worked but struggled with the right choice of kernel to use. Without that understanding, you have to know the true structure of the data as an input. SVMs can also be very slow to train on large data especially multi-level labels. SVMs are a very good algorithm that can generalize well but they’re definitely not a silver bullet.

As I mentioned in the intro, data miners (what we used to call data scientists) love decision trees. But they also understand that decision trees generally are not to be as accurate as other approaches, and small changes in the data can cause big changes in the tree (they are not robust). So what was the solution to these problems? Well if one tree is not good enough, why not grow hundreds or even 1000s of decision trees using bootstrap sampling and also perturb the input space for each tree and then combine the decision trees. Data miners became arborists relying on growing a whole forest of trees and then combining them into a single, more accurate model.

Random Forests (which grow a lot of deep trees at random) are very common initial models for Kaggle competitions and other machine learning applications. Another tree-based model named, gradient boosting is probably the best algorithm out of the box for accuracy. Many of my customers continue to rely on boosted trees for difficult problems.

Moving beyond the hype

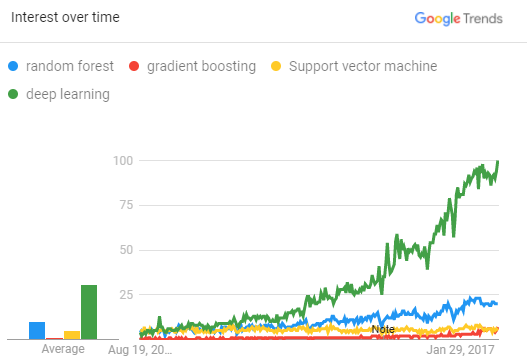

Why do we care about all this history? Is deep learning here to stay or it just another fad algorithm? The figure below from Google Trends shows the exponential growth of search traffic for deep learning in the past 5 years. There’s stable interest in the other algorithms, but deep learning is really hot. Take that, all of you neural networking doubters. The revenge of the connectionist is here!

Deep learning is essentially a neural network with many layers. Deep learning has taken off because organizations of all sizes have the opportunity to capture and mine more and bigger data, including unstructured data. It’s not just Amazon, SAS, Google and other large companies that have access to big data. It’s everywhere. Deep learning needs big data, and we finally have it. These deep networks also need improved computing power to train models on big data, and you now have the ability to train deep learning models on GPUs in reasonable timeframes at a fairly affordable cost.

Is there a down side to deep learning? Deep networks with five or more hidden layers commonly have millions if not billions of parameters to estimate. One of the risks when fitting big models is over fitting the training data. Although there are many techniques designed in machine learning to help prevent overfitting, such as pruning in decision trees, early stopping in neural networks and regularization in SVMs, the most simple and efficient method is to use more data.

On the other hand, even if you have lots of data, you still need really powerful computing power. Deep learning thrives on this combination; data is exactly what it needs to produce accurate models. More data and deep networks for learning often translate into more accurate models.

Plus, deep learning is very accurate when compared to other methods. Deep learning requires less feature engineering (you may have to, say, standardize a set of images) but they can then understand automatically how all of the features (explanatory inputs) interact to derive better model.

As one of my coworkers, Xiangqian Hu, says, there are three key reasons why deep learning is popular - and they all start with the letter B: big data, big models, and big computations. Big data requires big modelling and big modelling requires big computations (such as GPUs). Of course, the three Bs also create three challenges for deep learning. Big data is expensive to collect, label and be store. Big models are hard to optimize. Big computations are often expensive as well. At SAS, we're focusing on new ideas to overcome these challenges and make deep learning more accessible to everyone.

Deep learning applications

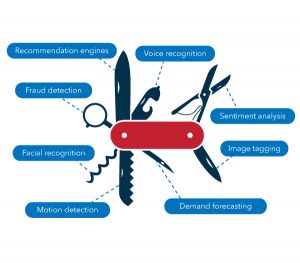

Ultimately, deep learning is popular because, much like a Swiss Army knife, it has many uses and can be applied to many applications, including:

- Speech to text – Deep recurrent networks, a type of deep learning, are really good at interpreting speech and responding accordingly. You can understand deep learning easily by looking at personal assistants. Just say, OK Google or Hey, Siri, before any question, and more often than not, your device will respond with the correct answer.

- Bots in the workplace can talk to you via text or speech, understand context using natural language interaction and then run deep learning to answer analytical questions, like who is likely to churn or what are expected sales for next year.

- Image classification - Deep learning is very accurate for computer vision. SAS is working with a customer to detect manufacturing defects in computer chips.

- Object detection from images and videos has improved tremendously due to deep convolutional networks, another type of deep learning. SAS is using convolutional networks to evaluate athlete performance on a field in real time and to track migration habits of endangered species through footprint analyses.

- Healthcare – Deep convolutional networks are being used to identify diseases from symptoms or X-rays, and to determine whether or not a tumor is cancerous.

- Robotics – As Bill Gates put it: "The AI dream is finally arriving." The predictions in this article, and most robot capabilities, owe a fair amount to deep learning.

- Banking – Deep forward networks are being used to detect fraud among the transaction data.

Could deep learning be just another fad? Perhaps, but right now deep learning is the best universal algorithm out there – period! So, sharpen your deep learning skills and keep deep learning in your pocket until something bigger and better comes along.