Editor's note: This is the first in a series of articles.

According to the McKinsey Global Survey on the state of AI in 2021, AI adoption continues to grow. Fifty-six percent of all respondents reported AI adoption – including machine learning (ML) – in at least one function, up from 50% in 2020.

Businesses are deploying ML models for everything from data exploration, prediction and learning business logic to more accurate decision making and policymaking. ML is also solving problems that have stumped traditional analytical approaches, such as those involving unstructured data from graphics, sound, video, computer vision and other high-dimensional, machine-generated sources.

But as organizations build and scale their use of models, governance challenges increase as well. Most notably, they struggle with:

• Managing data quality and exploding data volumes. Most large enterprises host their data in a mix of modern and legacy databases, data warehouses, and ERP and CRM services – both on-premises and in the cloud. Unless organizations have ongoing data management and quality systems supporting them, data scientists may inadvertently use inaccurate data to build models.

• Collaborating across business and IT departments. Model development requires multidisciplinary teams of data scientists, IT infrastructure and line-of-business experts across the organization working together. This can be a difficult task for many enterprises due to poor workflow management, skill gaps between roles, and unclear divisions of roles and responsibilities among stakeholders.

• Building models with existing programming skills. Mastering a programming language can take years, so if developers can build models using the skills they already have, they can deploy new models faster. Modern machine learning services must empower developers to build using their language of choice and provide a low-code/no-code user interface that nontechnical employees can use to build models.

• Scaling models. Enterprises must have the ability to deploy models anywhere – in applications, in the cloud or on the edge. To ensure the best performance, deployed models need to be as lightweight as possible and have access to a scalable compute engine.

• Efficiently monitoring models. Once deployed, ML models will begin to drift and degrade over time due to external real-world factors. Data scientists must be able to monitor models for degradation, and quickly retrain and redeploy them into production so the business keeps getting full value from them.

• Using repeatable, traceable components. To minimize the time a given model is out of production during rescoring and training, models must be built using repeatable and traceable components. Without a component library and documented version history, there is no way to understand which components were used to build a model, which means it must be rebuilt from scratch.
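As a rough illustration of what a "documented version history" could look like in practice, the sketch below fingerprints the training data and training code for each model version and reports which tracked component changed between two versions. It is a minimal example under stated assumptions, not a description of any SAS or Microsoft product: `ModelRecord`, `build_record` and `changed_components` are hypothetical names invented here.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def fingerprint(payload: bytes) -> str:
    """Return a SHA-256 hex digest identifying the exact bytes given."""
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class ModelRecord:
    """Hypothetical registry entry tying a model version to its inputs."""
    model_name: str
    version: int
    training_data_sha256: str
    training_code_sha256: str
    hyperparameters: dict

    def to_json(self) -> str:
        # sort_keys keeps the serialized record stable across runs
        return json.dumps(asdict(self), sort_keys=True)


def build_record(name, version, data_bytes, code_bytes, hyperparameters):
    """Create a record from the raw bytes of the data and code used."""
    return ModelRecord(
        model_name=name,
        version=version,
        training_data_sha256=fingerprint(data_bytes),
        training_code_sha256=fingerprint(code_bytes),
        hyperparameters=dict(hyperparameters),
    )


def changed_components(old, new):
    """List which tracked components differ between two records."""
    tracked = ("training_data_sha256", "training_code_sha256", "hyperparameters")
    return [name for name in tracked if getattr(old, name) != getattr(new, name)]


if __name__ == "__main__":
    v1 = build_record("churn", 1, b"a,b\n1,2\n", b"fit(x)", {"depth": 3})
    v2 = build_record("churn", 2, b"a,b\n1,2\n3,4\n", b"fit(x)", {"depth": 3})
    print(v2.to_json())
    print(changed_components(v1, v2))
```

With records like these stored alongside each deployed model, a team can see which component changed between versions instead of rebuilding the model from scratch to find out.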

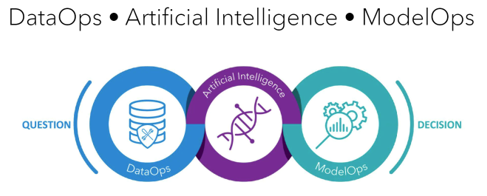

To help you address these challenges, SAS develops its services – and integrations in the Microsoft Cloud – with the analytics life cycle in mind. The analytics life cycle enables businesses to move seamlessly from questions to decisions by connecting DataOps, artificial intelligence and ModelOps in a continuous and deeply interrelated process (see Figure 1). Let us take a closer look at each of these elements:

• DataOps. Borrowing from agile software development practices, DataOps provides an agile approach to data access, quality, preparation and governance. It enables greater reliability, adaptability, speed and collaboration in your efforts to operationalize data and analytics workflows.

• Artificial intelligence. Data scientists use a combination of techniques to understand the data and build predictive models. They use statistics, machine learning, deep learning, natural language processing, computer vision, forecasting, optimization, and other techniques to answer real-world questions.

• ModelOps. ModelOps focuses on getting AI models through validation, testing and deployment phases as quickly as possible while ensuring quality results. It also focuses on ongoing monitoring, retraining and governance to ensure peak performance and transparent decisions.

So how can we apply the analytics life cycle to help us solve the challenges we listed above? To answer that, we will have to take a closer look at ModelOps.

Based on longstanding DevOps principles, the SAS ModelOps process allows you to move to validation, testing and deployment as quickly as possible while ensuring quality results. It enables you to manage and scale models to meet demand, and continuously monitor them to spot and fix early signs of degradation.

ModelOps also increases confidence in ML models while reducing risk through an efficient and highly automated governance process. This ensures high-quality analytics results and the realization of expected business value. At every step, ModelOps ensures that deployment-ready models are regularly cycled from the data science team to the IT operations team. And, when needed, model retraining occurs promptly based on feedback received during model monitoring.

• Managing data quality and exploding data volumes. ModelOps ensures that the data used to train models aligns with the operational data that will be used in production. Managing data in a data warehouse, such as Azure Synapse Analytics, helps you ingest data from multiple sources and perform all ELT/ETL steps, so data is ready to explore and model.

• Collaborating across business and IT departments. ModelOps empowers data scientists, IT infrastructure and line-of-business experts to work in harmony thanks to a mutual understanding of their counterparts and ultimate end users.

• Building models with existing programming skills. Make it easier for everyone on your team to build models using their preferred programming language, including SAS, Python and R, or with visual drag-and-drop tools for a faster building experience.

• Scaling models. Deploy your models anywhere in the Microsoft Cloud, including applications, services, containers and edge devices.

• Efficiently monitoring models. Models are developed with a deployment mindset and deployed with a monitoring mindset so data scientists and analysts can monitor and quickly retrain models as they degrade.

• Using repeatable, traceable components. There are no black box models anymore because the business always knows the data it uses to train the model, monitors that model for efficacy, tracks the history of the code used in training the models, and uses automation for deployment and repeatability.
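To make the monitoring point concrete, here is one way a drift check might look. The sketch compares the distribution of a feature (or of model scores) at training time against recent production values using the population stability index (PSI), a widely used drift measure. It is a generic illustration, not the method any SAS or Microsoft service uses; the function names and the 0.2 alert threshold (a common rule of thumb) are assumptions.

```python
import math
from bisect import bisect_right


def psi(baseline, current, bins=10):
    """Population stability index between two numeric samples.

    Bin edges are taken from baseline quantiles, so each bin holds
    roughly the same share of the baseline sample.
    """
    ordered = sorted(baseline)
    n = len(ordered)
    # bins - 1 interior edges placed at evenly spaced baseline quantiles
    edges = [ordered[(i * n) // bins] for i in range(1, bins)]

    def shares(values):
        counts = [0] * bins
        for v in values:
            counts[bisect_right(edges, v)] += 1
        total = len(values)
        # Floor each share so empty bins do not produce log(0)
        return [max(c / total, 1e-6) for c in counts]

    expected = shares(baseline)
    actual = shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def needs_retraining(psi_value, threshold=0.2):
    """Flag drift using an assumed rule-of-thumb threshold."""
    return psi_value > threshold


if __name__ == "__main__":
    training_scores = [i / 100 for i in range(1000)]
    production_scores = [x + 5 for x in training_scores]  # shifted sample
    value = psi(training_scores, production_scores)
    print(f"PSI = {value:.3f}, retrain: {needs_retraining(value)}")
```

A check like this could run on a schedule against production data, with a flagged result triggering the retraining and redeployment loop described above. The right threshold and bin count depend on the model and the data, so treat the defaults here as placeholders.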

Next time, you will learn how SAS and Microsoft together support each step of your ModelOps process.

To learn more about ModelOps and our partnership with Microsoft, see our whitepaper: ModelOps with SAS Viya on Azure.