One of the first and most important steps in analyzing data, whether for descriptive or inferential statistical tasks, is to check for possible errors in your data. In my book, Cody's Data Cleaning Techniques Using SAS, Third Edition, I describe a macro called %Auto_Outliers. This macro allows you to search for possible data errors in one or more variables with a simple macro call.

Example Statistics

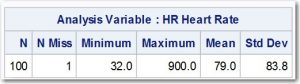

To demonstrate how useful and necessary it is to check your data before starting your analysis, take a look at the statistics on heart rate from a data set called Patients (in the Clean library) that contains an ID variable (Patno) and another variable representing heart rate (HR). This is one of the data sets I used in my book to demonstrate data cleaning techniques. Here is output from PROC MEANS:

The mean of 79 seems a bit high for normal adults, but the standard deviation is clearly too large. As you will see later in the example, one person had a heart rate of 90.0, but the value was entered as 900 by mistake (it appears as the maximum value in the output). A severe outlier can have a strong effect on the mean, but an even stronger effect on the standard deviation. Recall that one step in computing a standard deviation is to subtract the mean from each value and square the difference. Because the differences are squared, a single value far from the mean can dominate the sum and greatly inflate the standard deviation.
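To see the squaring effect concretely, here is a small sketch in Python (used only for illustration; the book's code is SAS). The heart rates are hypothetical, not the values in Clean.Patients, and the helper names `summarize` and `squared_deviation_share` are made up for this example. `statistics.stdev` computes the sample standard deviation with an n - 1 denominator.

```python
import statistics

# Hypothetical heart rates (NOT the Patients data); the last value is a valid 90.
clean = [68, 72, 75, 70, 66, 80, 74, 90]
# The same values, but with 90 mistyped as 900.
with_error = clean[:-1] + [900]


def summarize(values):
    """Return the mean and the sample standard deviation (n - 1 denominator)."""
    return statistics.mean(values), statistics.stdev(values)


def squared_deviation_share(values, index):
    """Fraction of the total squared deviation contributed by values[index]."""
    m = statistics.mean(values)
    squares = [(x - m) ** 2 for x in values]
    return squares[index] / sum(squares)


mean_clean, sd_clean = summarize(clean)
mean_err, sd_err = summarize(with_error)

print(f"clean:      mean={mean_clean:.1f}  sd={sd_clean:.1f}")
print(f"with error: mean={mean_err:.1f}  sd={sd_err:.1f}")
print(f"mean grew {mean_err / mean_clean:.1f}x, sd grew {sd_err / sd_clean:.1f}x")
share = squared_deviation_share(with_error, -1)
print(f"share of the squared deviations coming from the 900: {share:.0%}")
```

With these made-up numbers, the single typo roughly doubles the mean but makes the standard deviation more than 30 times larger, and the 900 alone accounts for most of the sum of squared deviations.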

Macro

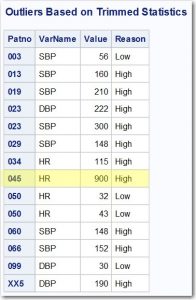

Let's run the %Auto_Outliers macro on this data set to check for possible outliers (which may or may not be errors).

Here is the call:

```sas
%Auto_Outliers(Dsn=Clean.Patients, Id=Patno, Var_List=HR SBP DBP, Trim=.1, N_Sd=2.5)
```

This call looks for possible errors in three variables (HR, SBP, and DBP); however, we will look only at HR in this example. Id=Patno identifies each listed observation by patient number. Setting Trim equal to .1 removes the top and bottom 10% of the data values before the mean and standard deviation are computed, so extreme values such as the 900 cannot inflate the very statistics used to detect them. Setting N_Sd (number of standard deviations) to 2.5 lists any heart rate that is more than 2.5 trimmed standard deviations from the trimmed mean.
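The idea behind the Trim and N_Sd parameters can be sketched in a few lines of Python. This is not the %Auto_Outliers macro itself: the helper names `trimmed_stats` and `flag_outliers`, the rule of dropping floor(n * trim) sorted values from each end, and the data are all assumptions for illustration, and the macro may trim or scale the standard deviation differently.

```python
import statistics


def trimmed_stats(values, trim):
    """Mean and sample SD after dropping the lowest and highest `trim` fraction.

    Assumption: k = floor(n * trim) values are removed from each end after sorting.
    """
    ordered = sorted(values)
    k = int(len(ordered) * trim)
    kept = ordered[k:len(ordered) - k]
    return statistics.mean(kept), statistics.stdev(kept)


def flag_outliers(ids, values, trim=0.1, n_sd=2.5):
    """Return (id, value) pairs more than n_sd trimmed SDs from the trimmed mean."""
    center, spread = trimmed_stats(values, trim)
    return [(i, v) for i, v in zip(ids, values) if abs(v - center) > n_sd * spread]


# Hypothetical patient IDs and heart rates (NOT the Patients data set).
ids = [f"{i:03d}" for i in range(101, 121)]
hr = [72, 68, 900, 75, 70, 66, 80, 74, 10, 71,
      69, 77, 73, 65, 78, 76, 70, 72, 67, 74]

for patient, value in flag_outliers(ids, hr, trim=0.1, n_sd=2.5):
    print(patient, value)  # prints "103 900", then "109 10"
```

With these hypothetical values, the trimmed mean is 72 and the trimmed SD is about 3.6, so both 900 and 10 are listed. Calling `flag_outliers(ids, hr, trim=0.0)` uses the ordinary mean and SD instead; the 900 inflates the SD to roughly 186, and only the 900 is listed, so the mistyped 10 slips through. That is the reason for trimming before computing the cutoff.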

Result

Here is the result:

After checking every value, it turned out that every value except the one for patient 003 (HR = 56) was a data error. Let's see the mean and standard deviation after the data errors are removed.

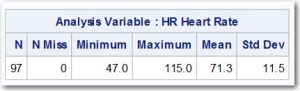

Notice that the mean is now 71.3 and the standard deviation is 11.5. You can see why it is so important to check your data before performing any analysis.

You can download this macro and all the other macros in my data cleaning book by going to support.sas.com/cody. Scroll down to Cody's Data Cleaning Techniques Using SAS, and click on the link named "Example Code and Data." This will download a file containing all the programs, macros, and data files from the book. By the way, you can do this with any of my books published by SAS Press, and it is FREE!

Let me know if you have questions in the comments section, and may your data always be clean! To learn more about SAS Press, check out up-and-coming titles, and to receive exclusive discounts make sure to subscribe to the newsletter.