The DO Loop

Statistical programming in SAS with an emphasis on SAS/IML programs

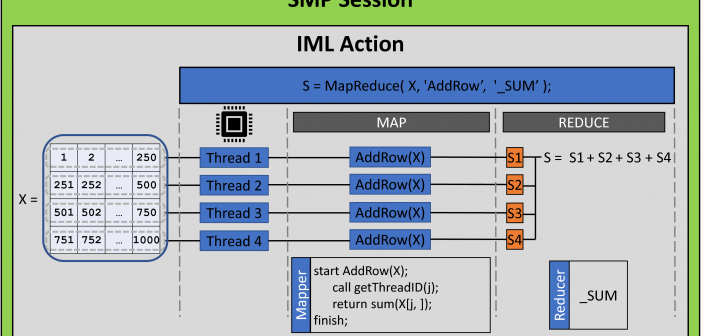

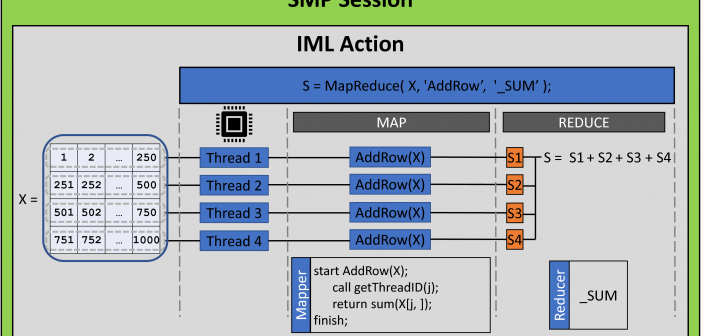

A previous article introduces the MAPREDUCE function in the iml action. (The iml action was introduced in Viya 3.5.) The MAPREDUCE function implements the map-reduce paradigm, which is a two-step process for distributing a computation to multiple threads. The example in the previous article adds a set of numbers by
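The two-step idea can be sketched outside of SAS. The following Python example is only an illustration of the general map-reduce pattern, not the MAPREDUCE function in the iml action. The helper names `split_into_chunks` and `map_reduce_sum` and the default of four threads are assumptions made for this sketch. The map step sends each chunk of the data to a thread, which computes a partial sum. The reduce step combines the partial sums into one total.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import reduce


def split_into_chunks(values, n_chunks):
    """Split a list into n_chunks contiguous pieces of nearly equal size."""
    size, extra = divmod(len(values), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks each receive one additional element.
        stop = start + size + (1 if i < extra else 0)
        chunks.append(values[start:stop])
        start = stop
    return chunks


def map_reduce_sum(values, n_threads=4):
    """Add numbers with a map step (per-thread partial sums) and a reduce step."""
    chunks = split_into_chunks(values, n_threads)
    # Map step: each thread sums its own chunk.
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        partial_sums = list(pool.map(sum, chunks))
    # Reduce step: combine the partial sums into a single total.
    return reduce(lambda a, b: a + b, partial_sums, 0)


total = map_reduce_sum(list(range(1, 1001)))
print(total)  # 500500
```

The same structure applies to any computation in which the per-chunk results can be combined by an associative operation, such as a sum, a maximum, or a count.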

The iml action in SAS Viya (introduced in Viya 3.5) provides a set of general programming tools that you can use to implement a custom parallel algorithm. This makes the iml action different from other Viya actions, which use distributed computations to solve specific problems in statistics, machine learning, and

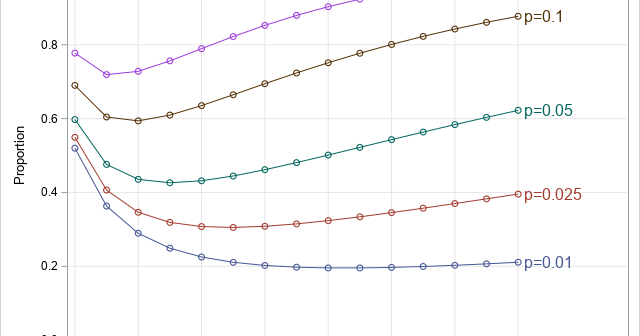

Testing people for coronavirus is a public health measure that reduces the spread of coronavirus. Dr. Anthony Fauci, a US infectious disease expert, recently mentioned the concept of "pool testing." The verb "to pool" means "to combine from different sources." In a USA Today article, Dr. Deborah Birx, the coordinator