Predictive models are a critical component of automated and augmented decision making. As this deployment pattern becomes more widely adopted, two competing priorities emerge: how can we deliver more models faster while remaining confident that they perform accurately and consistently? The key to resolving this dilemma lies in the automated testing of models. Organisations are turning to a practice called ModelOps, which has many similarities to DevOps practices from software development and allows for the rapid delivery and maintenance of high-quality analytic models.

What does ModelOps mean in practice?

As with DevOps, the idea behind ModelOps is to increase the efficiency and throughput of the model development, deployment and monitoring process through automation.

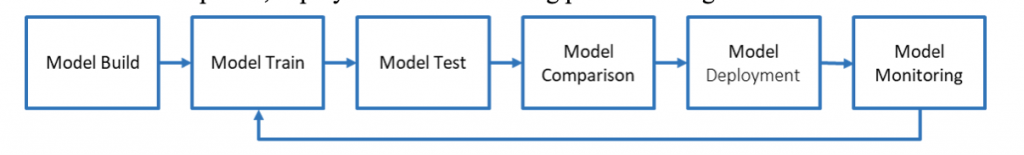

The main stages are:

- Model Build, where a data scientist designs the model to predict the target.

- Model Train, where the model is recalibrated against the latest data.

- Model Test, where the performance of the model is assessed.

- Model Comparison, an optional stage where the model is compared with other competing models to determine the most appropriate for production.

- Model Deployment, where the model is pushed into production and starts to add value to the organisation.

- Model Monitoring, where model performance is continually tracked and evaluated and decisions are made whether to retrain, rebuild or replace a model once performance decays below certain thresholds.

In the DevOps world, the process of testing and deployment into production is automated through tools such as Jenkins. These tools manage the execution of test plans and only pass the code to deployment if the tests are successful. Automated testing and deployment are equally critical components of ModelOps, and tools like Jenkins can be used here too. Ideally, the model test takes place in a container, which ensures a reproducible test harness. Jenkins maintains the logs and associated metadata, and the pipeline will stop before deployment if there is a failure in the test phase.

What should model testing entail?

The first thing to understand about model testing is that the tests must reflect the type of model and its intended use. A test for a model with a binary outcome will differ from a test for a model that predicts a continuous measure, or for a forecast. You must also consider whether the predicted outcome is a rare event, such as fraud. In a rare event situation, the test plan needs to work despite the unbalanced nature of the data.

Test data

A model test is only ever as good as the test data it uses, so you should take care to ensure that the test data:

- Is representative of the population to which you are applying the model.

- Has not also been used to build the model – this is like marking your own homework!

- Is timely, i.e., it is appropriate to the time horizon the model will be applied to.

Where possible and appropriate, the test data might include other demographic information which is not used for model building, such as gender or race. This allows the test routine to ensure that the model does not inadvertently disadvantage or discriminate against some population groups.

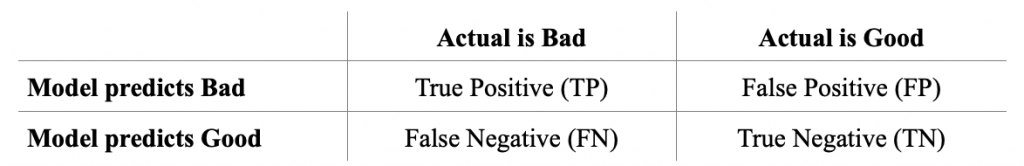

Worked example: A misclassification matrix for a loan decision model

Consider a model that is used to decide whether an applicant should be granted a loan from a bank. In this case, the bank will want to know whether the applicant is likely to default on the loan, or alternatively, that it is safe to lend them the money.

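The model will have a binary output, with “bad” indicating a loan default and “good” indicating no default. The test data is run through a model scoring routine, and the results can then be summarised into a misclassification matrix of true positives (TP), false positives (FP), true negatives (TN) and false negatives (FN). Here a “positive” is a prediction of “bad”, so a true positive is a correctly predicted default.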

The bank would then need to decide where to strike the balance between false positives (where a potentially good loan offer is not made) and false negatives (where a loan is offered and a default occurs). A profit/loss matrix may also be used to measure the relative cost of these incorrect classifications. For example, if the cost per false negative is £2,000 and the cost per false positive is only £500, each false negative costs four times as much, so reducing false negatives should take priority.
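The cost trade-off can be made concrete with a small calculation. The sketch below uses the per-error costs from the example (£500 and £2,000); the error counts for the two cut-offs are hypothetical and chosen only for illustration.

```python
def misclassification_cost(fp, fn, cost_fp=500.0, cost_fn=2000.0):
    """Total cost of the errors, using the per-error costs from the example."""
    return fp * cost_fp + fn * cost_fn

# Hypothetical error counts for two candidate cut-offs on the same test set.
strict = misclassification_cost(fp=40, fn=5)    # declines more applicants
lenient = misclassification_cost(fp=10, fn=20)  # approves more applicants

print(f"strict cut-off:  £{strict:,.0f}")
print(f"lenient cut-off: £{lenient:,.0f}")
```

With these illustrative counts, the stricter cut-off is cheaper even though it produces more errors overall, because it avoids the more expensive false negatives.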

Organisations will typically be interested in other model accuracy and goodness of fit metrics, including:

- Precision = TP / (TP + FP)

- Recall or Sensitivity = TP / (TP + FN)

- Specificity = TN / (FP + TN)

- Accuracy = (TP + TN) / (TP + FP + TN + FN)

A test program would, therefore, need to collect the latest model and the appropriate test data, score the test data, and then summarise the output to generate false positive and false negative rates and any other metrics of interest.
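The summarising step of such a test program might look like the sketch below. It assumes the scoring routine has already produced two parallel lists of labels, treats “bad” as the positive class, and applies the formulas listed above. The function names and the sample labels are hypothetical.

```python
from collections import Counter

def confusion_counts(actual, predicted, positive="bad"):
    """Count TP, FP, TN and FN, treating `positive` as the positive class."""
    counts = Counter()
    for a, p in zip(actual, predicted):
        if p == positive:
            counts["TP" if a == positive else "FP"] += 1
        else:
            counts["FN" if a == positive else "TN"] += 1
    return counts

def safe_div(numerator, denominator):
    """Return NaN rather than raising when a denominator is zero."""
    return numerator / denominator if denominator else float("nan")

def fit_metrics(c):
    tp, fp, tn, fn = c["TP"], c["FP"], c["TN"], c["FN"]
    return {
        "precision": safe_div(tp, tp + fp),
        "recall": safe_div(tp, tp + fn),
        "specificity": safe_div(tn, fp + tn),
        "accuracy": safe_div(tp + tn, tp + fp + tn + fn),
        "false_positive_rate": safe_div(fp, fp + tn),
        "false_negative_rate": safe_div(fn, tp + fn),
    }

# Hypothetical scored test data.
actual    = ["bad", "bad", "good", "good", "good", "bad", "good", "good"]
predicted = ["bad", "good", "good", "bad", "good", "bad", "good", "good"]

counts = confusion_counts(actual, predicted)
metrics = fit_metrics(counts)
print(dict(counts))
print(metrics)
```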


Other model test statistics to consider

Gini coefficient: During model development, it is common to examine a Receiver Operating Characteristic (ROC) curve, which plots recall or sensitivity (the true positive rate) against 1 − specificity (the false positive rate). While it is hard for a test program to examine a chart, the area under the curve (AUC) can be used to derive the Gini coefficient (Gini = 2 × AUC − 1), which is a good measure of model performance and can be used as a stage gate to prevent an inaccurate model being deployed.
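A test program can compute this without drawing the chart. The sketch below uses the standard relationship Gini = 2 × AUC − 1 and calculates the AUC as the proportion of (positive, negative) pairs in which the positive case receives the higher score, with ties counting as half. The pairwise approach is chosen for readability and is slow on large test sets; the labels and scores are hypothetical.

```python
def auc(labels, scores):
    """Area under the ROC curve via pairwise comparison (1 = positive class)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("test data must contain both classes")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))

def gini(labels, scores):
    """Gini coefficient derived from the AUC."""
    return 2 * auc(labels, scores) - 1

# Hypothetical test labels (1 = default) and model scores.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.7, 0.6, 0.2, 0.4, 0.5]

print(f"AUC  = {auc(labels, scores):.3f}")
print(f"Gini = {gini(labels, scores):.3f}")
```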

Interpretation of fit statistics for interval variables is harder because there is no defined measure of what “good” or “accurate” is. It is also hard to relate the value of the model to the business above a simplistic or rules-based approach without a large amount of historical data.

R-squared: The best approach is to use R-squared (or a variant such as adjusted R-squared), which measures how much of the variation in the actual values is explained by the predicted values. However, you must exercise caution because R-squared is sensitive to the size and shape of the data: it never decreases as predictors are added and can be inflated on small samples, so values from different data sets are not directly comparable. It is also not always true that a high R-squared is a good thing. If it’s too high, then it might imply that the model is being built on indicators that exactly predict the outcome, so the model should be reevaluated carefully!
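For reference, a minimal sketch of the calculation on held-out test data is shown below. It uses the usual definition of R-squared as one minus the ratio of the residual sum of squares to the total sum of squares; the sample values are hypothetical.

```python
def r_squared(actual, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_actual = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    if ss_tot == 0:
        raise ValueError("actual values are constant; R-squared is undefined")
    return 1 - ss_res / ss_tot

# Hypothetical actual and predicted values from a test set.
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]

print(f"R-squared = {r_squared(actual, predicted):.3f}")
```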

In general, the threshold between good and bad performance needs to be specific to both the model and test data. This threshold should be derived during the model build phase and applied with an upper and lower limit to check that the model hasn’t become suspiciously good.
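A two-sided check of this kind could be sketched as follows. The limits shown are hypothetical placeholders; in practice they would come from the model build phase for the specific model and test data.

```python
def classify_metric(value, lower, upper):
    """Compare a test statistic with limits agreed during model build."""
    if value < lower:
        return "too_low"
    if value > upper:
        return "suspiciously_high"
    return "ok"

# Hypothetical limits for a Gini coefficient.
GINI_LOWER, GINI_UPPER = 0.40, 0.95

for observed in (0.35, 0.62, 0.99):
    print(observed, classify_metric(observed, GINI_LOWER, GINI_UPPER))
```

Returning a distinct status for the upper limit lets the test log show whether a model was blocked for being weak or for being implausibly strong, since the two cases call for different investigations.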

_______________________________________________________________

The brilliance of PROC Assess in SAS Viya

A test plan has to align with the models, so it can’t be completely automated. However, SAS Viya takes much of the work out of generating fit statistics for supervised learning models through PROC Assess, which generates a raft of statistics and can be applied across a number of BY groups to check fairness across demographics. The metrics returned depend on the model type and include misclassification, ROC and lift.

_______________________________________________________________

Model fairness

My colleague Colin Gray has discussed the potential for models to cause unfairness. You should take steps to ensure you deploy models equitably.

One way of achieving this is the FATE framework (Fairness, Accountability, Transparency, Explainability) discussed by Iain Brown. Unfortunately, related techniques such as LIME (Local Interpretable Model-agnostic Explanations) do not readily lend themselves to use as a model stage gate.

Perhaps the best that can be done at this stage is to build simpler comparisons (such as misclassification matrices) across representative subgroups so that model deployment can be prevented where a particular gender or ethnicity, for example, is discriminated against.
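One possible form of such a comparison is sketched below. It assumes the test data carries a demographic group alongside the actual and predicted labels, and it compares the false positive rate (good applicants wrongly predicted “bad”) across groups. The choice of metric, the group names, the sample records and the tolerance are all assumptions made for illustration; other fairness measures may suit a given model better.

```python
from collections import defaultdict

def false_positive_rate_by_group(records, positive="bad"):
    """records: iterable of (group, actual, predicted) tuples."""
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, actual, predicted in records:
        if actual != positive:          # applicant did not default
            negatives[group] += 1
            if predicted == positive:   # but the model predicted a default
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items()}

def max_gap(rates):
    """Largest difference in rate between any two groups."""
    return max(rates.values()) - min(rates.values())

# Hypothetical scored test records: (group, actual, predicted).
records = [
    ("A", "good", "good"), ("A", "good", "good"),
    ("A", "good", "bad"),  ("A", "good", "good"),
    ("B", "good", "bad"),  ("B", "good", "good"),
    ("B", "good", "bad"),  ("B", "good", "good"),
    ("A", "bad", "bad"),   ("B", "bad", "bad"),
]

rates = false_positive_rate_by_group(records)
gap = max_gap(rates)
TOLERANCE = 0.10  # hypothetical limit on the acceptable gap
fairness_ok = gap <= TOLERANCE
print(rates, f"gap={gap:.2f}", "pass" if fairness_ok else "fail")
```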

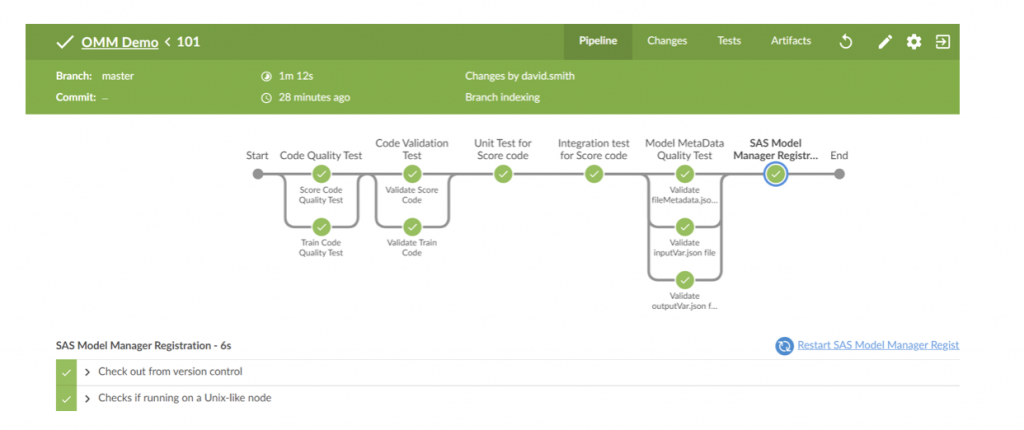

Testing as part of ModelOps automation

In a ModelOps environment such as a Jenkins Pipeline, if any of the thresholds set in the test plan is breached, the test program will generate an error and the process will terminate, preventing an inappropriate deployment of the model. An example of this is shown in the accompanying figure, where a Jenkins pipeline manages the testing process before the model is registered into SAS Model Manager to be available for deployment.
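The test program can signal a breach to the pipeline through its exit status, since a Jenkins shell step fails when the command it runs returns a non-zero code. The sketch below is a minimal illustration of that pattern: the check names and results are hypothetical, and a real test program would compute them from the scored test data.

```python
import sys

def gate_exit_code(checks):
    """Return 0 if every named check passed, otherwise 1.

    checks: dict mapping a check name to True (passed) or False (failed).
    """
    failed = [name for name, passed in checks.items() if not passed]
    for name in failed:
        # Written to stderr so the failure appears in the pipeline log.
        print(f"MODEL TEST FAILED: {name}", file=sys.stderr)
    return 1 if failed else 0

# Hypothetical results from earlier accuracy and fairness checks.
checks = {
    "gini_within_limits": True,
    "false_negative_rate_within_limits": True,
    "subgroup_gap_within_tolerance": False,
}

exit_code = gate_exit_code(checks)
print("exit code:", exit_code)
# In a pipeline step, finish the script with: sys.exit(exit_code)
```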

The long view

Model testing needs to align with the model being deployed, reflect the business aims, and ensure accuracy and fairness. Testing should be automated as part of ModelOps processes and should act as a stage gate to prevent the deployment of poor models into production. Model performance should also be monitored continuously, by tracking test scores and other KPIs, to determine how quickly a model deteriorates and when it needs to be retrained, rebuilt or retired. Model testing is a necessary condition for AI decision making to be trusted, transparent and fair.