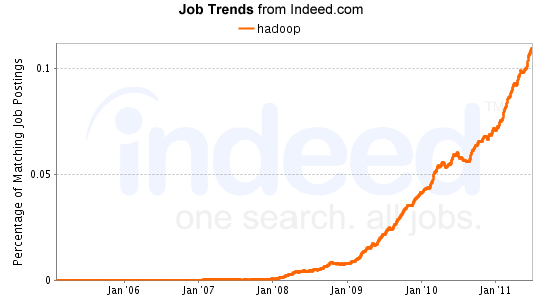

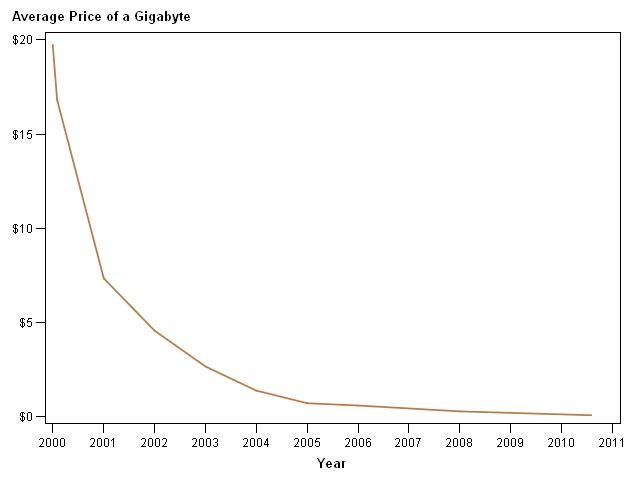

Ask any data warehouse architect what is driving the “big data” craze and they will point to the falling cost of storage and advances in distributed computing, and will most likely mention Hadoop. Most enterprise data warehouses are constrained by the cost and limited scalability of relational databases.