Generative AI has seen drastic improvement in image, video, audio, and text generation within the last few years. Humans, on the other hand, are still catching up on determining when it’s appropriate to use Generative AI and how to review the content generated by AI before sharing it with others. In September 2025, research found 40% of office workers received AI Slop in the last month.

AI Slop is AI-generated content that is poor-quality and low-effort. AI Slop can include research that cites made-up references, blogs with unnecessarily superfluous word choices, emails that are difficult to understand, slide decks that are mostly fluff, code with nonsensical design patterns, or images with strange visuals. In the workplace, individuals who receive AI Slop from their coworkers spend up to 2 hours fixing it. Individuals who receive AI Slop also report feeling annoyed, confused, and offended. An overreliance on AI tooling can not only frustrate your coworkers but can also erode critical thinking and reduce your own understanding.

AI Slop isn’t exclusively a workplace problem. AI Slop has been found in advertising, films, blogs, books, and social media. And one person’s AI Slop may be another’s misinformation. For example, social media is rife with content where users argue in the comments about whether the post is AI-generated.

Like AI Slop, Deepfakes are AI-generated content, but Deepfakes are created to depict real or realistic people, events, or environments. Deepfakes can be created for entertainment, but they are often used to spread misinformation and disinformation. Deepfakes can be used to increase engagement for a social media post or to cause divisions between communities. And while some attempts at Deepfakes are clear AI Slop, others are harder to spot. Creators of Deepfakes are often not transparent about how the image, video, or audio was generated, as it is rarely in their best interest to be.

Best practices for using Generative AI thoughtfully as an individual

Generative AI can be a powerful productivity tool when used thoughtfully. Many of us don’t pick up Generative AI or Copilot tools with the intention of frustrating our coworkers or spreading disinformation. When used correctly, Generative AI can save time, help brainstorm, reduce busy work, and visualize ideas. The most effective users of Generative AI often treat the content as a starting point, a brainstorming aid, or a proof of concept, or they have the expertise to manually review and edit the content before sharing. Additionally, they’re appropriately informing others when content is AI-generated.

For personal use, AI is a great starting point for research as it can compile information from several sources. Recent enhancements in Microsoft 365 Copilot and Google’s AI Overviews include links to source material. These links can help you validate the information provided by AI and point you to additional relevant information. To expand your knowledge of the area, be sure to consult sources beyond the ones the AI surfaced.

Copilots can also be helpful for visualizing ideas or creating mock-ups. One great use case is for home decorating. You can take pictures of a room in your home and use Generative AI to add furniture or try different paint colors. It’s a great way to see how something may look before spending anything. In software development, product teams can visualize how a new feature or enhancement could look in their user interface. They can even create simple, clickable prototypes to gather feedback on the enhancement before development begins.

Before you start sharing your AI-generated content with others, you should review it, understand it, and adjust it as necessary. How detailed your review is and how much you adjust depends on how important the content is and how much you care about the opinions of those who will see it. Printing throw-away decorations for a birthday party? You can get by just making sure the people have a normal number of fingers. But if you’re pushing code to the production server, you’re going to need a much more robust review.

With generated images, many of us can recognize when the image matches its subject and can make a judgment call on whether the image is acceptable or not. Authors who lean on AI to generate blogs should read through the generated text and have enough experience in the area to determine whether the content represents the subject well. (Side note: my blogs are not AI-generated because I personally enjoy writing, especially when I get to slip a meme into my posts.)

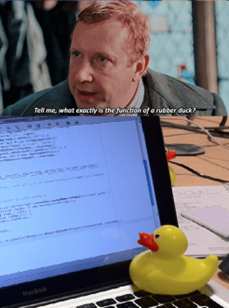

Some code is more important than other code. When developing an app for yourself, manual testing and a review of the generated code is often enough. Production software, in contrast, has very strong standards for a reason. One bad block of code can add security vulnerabilities, delete databases, and cause outages. Even so, many programmers use AI as a brainstorming tool. You can bounce ideas off of Copilots (like a rubber duck), get suggestions of things to try, or ask for feedback. For production software, AI-generated code should be manually reviewed for accuracy and efficiency, robustly tested, and documented as generated by AI. AI can make programming accessible for many, but learning to program is still a worthwhile skill as it helps you understand the quality of AI-generated code.
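To make "reviewed, tested, and documented as generated by AI" a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `slugify_title` helper, the wording of the provenance note, and the test cases are made up for illustration, and your team's conventions for labeling AI-assisted code may look quite different.

```python
import re


def slugify_title(title: str) -> str:
    """Turn a blog title into a URL-friendly slug.

    Provenance (hypothetical example): drafted with an AI assistant,
    then reviewed and edited by a human before merging.
    """
    # Lowercase, then collapse every run of non-alphanumerics into one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    # Trim hyphens left over from leading or trailing punctuation.
    return slug.strip("-")


def test_slugify_title() -> None:
    # The happy path the AI was asked to handle.
    assert slugify_title("Stop the Slop") == "stop-the-slop"
    # Edge cases a human reviewer thought to check.
    assert slugify_title("  AI Slop: What is it?  ") == "ai-slop-what-is-it"
    assert slugify_title("!!!") == ""


test_slugify_title()
```

The point isn't the function itself. It's that the provenance note tells the next reader where the code came from, and the edge-case tests are evidence that a person actually thought about how it could break.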

Stop the Slop

In the workplace, AI Slop can create additional work for the coworkers who have to translate or clean up the poor work. Sending and posting AI Slop may cause others to think less of you. When applied without transparency, AI Slop can mislead or misinform others. If used as a replacement for learning, reliance on AI can reduce critical thinking skills.

If the personal, professional, or educational costs of AI Slop still haven’t convinced you, think of the poor AI models! AI Slop can end up in the training data for future generative models and lead to model collapse. Model collapse can make the content generated by future models lower-quality, less accurate, and less creative. Like a hammer, AI is a productive tool when wielded correctly but can be a destructive force when used carelessly.

If you have any tips or use cases for using Generative AI effectively, please share those in the comments! Or, if you want to commiserate and share the worst examples of AI Slop you’ve come across, that’s also welcome.