As Earth completes its routine annual circle around the sun and a new (and hopefully better) year kicks in, it is a perfect occasion to reflect on the idiosyncrasies of time.

While it is customary to think that 3+2=5, that is only true in a sequential world. In a parallel world, however, 3+2=3. Think about it: if you have two SAS programs, one of which runs for 3 hours and the other for 2 hours, their total duration will be 5 hours if you run them sequentially, one after another, but only 3 hours if you run them simultaneously, in parallel.

I am sure you remember those “filling up a swimming pool” math problems from elementary school. They clearly and convincingly demonstrate that two pipes will fill up a swimming pool faster than one. That’s the power of running water in parallel.

The same principle of parallel processing (or parallel computing) applies to SAS programs (and non-SAS programs): you run their different independent pieces in separate SAS sessions at the same time (in parallel). Divide and conquer.

You might be surprised at how easily this can be done, and at the same time how powerful it is. Let’s take a look.

SAS/CONNECT

SAS/CONNECT® is one of the oldest SAS products. It was developed to enable SAS programs to run in multi-machine client/server environments. In its original incarnation, SAS/CONNECT allowed only synchronous execution of remote SAS sessions. That is, when a remote session was started, the client session was suspended until the server session had completed its processing. That was client/server, but not parallel processing.

MP CONNECT

Starting with SAS 8, released in 1999, Multi-Process Connect (MP CONNECT) parallel processing functionality was added to SAS/CONNECT, enabling you to execute multiple SAS sessions asynchronously. When a remote SAS session kicks off asynchronously, a portion of your SAS program is sent to the server session for execution, and control is immediately returned to the client session. The client session can continue with its own processing or spawn one or more additional asynchronous remote server sessions.

Running programs in parallel on a single machine

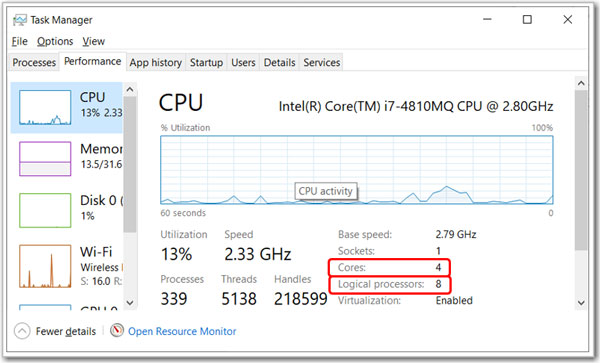

Sometimes, what comes across as new is just well forgotten old. They used to be Central Processing Units (CPU), but now they are called just processors. Nowadays, practically every single computer is a “multi-machine” (or to be precise “multi-processor”) device. Even your laptop. Just open Task Manager (Ctrl-Alt-Delete), click on the Performance tab and you will see how many physical processors (or cores) and logical processors your laptop has:

That means this laptop can run eight independent SAS processes (sessions) at the same time. All you need to do is ask nicely: “Dear Mr. & Mrs. SAS/CONNECT, my SAS program consists of several independent pieces. Would you please run each piece in its own SAS session, and run them all at the same time?” And believe me, SAS/CONNECT does not care how many logical processors you have, or whether they sit on separate “remote machines” far away from each other, in a single laptop, or even on a single chip.

Here is how you communicate your request to SAS/CONNECT in SAS language.

Spawning multiple SAS sessions using MP Connect

Suppose you have SAS code that consists of several pieces (DATA or PROC steps) that are independent of each other, i.e., they do not need to run in a specific sequence. For example, each of two pieces generates its own data set.

Then we can create these two data sets in two separate “remote” SAS sessions that run in parallel. Here is how you do this. (For illustration purposes, I just create two dummy data sets.)

```sas
options sascmd="sas";

/* Current datetime */
%let _start_dt = %sysfunc(datetime());

/* Process 1 */
signon task1;
rsubmit task1 wait=no;
   libname SASDL 'C:\temp';
   data SASDL.DATA_A (keep=str);
      length str $1000;
      do i=1 to 1150000;
         str = '';
         do j=1 to 1000;
            str = cats(str,'A');
         end;
         output;
      end;
   run;
endrsubmit;

/* Process 2 */
signon task2;
rsubmit task2 wait=no;
   libname SASDL 'C:\temp';
   data SASDL.DATA_B (keep=str);
      length str $1000;
      do i=1 to 750000;
         str = '';
         do j=1 to 1000;
            str = cats(str,'B');
         end;
         output;
      end;
   run;
endrsubmit;

waitfor _all_;
signoff _all_;

/* Print total duration */
data _null_;
   dur = datetime() - &_start_dt;
   put 30*'-' / ' TOTAL DURATION:' dur time13.2 / 30*'-';
run;
```

In this code, the key elements are:

- SASCMD= system option: specifies the command that starts a server session on a multiprocessor computer.

- SIGNON statement: initiates a connection between a client session and a server session.

- RSUBMIT statement: marks the beginning of a block of statements that a client session submits to a server session for execution.

- ENDRSUBMIT statement: marks the end of a block of statements that a client session submits to a server session for execution.

- WAITFOR statement: causes the client session to wait for the completion of one or more tasks (asynchronous RSUBMIT statements) that are in progress.

- SIGNOFF statement: ends the connection between a client session and a server session.

Parallel processing vs. threaded processing

There is a distinction between parallel processing described above and threaded processing (aka multithreading). Parallel processing is achieved by running several independent SAS sessions, each processing its own unit of SAS code.

Threaded processing, on the other hand, is achieved by developing special algorithms and implementing executable code that runs in multiple threads on multiple processors within the same SAS session. Many SAS PROCs are multithreaded by design (e.g., SORT, SQL, MEANS/SUMMARY, TABULATE, REG, GLM, and others).

Time savings achieved by parallel processing

Simplistically, the total duration of several independent processes running in parallel is equal to the duration of the longest of these processes.

In the code example above, we have two single-threaded SAS DATA steps, so we can take full advantage of SAS MP CONNECT. This code spawns two “remote” SAS sessions, each running its own DATA step. On my PC, the SAS log showed that the DATA_A step took 3 minutes to complete, while the DATA_B step took 2 minutes. However, the total duration of these two tasks was 3 minutes, which is equal to the duration of the longer of the two processes. That is how we get 3 + 2 = 3.

Mathematically speaking, the duration of running multiple processes sequentially is Dseq = d1 + d2 + ... + dn, while the duration of running them in parallel is Dpar = max(d1, d2, ..., dn), where d1, d2, ..., dn are durations of the individual component processes.
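To make the two formulas concrete, here is a small DATA step that plugs in the durations measured in this post (d1 = 3 minutes for DATA_A, d2 = 2 minutes for DATA_B). It is only an arithmetic illustration of Dseq and Dpar, not a timing tool; add more values to the array to model more processes.

```sas
/* Worked example: sequential vs. parallel duration, in minutes,
   using the two step durations reported in this post */
data _null_;
   array d[2] _temporary_ (3 2);   /* d1 = DATA_A, d2 = DATA_B        */
   d_seq = sum(of d[*]);           /* Dseq = d1 + d2 + ... + dn       */
   d_par = max(of d[*]);           /* Dpar = max(d1, d2, ..., dn)     */
   put 'Sequential duration (Dseq): ' d_seq 'minutes';
   put 'Parallel duration (Dpar):   ' d_par 'minutes';
run;
```

With these inputs the log reports 5 minutes for Dseq and 3 minutes for Dpar, which is the 3 + 2 = 3 of the introduction.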

It might not look too remarkable when we cut run time from 5 minutes to 3 minutes, but it becomes more significant for longer processes. For example, cutting run time from 5 hours to 3 hours saves 2 whole hours. That time saving can be made even more impressive if we can split our SAS code into more than two parallel processes.
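To go beyond two sessions, the SIGNON/RSUBMIT pair can be generated in a macro loop. The following is a minimal sketch, assuming the same C:\temp folder as the program above; the macro name RUN_PARALLEL, the PART_n data set names, and the random-number workload are hypothetical placeholders for your own independent pieces of code.

```sas
options sascmd="sas";

/* Hypothetical macro: spawns &n asynchronous sessions, task1..task&n */
%macro run_parallel(n=4);
   %local t;
   %do t=1 %to &n;
      signon task&t;
      rsubmit task&t wait=no;
         libname SASDL 'C:\temp';
         /* &t is resolved by the client macro processor before
            the block is sent, so each session gets its own number */
         data SASDL.PART_&t;
            call streaminit(&t);
            do i=1 to 1000000;
               x = rand('uniform');
               output;
            end;
         run;
      endrsubmit;
   %end;

   /* Block until every task finishes, then close all sessions */
   waitfor _all_;
   signoff _all_;
%mend run_parallel;

%run_parallel(n=4)
```

Keeping n at or below the number of logical processors is a reasonable starting point, and since every session has startup overhead, each piece should have enough work to justify its own session.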

Interestingly, when running in parallel, each of the DATA_A and DATA_B steps takes slightly longer than when they run in a single session. If we run these two DATA steps sequentially in a single session, the DATA_A step takes 2 minutes 45 seconds, and the DATA_B step takes 1 minute 45 seconds. That is because even though parallel SAS processes run on separate processors, they still share (and compete for) other common computer resources such as RAM and hard drive.

If each of our parallel SAS processes runs a multithreaded PROC, we may not gain meaningful time savings, because each such PROC already employs multiple processors at the same time.
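If you do combine the two techniques, one way to limit contention is to cap the number of threads each session may use with the THREADS and CPUCOUNT= system options. A minimal sketch, assuming 8 logical processors (as in the Task Manager example) and the DATA_A and DATA_B data sets created by the earlier program; the _SORTED output names are hypothetical, and the sort is there only to show where the options go.

```sas
options sascmd="sas";

/* Session 1: threaded sort, capped at 4 threads (assumes 8 logical CPUs) */
signon task1;
rsubmit task1 wait=no;
   options threads cpucount=4;
   libname SASDL 'C:\temp';
   proc sort data=SASDL.DATA_A out=SASDL.DATA_A_SORTED threads;
      by str;
   run;
endrsubmit;

/* Session 2: same cap, so both sessions together use at most 8 threads */
signon task2;
rsubmit task2 wait=no;
   options threads cpucount=4;
   libname SASDL 'C:\temp';
   proc sort data=SASDL.DATA_B out=SASDL.DATA_B_SORTED threads;
      by str;
   run;
endrsubmit;

waitfor _all_;
signoff _all_;
```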

On the other hand, you can still accelerate your program by running it in parallel even on a single processor. That is because your spawned “remote” sessions might require different resources at different times: while one session is using the processor, another might be doing input/output (I/O) operations, thus reducing processor idle time.

For a deeper discussion, you may consider delving into Amdahl's law, which provides the theoretical background for estimating the potential speedup achievable by parallel computing on multiple processors.
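Amdahl's law states that if a fraction p of the run time can be parallelized across N processors, the speedup is S(N) = 1 / ((1 - p) + p / N). The DATA step below tabulates it for a hypothetical p = 0.9; substitute your own estimate of the parallelizable share.

```sas
/* Amdahl's law: S(N) = 1 / ((1 - p) + p / N)
   p = fraction of run time that can be parallelized (hypothetical 0.9)
   n = number of processors (parallel sessions)                        */
data amdahl;
   p = 0.9;
   do n = 1, 2, 4, 8, 16;
      speedup = 1 / ((1 - p) + p / n);
      output;
   end;
run;

proc print data=amdahl noobs;
   var n speedup;
   format speedup 6.2;
run;
```

With p = 0.9, the speedup grows from 1.82 at N = 2 to 6.40 at N = 16 and can never exceed 1 / (1 - p) = 10, no matter how many sessions you spawn.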

Passing information to and from “remote” SAS sessions

Besides passing pieces of SAS code from client sessions to server sessions, MP CONNECT allows you to pass some other SAS objects.

Passing data library definitions

For example, if you have a data library defined in your client session, you can pass that library definition on to multiple server sessions without redefining it in each server session.

Let’s say you have two data libraries defined in your client session:

```sas
libname SRCLIB oracle user=myusr1 password=mypwd1 path=mysrv1;
libname TGTLIB '/sas/data/datastore1';
```

To make these data libraries available in the remote session, all you need to do is add the INHERITLIB= option to the RSUBMIT statement:

```sas
rsubmit task1 wait=no inheritlib=(SRCLIB TGTLIB);
```

This allows libraries that are defined in the client session to be inherited by, and available in, the server session. Optionally, each client libref can be associated with a libref that has a different name in the server session:

```sas
rsubmit task1 wait=no inheritlib=(SRCLIB=NEWSRC TGTLIB=NEWTGT);
```
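The statements above show only the RSUBMIT line. A fuller sketch, assuming a writable C:\temp folder, might look like this: the server session writes through a library that was defined only in the client session. The CLASS_COPY data set name is hypothetical.

```sas
options sascmd="sas";

/* Library defined in the client session only (hypothetical path) */
libname TGTLIB 'C:\temp';

signon task1;
rsubmit task1 wait=no inheritlib=(TGTLIB=NEWTGT);
   /* NEWTGT is the server-side name of the client's TGTLIB */
   data NEWTGT.CLASS_COPY;
      set sashelp.class;
   run;
endrsubmit;

waitfor task1;
signoff task1;

/* Back in the client, the result is visible through TGTLIB */
proc contents data=TGTLIB.CLASS_COPY;
run;
```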

Passing macro variables from client to server session

The %SYSLPUT statement allows a client session to create a single macro variable in the server session or to copy a specified group of macro variables to the server session. The general syntax of the %SYSLPUT statement is one of the following:

- %SYSLPUT macro-variable=<value></REMOTE=server-ID>;

- %SYSLPUT _ALL_ | _AUTOMATIC_ | _GLOBAL_ | _LOCAL_ | _USER_ </LIKE='character-string'><REMOTE=server-ID>;

And here is an example of how to pass the value of a client-session macro variable run_dt to a remote session as the macro variable rem_run_dt:

```sas
options sascmd="sas";
%let run_dt = %sysfunc(datetime());
signon task1;
%syslput rem_run_dt=&run_dt / remote=task1;
rsubmit task1 wait=no;
   %put &=rem_run_dt;
endrsubmit;
waitfor task1;
signoff task1;
```

Passing macro variables from server to client session

Similarly, the %SYSRPUT statement assigns a value from the server session to a macro variable in the client session. The general syntax of the %SYSRPUT statement is one of the following:

- %SYSRPUT macro-variable=value;

(macro-variable specifies the name of a macro variable in the client session.)

- %SYSRPUT _USER_ </LIKE='character-string'>;

(/LIKE='character-string' specifies a subset of macro variables whose names match a user-specified character sequence, or pattern.)

Here is a code example that passes two macro variables, rem_start and rem_year, from the remote session and writes them to the SAS log in the client session:

```sas
options sascmd="sas";
signon task1;
rsubmit task1 wait=no;
   %let start_dt = %sysfunc(datetime());
   %sysrput rem_start=&start_dt;
   %sysrput rem_year=2021;
endrsubmit;
waitfor task1;
signoff task1;
%put &=rem_start &=rem_year;
```
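A common practical use of %SYSRPUT is to send back a value that the server session computed, such as a row count. The sketch below counts the observations in SASHELP.CLASS in the server session and reports the result in the client; the macro variable names nobs and rem_nobs are hypothetical.

```sas
options sascmd="sas";
signon task1;
rsubmit task1 wait=no;
   /* Server side: read the observation count without reading any rows */
   data _null_;
      if 0 then set sashelp.class nobs=n;
      call symputx('nobs', n);
      stop;
   run;
   /* Copy the server-side value into a client macro variable */
   %sysrput rem_nobs=&nobs;
endrsubmit;

/* The client value is set once the task has completed */
waitfor task1;
signoff task1;
%put NOTE: SASHELP.CLASS has &rem_nobs observations.;
```

Since SASHELP.CLASS ships with 19 observations, the client log should report 19.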

Summary

SAS Multi-Process Connect is a simple and efficient tool for parallel execution of independent programming units. Compared to sequential processing of time-intensive programs, it can substantially reduce the overall duration of your program execution.

Additional resources

- SAS timer - the key to writing efficient SAS code

- SAS® Meets Big Iron: High Performance Computing in SAS Analytic Procedures

- Beating Gridlock: Parallel Programming with SAS® Grid Computing and SAS/CONNECT®

- Advanced Multithreading Techniques for Performance Improvement of SAS® Processes

See also: Using shell scripts for massively parallel processing

18 Comments

This is a wonderful article, thank you for taking the time to share it. And thanks for explaining it like a teacher would.

Thanks, John, for such a nice comment. And you are very welcome!

I experimented a bit with this pattern last year, using SAS Data Integration Studio (which encapsulates these techniques using its loop node), and hit a peak "parallelisation factor" of ~ 600 without trying very hard.

In the "ETL" space this is well-trodden ground, but you have to take into account factors like I/O contention, how to orchestrate the results into one place without inflicting locks on yourself, and your platform's overall "bandwidth".

You can get really advanced if needs be, even getting into spinning up transient "worker servers" when you're on a Cloud platform.

But, it's really about "orchestration" in the end.

I can dig out the jobs I put together as a test harness to simulate this, and the Linux scripts I used to monitor exactly how many SAS processes I hit at peak.

(Be careful trying this on less than industrial-strength kit: you can "melt" your laptop if you push things too far, and more or less DDoS it to death with SAS processes, as I have a few times. I ended up hard rebooting my laptop, and the whole machine basically became unresponsive when no limits were imposed.)

Thank you, Angus, for your insightful and constructive comment. With a "parallelisation factor of ~ 600" you really pushed it to the limit. It would have been interesting to see your research on such extreme cases. Maybe a SAS Global Forum paper is in order?

I understand that overloading a laptop can lead to a sort of computer paralysis / freeze, but "melting" is probably an overstatement. 🙂

Nice blog.

The blog mentions that when running in parallel, each step DATA_A and DATA_B takes slightly longer than when they run in a single session.

That doesn’t have to be the case, and when it is the case, it’s an indication that there are resource limitations on the machine(s) and they aren’t architected and/or tuned for optimal SAS performance. When you see this type of thing, you may want to invest some time in diagnosing the root cause since, in some cases, it may not be too hard, via tuning, to eliminate or reduce resource limitations.

A good place to start if you wanted to do some investigation would be the usage note found here https://support.sas.com/kb/42/197.html .

Or just reach out to your SAS account representative, my team at SAS does a lot of that type of thing.

Thank you, Glenn, for your comment and suggested resources. Obviously, you can optimize performance by fine-tuning and proper configuration to get the best out of it, but still with two processes running in parallel on a multi-processor single machine there will be some resources (memory, I/O, etc.) these two processes will be competing for, while there will be no such competition when they run one by one. Therefore, total duration will be cut slightly less than in half.

Hi Leonid!

Very interesting article (as always!). SAS/Connect is very useful for "making things parallel" in SAS!

It can be done with "simpler" means too. SYSTASK can achieve the same result and requires only Base SAS on board.

I did some demo tutorial about it sometime ago here:

https://pages.mini.pw.edu.pl/~jablonskib/SASpublic/Parallel-processing-in-BASE-SAS.sas

and discussed it at communities.sas.com post:

https://communities.sas.com/t5/SAS-Programming/SAS-Parrallel-Processing/m-p/699295#M213925

All the best

Bart

Thank you, Bart, for your feedback and for sharing your code implementations. As always, there are many ways in SAS to solve a problem. Using SYSTASK to spawn multiple processes via OS commands running in background mode is a viable option, especially in SAS environments without SAS/CONNECT. But I wouldn't call it "simpler means". Using SAS/CONNECT, you can have all your SAS code pieces in one place, while with SYSTASK you have greater overhead, as you have to write your code out to external files to be run by OS commands initiated by SYSTASK. To me, SAS/CONNECT provides a simpler and cleaner solution (but obviously it comes at the cost of a SAS/CONNECT license).

Still, the SYSTASK approach is quite practical. As a matter of fact, I am going to use it at one of our clients' SAS installations that does not have SAS/CONNECT. For those who are interested, here are some other valuable resources on running SAS programs in parallel using just Base SAS:

- Parallel Processing Your Way to Faster Software and a Big Fat Bonus: Demonstrations in Base SAS® by Troy Martin Hughes;

- Parallel Processing with Base SAS by Jim Barbour.

Thanks Leonid!

Great stuff! rsubmit is very powerful. I did a related presentation as well in which I use rsubmit to break up single datasets into parallel chunks and bring them back together again. A presentation on this can be found below:

https://www.youtube.com/watch?v=T3PxhJg8ReU

Sample code and a PowerPoint can be found on github:

https://github.com/stu-code/SAS-rsubmit-examples

Regards,

Stu

Thank you, Stu, for your feedback and great related presentations. I am sure a lot of SAS users will find them useful.

Thanks for sharing. Since MP Connect requires the SAS/CONNECT module, I was wondering if the same approach could be "refactored" for Base SAS using the DS2 procedure's so-called threaded processing, perhaps even further with CALL EXECUTE or DOSUBL()? Another idea could be to take advantage of Lua's multithreading capability (Google tells me it's called "coroutines" in Lua) within PROC LUA. Generally speaking, mixing two different heterogeneous languages is rather a weak point cost-wise (the corresponding technical debt for maintenance increases at power 2). On the other hand, Lua is a mature language that demonstrates amazing capabilities, and younger ones among us (this is an 'old-school' speaking) might be less tempted to learn legacy techniques like MP Connect.

Thank you, Ronan, for your feedback. Obviously, there are multiple ways of implementing parallel processing, and you suggested a handful of great ideas. My goal for this post was rather modest: to educate many SAS users about parallel processing capabilities and powers.

That’s a very nice summary overview of SAS/Connect. While it’s been around for quite a while, many SAS developers have not been exposed to it.

I used to work with it a lot years ago, and just this week had to pull back that information for a project I am on; however, my scenario was not so much MP CONNECT as remote processing and remote libraries.

Still, I wish I had seen this post on Monday instead of Wednesday! Nicely presented.

Thank you, Carl, for such nice feedback. I see it as a dual SAS/CONNECT usage: multi-machine remote parallel processing as well as single-machine multi-processor parallel processing.

Hi Leonid,

Thanks for writing this article and for touching so effectively on the salient points! SAS/Connect MMP is a wonderfully powerful tool of the SAS 9 suite. It seems fewer and fewer people remember it as time moves on.

Session spawn overhead is not insignificant, so it is good to be sure sessions have LOTS of work to do. Otherwise the overhead may dilute the efficiency gains.

If one does not have access to multiple servers, SAS also supports SMP (almost like a form of threading, utilizing cores). As with MMP, session spawn overhead is not insignificant, so it is good to be sure sessions have LOTS of work to do.

The CaryPD project utilizes SAS 9 SMP for parts of its heavy calculation needs. Specifically, as it watches and raises Alerts against Ankle Monitor feed readings (IoT?). 100s of millions, into the billions, of calculations are needed. SMP helps perform them in a reasonable time interval.

Thank you, Robert, for your feedback. The CaryPD project is a great example to highlight the value of parallel processing with SAS/CONNECT.

Leonid,

Happy New Year!

Wow; what an excellently-written and informative article this is!

I am going to forward it to several people who I believe will benefit from it.

Best wishes,

--Michael

Thank you, Michael. Welcome back! Happy New Year and best wishes to you too!