Hadoop has been called a game-changing technology. Here’s why:

- DATA IS DIFFERENT: We now have to deal with both structured and unstructured data.

- NO LIMITS: We now deal with terabytes or petabytes of data, not just the megabytes of old.

- COMPLEXITY: We work with complex multi-server architectures and with a complete "zoo"!

- COSTS: We spend less money on hardware and storage.

As SAS technology becomes more integrated with Hadoop, I have noticed many situations where SAS administrators need to work closely with Hadoop administrators and, in some cases, stand in for them.

It's becoming vital for SAS administrators to be familiar with the Hadoop ecosystem, as I suggested in my previous article.

Ideally, the administrator should be able to perform Hadoop admin tasks, run basic commands on the cluster, and check the main indicators of the environment's health.

Any complex IT platform must always be available to users and functional in all of its subsystems, because users keep asking for better performance.

On the other hand, we expect more users to adopt the platform, so it must accommodate different workload requests now and, more importantly, in the future.

Here are some simple examples based on traditional SAS administrator's tasks and their new versions with Hadoop:

1-SERVERS AND SERVICES AVAILABILITY

To check whether your servers are running, you log on to them (or ping them) and check processes with OS-level commands or third-party tools (for SAS, you can use SAS Environment Manager or the sas.servers script on UNIX).

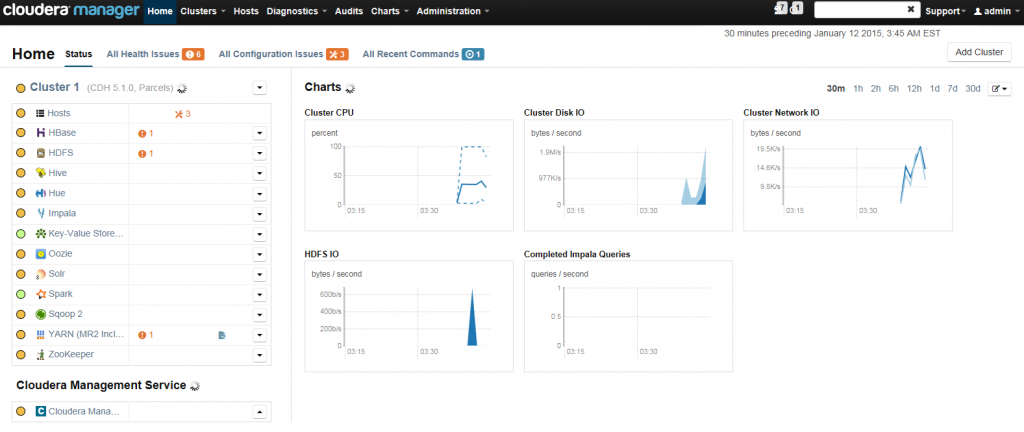

With Hadoop, the basic operations are the same but on a larger scale. You have to check many more servers/nodes, using traditional OS commands, Hadoop-specific commands (HDFS, MapReduce), configuration tools such as Puppet, or web interfaces such as Cloudera Manager that show the status of each service.
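At Hadoop scale, checking each node by hand quickly becomes tedious, so even a small script helps. The Python sketch below only tests whether a TCP connection to each host and port succeeds, roughly a service-level ping rather than a full health check. The host names are hypothetical, and the ports (8020 for NameNode RPC, 8088 for the YARN ResourceManager web UI) are common defaults that you should verify against your own configuration.

```python
import socket


def check_services(targets, timeout=2.0):
    """Return {(host, port): bool}, True when a TCP connection succeeds.

    A successful connect only proves that something is listening on the port;
    it says nothing about whether the service behind it is healthy.
    """
    status = {}
    for host, port in targets:
        try:
            # create_connection resolves the name and opens a TCP socket;
            # the context manager closes it again immediately.
            with socket.create_connection((host, port), timeout=timeout):
                status[(host, port)] = True
        except OSError:
            # Covers refused connections, timeouts and DNS failures.
            status[(host, port)] = False
    return status
```

For example, `check_services([("namenode01.example.com", 8020), ("resourcemanager01.example.com", 8088)])` (hypothetical hosts) returns a dictionary mapping each pair to `True` or `False`; a management console such as Cloudera Manager still gives a much richer picture.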

2-FILE SYSTEMS SPACE

SAS administrators always need to monitor available disk space with tools provided at the operating-system level or by third-party software vendors (trouble starts with DISK FULL!).

HDFS, the Hadoop Distributed File System, is the new file system to manage, and you must use its own commands to check space and permissions. (NB: the total raw space is the sum of the disk space that all the cluster's nodes contribute to HDFS.) For example:

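`hadoop dfsadmin -report` (capacity and usage for the whole cluster and for each DataNode)

`hadoop fs -du -h /` (space used by each directory, in human-readable units)

`hadoop fsck /` (health of files and blocks, for example missing or under-replicated blocks)

On Hadoop 2 and later, `hdfs dfsadmin -report` and `hdfs fsck /` are the preferred, non-deprecated forms of the first and last commands.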
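The output of `dfsadmin -report` is plain text and easy to parse, which makes it a convenient source for a disk-space alert. The Python sketch below assumes that the cluster summary lines (`Configured Capacity`, `Present Capacity`, `DFS Remaining`, `DFS Used`) come before the per-DataNode sections, as in Hadoop 2.x output, and that the replication factor is the default of 3 (`dfs.replication`). Dividing raw free space by the replication factor gives a rough idea of how much new data still fits, which is why the raw total in the NB above overstates usable capacity. The 80% warning threshold is an arbitrary example.

```python
import re
import subprocess

# Summary fields printed at the top of `hdfs dfsadmin -report`. Per-DataNode
# sections repeat some labels later, so only the first match is kept.
_FIELD_RE = re.compile(
    r"^(Configured Capacity|Present Capacity|DFS Remaining|DFS Used):\s*(\d+)",
    re.MULTILINE,
)


def parse_report(text):
    """Return the cluster-summary value, in bytes, of each field above."""
    values = {}
    for label, number in _FIELD_RE.findall(text):
        values.setdefault(label, int(number))
    return values


def summarize(values, replication=3, warn_pct=80.0):
    """Compute used percentage (of Present Capacity) and a rough usable figure."""
    present = values["Present Capacity"]
    used = values["DFS Used"]
    used_pct = 100.0 * used / present if present else 0.0
    return {
        "used_pct": round(used_pct, 1),
        # Every block is stored `replication` times, so raw free bytes divided
        # by the replication factor approximates how much new data still fits.
        "usable_remaining_bytes": values["DFS Remaining"] // replication,
        "warning": used_pct >= warn_pct,
    }


def fetch_report():
    """Run the real command; needs the Hadoop client on PATH and may need HDFS superuser rights."""
    result = subprocess.run(
        ["hdfs", "dfsadmin", "-report"], capture_output=True, text=True, check=True
    )
    return result.stdout
```

On a live cluster, `summarize(parse_report(fetch_report()))` could be scheduled from cron and wired to whatever alerting the SAS platform already uses.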

3-LOAD BALANCING AND WORKLOAD MANAGEMENT

In large SAS deployments, SAS Grid Manager or other SAS technologies distribute jobs and workload across different machines, and the SAS administrator is responsible for their configuration and monitoring. Here’s a handy SAS Grid Computing reference.

A Hadoop deployment normally consists of a very large number of machines (sometimes hundreds), and resource and workload management is provided by YARN. SAS is part of this complex platform and, as of SAS 9.4 maintenance 3, can be integrated with YARN.
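YARN exposes cluster-wide counters through the ResourceManager REST API, which is a simple way to watch workload from a script. This Python sketch reads `/ws/v1/cluster/metrics` and summarizes a few fields of its `clusterMetrics` object. The ResourceManager host name is hypothetical, 8088 is the default web port, and clusters secured with Kerberos or TLS will need authentication that this sketch omits.

```python
import json
import urllib.request

# Hypothetical ResourceManager address; 8088 is the default web/REST port.
RM_URL = "http://resourcemanager01.example.com:8088"


def fetch_cluster_metrics(base_url=RM_URL, timeout=10):
    """GET /ws/v1/cluster/metrics and return the decoded JSON document."""
    with urllib.request.urlopen(f"{base_url}/ws/v1/cluster/metrics", timeout=timeout) as resp:
        return json.load(resp)


def summarize_metrics(payload):
    """Pick a few workload indicators out of the clusterMetrics object."""
    m = payload["clusterMetrics"]
    total = m["totalMB"]
    return {
        "apps_running": m["appsRunning"],
        "apps_pending": m["appsPending"],
        "active_nodes": m["activeNodes"],
        "unhealthy_nodes": m["unhealthyNodes"],
        # Share of cluster memory currently allocated to containers.
        "memory_used_pct": round(100.0 * m["allocatedMB"] / total, 1) if total else 0.0,
    }
```

A steadily growing `apps_pending` count with high `memory_used_pct` is a typical sign that YARN queues need tuning or the cluster needs more capacity.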

4-SYSTEM GROWTH

Adding servers to, or removing them from, a large traditional SAS deployment can be very time-consuming: you normally need to add SAS metadata, install and configure software, and adjust configuration files.

With Hadoop, adjusting the number of nodes in a cluster is much simpler, because the platform is natively designed for adding and removing resources.
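Removing a node gracefully is usually done by decommissioning: you list the host in the exclude files referenced by `dfs.hosts.exclude` (HDFS) and `yarn.resourcemanager.nodes.exclude-path` (YARN), then ask the NameNode and ResourceManager to re-read them. HDFS re-replicates the node's blocks elsewhere before marking it decommissioned. The Python sketch below assumes those properties point to files you can write; the helper names are hypothetical, not part of any Hadoop API.

```python
import subprocess
from pathlib import Path


def add_to_exclude_file(exclude_path, hosts):
    """Merge hosts into an exclude file (one host per line, deduplicated, sorted).

    Returns the resulting host list. Call it once per file if HDFS and YARN
    use different exclude files.
    """
    path = Path(exclude_path)
    current = set(path.read_text().split()) if path.exists() else set()
    updated = sorted(current | set(hosts))
    path.write_text("\n".join(updated) + "\n")
    return updated


def refresh_nodes():
    """Make the NameNode and the ResourceManager re-read their include/exclude files."""
    subprocess.run(["hdfs", "dfsadmin", "-refreshNodes"], check=True)
    subprocess.run(["yarn", "rmadmin", "-refreshNodes"], check=True)
```

For example, `add_to_exclude_file("/etc/hadoop/conf/dfs.exclude", ["datanode07.example.com"])` followed by `refresh_nodes()` starts decommissioning that (hypothetical) node. When adding nodes instead, `hdfs balancer` can redistribute existing blocks onto the new DataNodes.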

Summing up, as a SAS administrator you need to understand the new environment you are working with and become familiar with the Hadoop architecture and ecosystem, as well as the many new tools and technologies that come with it.

Watch this video to learn more about the top 5 Hadoop admin tasks.