While it appears to be a simple web site widget, the "Recommended by SAS" sidebar is made possible by an application of the full Analytics Life Cycle. This includes data collection and prep, model building and test, API wrappers with a gateway for monitoring, model deployment in containers with orchestration in Kubernetes, and model assessment using feedback from click actions on the recommendations. We built this by using a combination of SAS analytics and open source tools -- see the SAS Global Forum paper by my colleague, Jared Dean, for the full list of ingredients.

Jared and I have been working for over a year to bring this recommendation engine to life. We discussed it at SAS Global Forum 2018, and finally near the end of 2018 it went into production on communities.sas.com. The engine scores user visits for new recommendations thousands of times per day. The engine is updated each day with new data and a new scoring model.

Now that the recommendation engine is available, Jared and I met again in front of the camera. This time we discussed how the engine is working and the effort required to get it into production. Like many analytics projects, the hardest part of the journey was that "last mile," but we (and the entire company, actually) were very motivated to bring you a live example of SAS analytics in action.

You can watch the full video on YouTube, and find more details at communities.sas.com. The video is 17 minutes long -- longer than most "explainer"-type videos. But there was a lot to unpack here, and I think you'll agree there is much to learn from the experience. Not ready to binge on our video? I'll use the rest of this article to cover some highlights.

Good recommendations begin with clean data

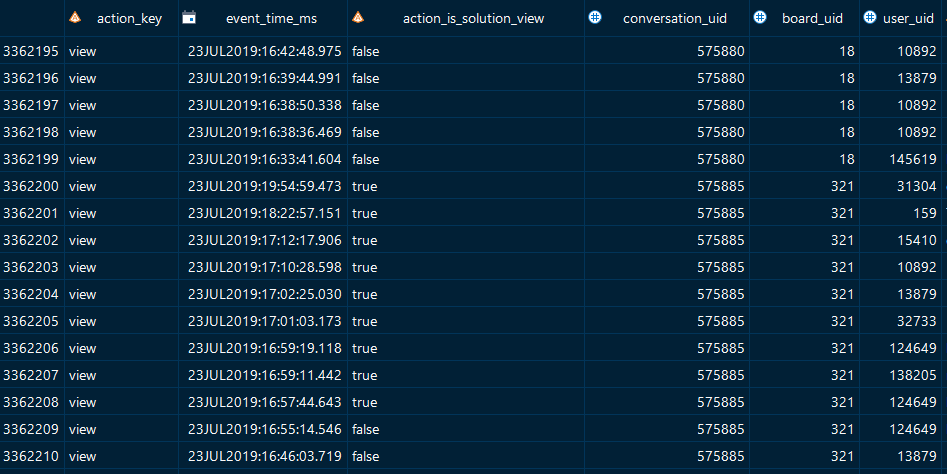

The approach of our recommendation engine is based upon your viewing behavior, especially as compared to the behavior of others in the community. With this approach, we don't need to capture much information about you personally, nor do we need information about the content you're reading. Rather, we just need the unique IDs (numbers) for each topic that is viewed, and the ID (again, a number) for the logged-in user who viewed it. One benefit of this approach is that we don't have to worry about surfacing any personal information in the recommendation API that we'll ultimately build. That makes the conversation with our IT and Legal colleagues much easier.

Our communities platform captures details about every action -- including page views -- that happens on the site. We use SAS and the community platform APIs to fetch this data every day so that we can build reports about community activity and health. We now save off a special subset of this data to feed our recommendation engine. Here's an example of the transactions we're using. It's millions of records, covering nearly 100,000 topics and nearly 150,000 active users.

Building user item recommendations with PROC FACTMAC

Starting with these records, Jared uses the SAS DATA step to prep the data for further analysis and a pass through the algorithm he selected: factorization machines. As Jared explains in the video, this algorithm shines when the data are represented in sparse matrices. That's what we have here. We have many thousands of topics and many thousands of community members, and we have a record for each "view" action of a topic by a member. Most members have not viewed most of the topics, and most of the topics have not been viewed by most members. With today's data, that results in a 13-billion-cell matrix, but with only 3.3 million view events. Traditional linear algebra methods don't scale to this type of application.
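To make the sparsity concrete, here is a minimal Python sketch. It is not the DATA step code Jared uses; the toy events and the function names `build_sparse_views` and `density` are made up for illustration. It stores only the (user, topic) pairs that actually occurred, then computes what fraction of the full matrix is filled.

```python
from collections import Counter

def build_sparse_views(view_events):
    """Collapse raw (user_id, topic_id) view events into a sparse mapping.

    Only pairs that actually occurred are stored. Every missing pair is an
    implicit zero, so memory grows with the events, not with users x topics.
    """
    return Counter(view_events)

def density(n_observed, n_cells):
    """Fraction of the full user-by-topic matrix that holds an observation."""
    return n_observed / n_cells

# Hypothetical toy data: 3 users, 4 topics, 5 view events (one pair repeated).
view_events = [(1, 10), (1, 11), (2, 10), (3, 13), (1, 10)]
views = build_sparse_views(view_events)
toy_density = density(len(views), n_cells=3 * 4)

# Figures quoted in this article: 3.3 million view events, 13 billion cells.
# Repeat views of the same topic would make the true density even lower.
article_density = density(3_300_000, n_cells=13_000_000_000)
print(f"toy: {toy_density:.1%}, article (upper bound): {article_density:.4%}")
```

With the article's numbers, at most about 0.025% of the cells hold an observation, which is why a method that works directly on the observed pairs is attractive here.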

Jared uses PROC FACTMAC (part of SAS Visual Data Mining and Machine Learning) to create an analytics store (ASTORE) for fast scoring. Using the autotuning feature, PROC FACTMAC selects the best combination of values for factors and iterations. And Jared caps the run time to 3600 seconds (1 hour) -- because we do need this to run in a predictable time window for updating each day.

    proc factmac data=mycas.weighted_factmac outmodel=mycas.factors_out;
       autotune maxtime=3600 objective=MSE
          TUNINGPARAMETERS=(nfactors(init=20) maxiter(init=200) learnstep(init=0.001));
       input user_uid conversation_uid /level=nominal;
       target rating /level=interval;
       savestate rstore=mycas.sascomm_rstore;
    run;
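For readers who want a feel for what the fitted model computes at scoring time, here is a small Python sketch of the standard second-order factorization machine prediction for two nominal inputs (a user and a topic). This is an illustration of the general technique, not the ASTORE's internals, and every parameter value and ID below is hypothetical. With one active user column and one active topic column, the pairwise term reduces to a single dot product of the two factor vectors.

```python
def fm_score(bias, user_weight, topic_weight, user_factors, topic_factors):
    """Predicted rating for one (user, topic) pair.

    Standard second-order factorization machine with two one-hot inputs:
    global bias + user weight + topic weight + <user factors, topic factors>.
    """
    interaction = sum(u * t for u, t in zip(user_factors, topic_factors))
    return bias + user_weight + topic_weight + interaction

# Hypothetical learned parameters (3 factors here to keep it readable).
bias = 1.0
user_w = {101: 0.2}
topic_w = {5001: -0.1, 5002: 0.3}
user_v = {101: [0.5, -1.0, 0.0]}
topic_v = {5001: [1.0, 1.0, 2.0], 5002: [0.4, 0.0, 0.7]}

def rank_topics(user_id):
    """Score every known topic for one user, highest predicted rating first."""
    scores = {
        topic_id: fm_score(bias, user_w[user_id], topic_w[topic_id],
                           user_v[user_id], topic_v[topic_id])
        for topic_id in topic_w
    }
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

ranking = rank_topics(101)
print(ranking)
```

The number of entries in each factor vector corresponds to the `nfactors` value that autotuning searches over in the PROC FACTMAC step.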

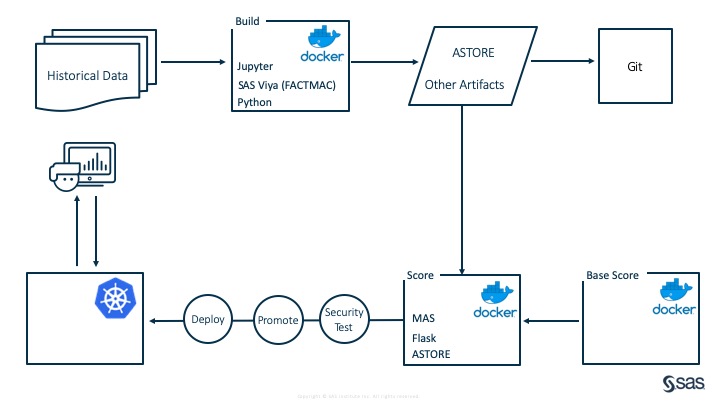

Using containers to build and containers to score

To update the model with new data each day and then deploy the scoring model as an ASTORE, Jared uses multiple SAS Viya environments. These SAS Viya environments need to "live" only for a short time -- for building the model and then for scoring data. We use Docker containers to spin these up as needed within the cloud environment hosted by SAS IT.

Jared makes the distinction between the "building container," which hosts the full stack of SAS Viya and everything that's needed to prep data and run FACTMAC, and the "scoring container," which contains just the ASTORE and enough code infrastructure (including the SAS Micro Analytics Service, or MAS) to score recommendations. This scoring container is lightweight and is actually run on multiple nodes so that our engine scales to lots of requests. And the fact that it does just the one thing -- score topics for user recommendations -- makes it an easier case for SAS IT to host as a service.

Monitoring API performance and alerting

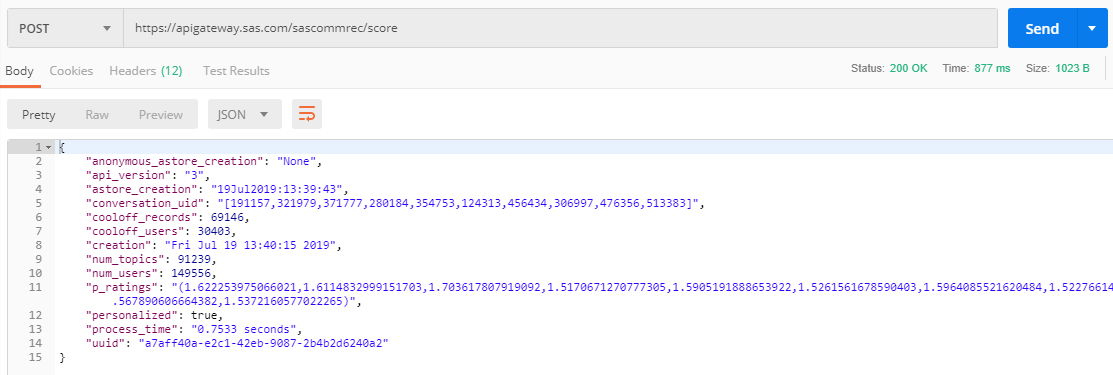

To access the scoring service, Jared built a simple API using a Python Flask app. The API accepts just one input: the user ID (a number). It returns a list of recommendations and scores. Here's my Postman snippet for testing the engine.

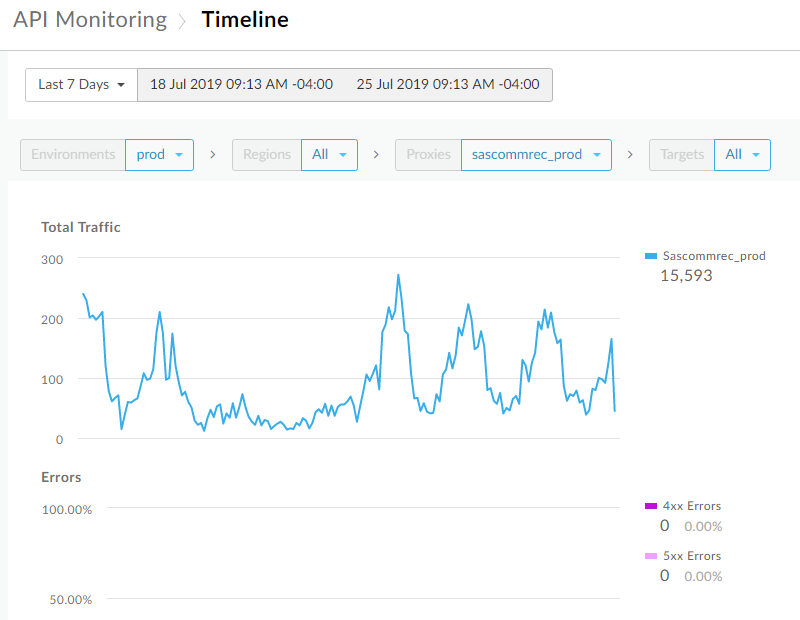
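The contract described above (one user ID in, a list of recommendations and scores out) can be sketched in a few lines of Python. This is not Jared's Flask code, and the response field names (`user_id`, `recommendations`, `topic_id`, `score`) are my assumptions, since the actual schema isn't reproduced in this post. I've kept it as a plain function so it runs without Flask installed; in a Flask app, a route handler would call something like this and return the result as JSON.

```python
import json

def build_recommendation_response(user_id, topic_scores, top_n=5):
    """Turn raw {topic_id: score} output into an ordered API response.

    Topics are sorted by descending score and truncated to top_n.
    The field names are hypothetical, chosen only for this illustration.
    """
    ranked = sorted(topic_scores.items(), key=lambda kv: kv[1], reverse=True)
    return {
        "user_id": user_id,
        "recommendations": [
            {"topic_id": topic_id, "score": score}
            for topic_id, score in ranked[:top_n]
        ],
    }

# Hypothetical scores for one user, as a scoring service might return them.
example = build_recommendation_response(
    101, {5001: 0.61, 5002: 0.87, 5003: 0.12}, top_n=2
)
print(json.dumps(example, indent=2))
```

Note that the response carries only numeric IDs and scores, which matches the point made earlier: nothing personal needs to travel through the API.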

To provision this API as a hosted service that can be called from our community web site, we use an API gateway tool called Apigee. Apigee allows us to control access with API keys, and also monitors the performance of the API. Here's a sample performance report for the past 7 days.

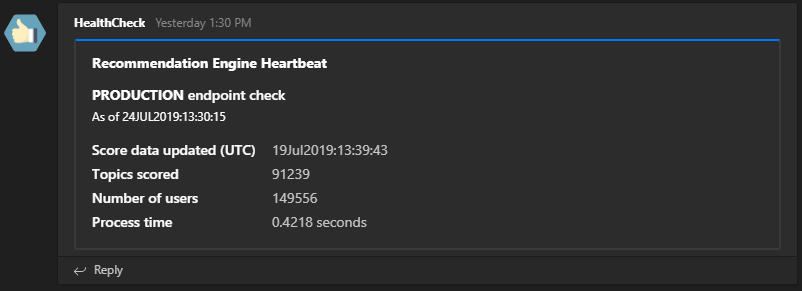

In addition to this dashboard for reporting, we have integrated proactive alerts into Microsoft Teams, the tool we use for collaboration on this project. I scheduled a SAS program that tests the recommendations API daily, and the program then posts to a Teams channel (using the Teams API) with the results. I want to share the specific steps for this Microsoft Teams integration -- that's a topic for another article. But I'll tell you this: the process is very similar to the technique I shared about publishing to a Slack channel with SAS.
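Until then, here is the general shape of such a check as a hedged Python sketch. It is not the SAS program I scheduled. It assumes the Teams channel is reached through an incoming webhook that accepts a JSON body with a `text` field; the webhook URL, the 2-second threshold, and the function names are all hypothetical.

```python
import json
import urllib.request

def summarize_check(status_code, elapsed_seconds, n_recommendations, max_seconds=2.0):
    """Build a one-line health message from the result of a test API call."""
    ok = (
        status_code == 200
        and n_recommendations > 0
        and elapsed_seconds <= max_seconds
    )
    verdict = "OK" if ok else "PROBLEM"
    return (
        f"Recommendation API check: {verdict} "
        f"(HTTP {status_code}, {n_recommendations} recommendations, "
        f"{elapsed_seconds:.2f}s)"
    )

def build_teams_payload(message):
    """Encode the message as the JSON body an incoming webhook would receive."""
    return json.dumps({"text": message}).encode("utf-8")

def post_to_teams(webhook_url, message):
    """POST the message to a (hypothetical) Teams incoming webhook URL."""
    request = urllib.request.Request(
        webhook_url,
        data=build_teams_payload(message),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status

message = summarize_check(200, 0.35, 5)
print(message)
```

Keeping the message builder separate from the network call makes the check easy to test without touching a real channel.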

Are visitors selecting recommended content?

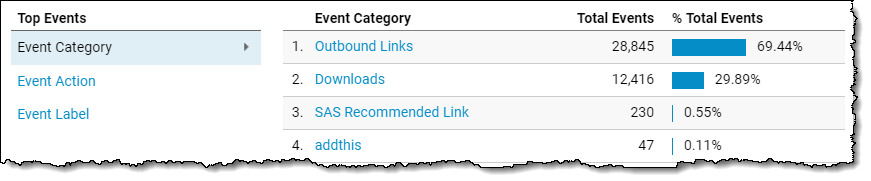

To make it easier to track recommendation clicks, we added special parameters to the recommended topics URLs to capture the clicks as Google Analytics "events." Here's what that data looks like within the Google Analytics web reporting tool:

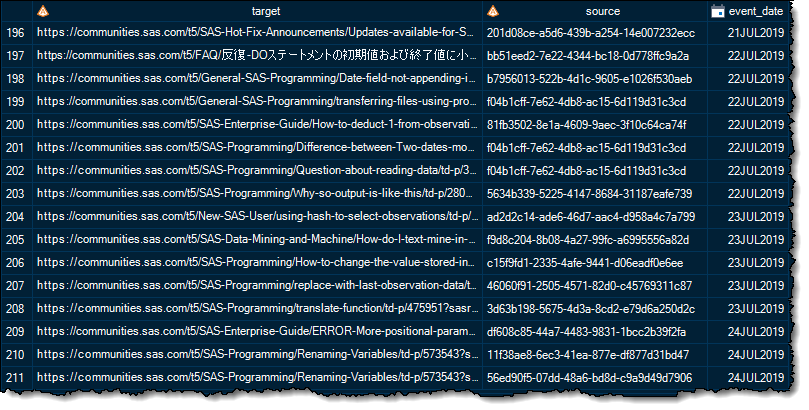
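Tagging a recommended topic's URL is a small string operation. Here is a minimal Python sketch of the idea using only the standard library. The parameter names `rec_src` and `rec_id` and the example URL are hypothetical; they are not the parameters we actually use on the site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

def add_tracking_params(url, params):
    """Append tracking parameters to a URL without disturbing the rest.

    Existing query parameters and any #fragment are preserved, and the new
    parameters are added after the existing ones.
    """
    parts = urlsplit(url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.extend(params.items())
    return urlunsplit(parts._replace(query=urlencode(query)))

# Hypothetical topic URL and parameter names, for illustration only.
tagged = add_tracking_params(
    "https://example.com/topic/12345",
    {"rec_src": "sas_recommended", "rec_id": "abc123"},
)
print(tagged)
```

Because the recommendation's ID rides along in the URL, the click can later be matched back to the score that produced it.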

You might know that I use SAS with the Google Analytics API to collect web metrics. I've added a new use case for that trick, so now I collect data about the "SAS Recommended Click" events. Each click event contains the unique ID of the recommendation score that the engine generated. Here's what that raw data looks like when I collect it with SAS:

With the data in SAS, we can use it to monitor the health and success of the model in SAS Model Manager, and eventually to improve the algorithm.
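One simple success measure is a click-through rate: of the recommendations the engine served, what share were clicked? The Python sketch below shows the arithmetic. It assumes we also keep a log of the recommendation IDs that were served, which this post doesn't describe, and the sample IDs are invented for illustration. It is not how SAS Model Manager computes its metrics.

```python
def click_through_rate(served_ids, clicked_ids):
    """Share of served recommendations that received at least one click.

    Clicks whose ID was never served (stale or malformed events) are ignored.
    Returns 0.0 when nothing was served, to avoid dividing by zero.
    """
    served = set(served_ids)
    if not served:
        return 0.0
    clicked = served & set(clicked_ids)
    return len(clicked) / len(served)

# Invented sample IDs: four recommendations served, two of them clicked,
# plus one click event that matches nothing we served.
served_ids = ["r1", "r2", "r3", "r4"]
clicked_ids = ["r2", "r4", "unknown"]
rate = click_through_rate(served_ids, clicked_ids)
print(f"click-through rate: {rate:.0%}")
```

Tracked day by day, a falling rate would be one signal that the model needs attention.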

Challenges and rewards

This project has been exciting from Day 1. When Jared and I saw the potential for using our own SAS Viya products to improve visitor experience on our communities, we committed ourselves to see it through. Like many analytics applications, this project required buy-in and cooperation from other stakeholders, especially SAS IT. Our friends in IT helped with the API gateway and it's their cloud infrastructure that hosts and orchestrates the containers for the production models. Putting models into production is often referred to as "the last mile" of an analytics project, and it can represent a difficult stretch. It helps when you have the proper tools to manage the scale and the risks.

We've all learned a lot in the process. We learned how to ask for services from IT and to present our case, with both benefits and risks. And we learned to mitigate those risks by applying security measures to our API, and by limiting the execution scope and data of the API container (which lives outside of our firewall).

Thanks to extensive preparation and planning, the engine has been running almost flawlessly for 8 months. You can experience it yourself by visiting SAS Support Communities and logging in with your SAS Profile. The recommendations that you see will be personal to you (whether they are good recommendations...that's another question). We have plans to expand the engine's use to anonymous visitors as well, which will significantly increase the traffic to our little API. Stay tuned!

