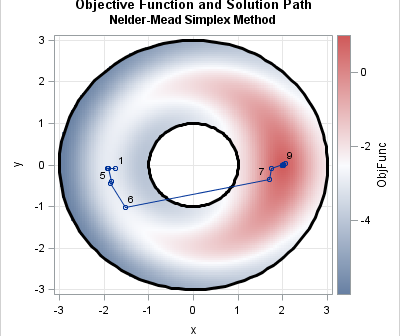

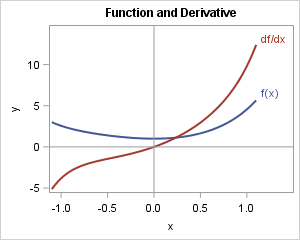

Many optimization problems in statistics and machine learning involve continuous parameters. For example, maximum likelihood estimation involves optimizing a log-likelihood function over a continuous domain, possibly with constraints. Recently, however, I had to solve an optimization problem for which the solution was a vector of binary (0/1) variables. To solve the