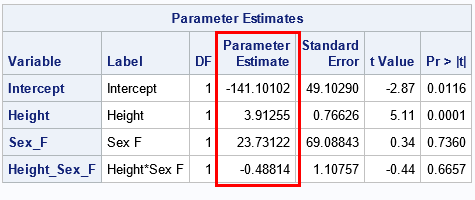

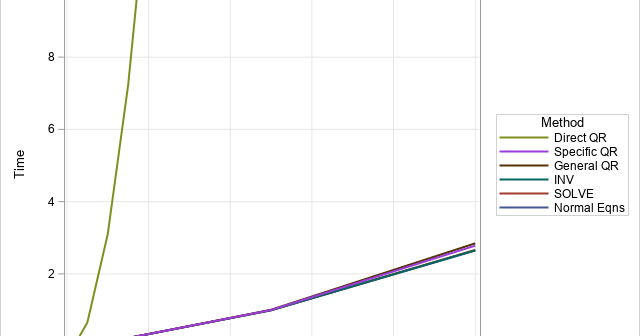

In a previous article, I discussed various ways to solve a least-square linear regression model. I discussed the SWEEP operator (used by many SAS regression routines), the LU-based methods (SOLVE and INV in SAS/IML), and the QR decomposition (CALL QR in SAS/IML). Each method computes the estimates for the regression