In statistical programming, we often assume that the data are stored in a matrix or a data set and can be read at will. For example, if you want to compute a sample mean or standard deviation, you simply pass a vector to a built-in function, and the software spits out the statistics. The function that computes the mean passes through the data once. The textbook formula for the standard deviation requires two passes through the data.

Modern computers are so fast, and RAM is so cheap and plentiful, that today's data analysts probably don't think twice about using multi-pass algorithms to compute statistics. But it didn't used to be that way!

This year SAS is turning 50 years old. In the 1970s, RAM was expensive. It was also small: A mainframe computer might have 64KB or less! Furthermore, if the data was on a physical media such as a tape, rewinding the tape to read the data again was a time-consuming operation. Consequently, many research papers in the 1960s and '70s focused on how to compute statistics with one pass through the data. One such paper was by Welford (1962, Technometrics), who showed a simple method for calculating corrected sums of squares and products. This one-pass method could be used to compute the mean, variance, and other central moments without using any arrays or extra memory.

An old proverb states, "everything old is new again," so perhaps it is not surprising that Welford's one-pass formula for the mean and standard deviation has applications in the modern era. One application is streaming data. If you want to compute statistics for a utility meter or a weather station that monitors conditions continuously, it would be inefficient to store all the data. It is better to compute statistics on-the-fly, if you can, and maybe store the hourly mean value.

Another modern application is for Monte Carlo simulations. Often, we guess how many iterations we need, run the simulation, and hope that the resulting estimate is accurate enough to use. Welford's method provides a way to compute the standard error while the simulation runs, which means that we can program logic to exit the simulation when the Monte Carlo estimate satisfies a predetermined level of accuracy.

This article discusses how to use Welford's one-pass formula to compute moments for streaming data. A subsequent article shows how to use this technique to monitor and stop a Monte Carlo simulation.

The traditional "offline" mean and variance

In the old days, algorithms were sometimes described as "online" or "offline." Since these terms are before the creation of the internet, they sound a little strange to the modern programmer. In computer science, an "offline" algorithm requires that the complete data are available before the algorithm is run. In contrast, an "online" algorithm processes its input serially, one datum at a time, and doesn't store the raw data. I prefer to call this type of algorithm a one-pass algorithm that requires little or no auxiliary storage.

The familiar "textbook" method for computing means and variances is an offline algorithm. To compute a mean, you sum the full set of N numbers then divide by N. For the variance, you pass through the data again, this time computing the sum of squared deviances (SSE) from the mean. You divide the SSE by N-1 to obtain the variance. If desired, take the square root to obtain the standard deviation. For example, the following SAS IML program shows the traditional offline algorithm:

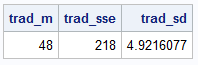

proc iml; y = {48, 51, 46, 37, 45, 50, 46, 51, 51, 55}; /* A traditional or "offline" calculation of the mean and standard deviation uses two passes through the data: One for the mean, and a second pass for the sum of squared deviations, which gives the variance or standard deviation. */ trad_m = mean(y); trad_sse = ssq(y - trad_m); trad_sd = std(y); print trad_m trad_sse trad_sd; /* sample mean = 48; StdDev ~ 5 */ |

This two-pass approach is perfectly fine for everyday data analysis.

It requires that the data vector y is known and fits in memory.

But it is possible to compute these statistics without using extra memory.

The next section shows how to compute the

mean and standard deviation by using a one-pass algorithm.

The Welford update formula for "online" data

Welford (1962) published a one-pass algorithm for computing the mean and variance.

This method was popularized when Knuth featured it in his seminal book,

The Art of Computer Programming, Volume II: Seminumerical Algorithms (1981).

The Welford method maintains two running totals: one for the mean, and another for the sum of squared differences.

For each new datum, the algorithm updates these running totals.

The following IML program implements Welford's algorithm by explicitly looping over the elements of the y vector:

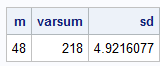

/* The Welford update formula: First, initialize the Welford variables for the one-pass mean and std dev */ m = 0; varsum = 0; /* Update the running totals for each new datum y[k]. */ do k = 1 to nrow(y); varsum = varsum + (k-1) * (y[k] - m)**2 / k; /* update the sum of squared differences */ if k > 1 then sd = sqrt(varsum/(k-1)); m = m + (y[k] - m) / k; /* update the mean */ end; print m varsum sd; |

In the program, the value of m on the right side of the mean-update formula

is the sample mean after seeing k-1 observations, so denote it by mk-1.

The formula overwrites m with the mean of k observations, so denote the left side as mk.

It's not hard to see why Welford's update formula is true.

You can rewrite the mean of k observations by

writing the sum of k observations as the k_th observation plus the sum of the earlier k-1 observations, as follows:

\(

\begin{aligned}

m_k &= \frac{1}{k} \sum_{i=1}^{k} x_i \\

&= \frac{1}{k} \left( x_k + \sum_{i=1}^{k-1} x_i \right) \\

&= \frac{1}{k} \left( x_k + \frac{k-1}{k-1} \sum_{i=1}^{k-1} x_i \right) \\

&= \frac{1}{k} \left( x_k + (k-1) m_{k-1} \right) \\

&= m_{k-1} + ( x_k - m_{k-1} ) / k

\end{aligned}

\)

The derivation of the formula for the variance is only slightly more complicated. You can find the derivation online.

Other useful one-pass methods

Since SAS is celebrating its 50th birthday, I should mention the importance of one-pass methods in early SAS procedures. In the 1970s, SAS was able to leverage one-pass methods to compute basic statistics, regression analyses, and multivariate analyses on data sets that had a huge number of observations. The regression and multivariate analyses were possible because SAS computes the uncorrected sum of squares and crossproducts (USSCP or X`X) matrix in one pass through the data.. From the USSCP matrix, you can use the SWEEP operator to obtain the centered SSCP, which then yields the covariance and correlation matrices. SAS used these and other one-pass methods to serve customers who needed to analyze Big Data quickly.

Summary

This article discusses the value of Welford's method for the mean and variance. Welford's method is an example of a one-pass formula. Today, memory is cheap and plentiful, so one-pass formulas are not as necessary as they were in the 1970s when SAS was founded. However, they are still useful. Modern sensors generate hundreds of kilobytes of data every minute, so it makes sense to compute statistics "on the fly" rather than storing all the raw data. In addition, the next article shows how you can use Welford's formulas in Monte Carlo simulations to monitor convergence.