This article compares several ways to find the elements that are common to multiple sets. I test which method is the fastest in the SAS/IML language. However, all of the algorithms are intrinsically fast, which raises an important question: when is it worth the time and effort to optimize an algorithm?

The idea for this topic came from reading a blog post by 'mikefc' at the "Cool but Useless" blog about the relative performance of various ways to intersect vectors in R. Mikefc used vectors that contained between 10,000 and 100,000 elements and whose intersection was only a few dozen elements. In this article, I increase the sizes of the vectors by an order of magnitude and time the performance of the intersection function in SAS/IML. I also ensure that the sets share a large set of common elements so that the intersection is sizeable.

The intersection of large sets

In general, finding the intersection between sets is a fast operation. In languages such as R, MATLAB, and SAS/IML, you can store the sets in vectors and use built-in functions to form the intersection. Because the intersection of two sets is never larger than either of the original sets, computing the "intersection of intersections" is usually faster than computing the intersection of the original (larger) sets.
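
As a small illustration of that point, the following sketch intersects three tiny vectors. The names s1, s2, and s3 and their values are made up for this example. It shows that each intermediate intersection is no larger than its inputs and that chaining pairwise intersections gives the same answer as a single call:

```sas
proc iml;
/* three small example sets (hypothetical data, for illustration only) */
s1 = {1 2 3 4 5 6};
s2 = {2 4 6 8 10};
s3 = {4 5 6 7};

w12    = xsect(s1, s2);       /* {2 4 6}: no larger than s1 or s2 */
w123   = xsect(w12, s3);      /* {4 6}: intersection of the intersection */
direct = xsect(s1, s2, s3);   /* {4 6}: same result from a single call */
print w12, w123, direct;
quit;
```

The second call works on the three-element vector w12 rather than on the original six-element set, which is the reason that later intersections tend to be cheaper.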

The following SAS/IML program creates eight SAS/IML vectors that have between 200,000 and 550,000 elements. The elements in the vectors are positive integers, although they could also be character strings or non-integers. The vectors contain a large subset of common elements so that the intersection is sizeable. The vectors A, B, ..., H are created as follows:

    proc iml;
    call randseed(123);
    NIntersect = 5e4;
    common = sample(1:NIntersect, NIntersect, "replace");  /* elements common to all sets */
    source = 1:1e6;    /* elements are positive integers no greater than 1 million */
    BaseN = 5e5;       /* approx magnitude of vectors (sets) */
    A = sample(source, BaseN, "replace") || common;        /* include common elements */
    B = sample(source, 0.9*BaseN, "replace") || common;
    C = sample(source, 0.8*BaseN, "replace") || common;
    D = sample(source, 0.7*BaseN, "replace") || common;
    E = sample(source, 0.6*BaseN, "replace") || common;
    F = sample(source, 0.5*BaseN, "replace") || common;
    G = sample(source, 0.4*BaseN, "replace") || common;
    H = sample(source, 0.3*BaseN, "replace") || common;
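
Notice that the `common` vector is drawn with replacement, so it contains duplicate values. What matters for an intersection is the number of distinct values. As a rough estimate (not an exact count), if you draw $N$ values with replacement from $N$ candidates, the expected number of distinct values is

$$
E[\text{distinct}] = N\left(1 - \left(1 - \tfrac{1}{N}\right)^{N}\right) \approx N\left(1 - e^{-1}\right) \approx 0.632\,N .
$$

For $N = 50{,}000$, this is approximately 31,606. A small number of additional values can land in all eight random samples by chance, so the size of the actual intersection can be slightly larger than the number of distinct values in `common`.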

The intersection of these vectors contains about 31,600 elements. You can implement the following methods that find the intersection of these vectors:

- The XSECT function in SAS/IML accepts up to 15 arguments. You can therefore make a single call to find the intersection.

- If you have dozens or hundreds of sets to intersect, you can loop over the sets and call the XSECT function multiple times. (Recall that the VALUE function enables you to loop over the names of the sets and access the vectors by name.) For example, you can let W1 be the intersection of the first two sets, let W2 be the intersection of W1 with the third set, and so forth. Because the size of the intersection is typically smaller than the size of the sets, later intersections require less time to compute than earlier intersections. (Although I only implement pairwise intersections, you could conceivably intersect more than two sets at a time.)

- As 'mikefc' mentions, it is quicker to intersect small sets than to intersect large sets. Thus you can preprocess the names of the sets and sort them by size so that the smallest sets are intersected first. This heuristic might make a difference when the set sizes vary greatly.

The following SAS/IML statements implement these three methods and measure the time that each one requires:

    /* 1. one call to the built-in method */
    t0 = time();
    w = xsect(a,b,c,d,e,f,g,h);
    tBuiltin = time() - t0;

    /* 2. loop over variable names and form pairwise intersections */
    varName = "a":"h";
    t0 = time();
    w = value(varName[1]);
    do i = 2 to ncol(varName);
       w = xsect(w, value(varName[i]));   /* compute pairwise intersection */
    end;
    tPairwise = time() - t0;

    /* 3. Sort by size of sets, then loop */
    varName = "a":"h";
    t0 = time();
    len = j(ncol(varName), 1);
    do i = 1 to ncol(varName);
       len[i] = ncol(value(varName[i]));  /* number of elements in each set */
    end;
    call sortndx(idx, len);               /* sort smallest to largest */
    sortName = varName[,idx];
    w = value(sortName[1]);
    do i = 2 to ncol(sortName);
       w = xsect(w, value(sortName[i]));  /* compute pairwise intersection */
    end;
    tSort = time() - t0;

    print tBuiltin tPairwise tSort;

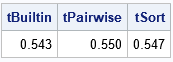

For this example data, each method takes about 0.5 seconds to find the intersection of eight sets that contain a total of 3,000,000 elements. If you rerun the analysis, the times will vary by a few hundredths of a second, so the times are essentially equal. ('mikefc' also discusses a few other methods, including a "tally method." The tally method is fast, but it relies on the elements being positive integers.)
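
For readers who are curious about the tally idea, here is a minimal sketch of how it might look in SAS/IML. This is my own illustration, not 'mikefc's code, and I did not include it in the timings above. The names s1, s2, s3, maxVal, and tally are hypothetical, and the sketch assumes that every element is a positive integer no greater than maxVal:

```sas
proc iml;
/* three small example sets of positive integers (hypothetical data) */
s1 = {1 2 3 4 5 6 6};
s2 = {2 4 6 8 10};
s3 = {4 5 6 7};
varName = {"s1" "s2" "s3"};

maxVal = 10;                        /* assumed upper bound on the elements */
tally = j(1, maxVal, 0);            /* tally[k] = number of sets that contain k */
do i = 1 to ncol(varName);
   u = unique(value(varName[i]));   /* remove duplicates so each set counts once */
   tally[u] = tally[u] + 1;         /* use the values themselves as indices */
end;
w = loc(tally = ncol(varName));     /* values that appear in every set: {4 6} */
print w;
quit;
```

Because the values are used directly as subscripts into the tally vector, the approach breaks down for character strings, non-integers, or integers whose range is too large to allocate.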

Whether to optimize or not

There is a quote that I like: "In theory, there is no difference between theory and practice. But, in practice, there is." (Published by Walter J. Savitch, who claims to have overheard it at a computer science conference.) For the intersection problem, you can argue theoretically that pre-sorting by length is an inexpensive way to potentially obtain a boost in performance. However, in practice (for this example data), pre-sorting improves the performance by less than 1%. Furthermore, the absolute times for this operation are short, so saving 1% of 0.5 seconds might not be worth the effort.

Even if you never compute the intersection of sets in SAS, there are two take-aways from this exercise:

- When you measure the performance of algorithms, use example data that is similar to the real data that you expect to encounter. The performance of an algorithm for this problem depends on the size of the sets, the number of sets, and the contents of the sets. The results that I show here are based on my choices for these parameters. Different parameters might yield different results.

- Always consider the total time for an algorithm before you start to optimize it. If your analysis takes 30 seconds, there is no need to optimize a step in the analysis that takes 0.5 seconds. No matter how well you optimize that step, it is not going to substantially shrink the total run time of your analysis.
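
To put rough numbers on the second point, take the illustrative figures above: a total run time of $T = 30$ seconds and a step that takes $t = 0.5$ seconds. Even in the unattainable best case where the step is reduced to zero time, the savings are bounded by

$$
\frac{t}{T} = \frac{0.5}{30} \approx 1.7\%,
\qquad
\text{speedup} \le \frac{T}{T - t} = \frac{30}{29.5} \approx 1.017 .
$$

A realistic optimization, such as the less-than-1% improvement from pre-sorting, would save about 0.005 seconds out of 30.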