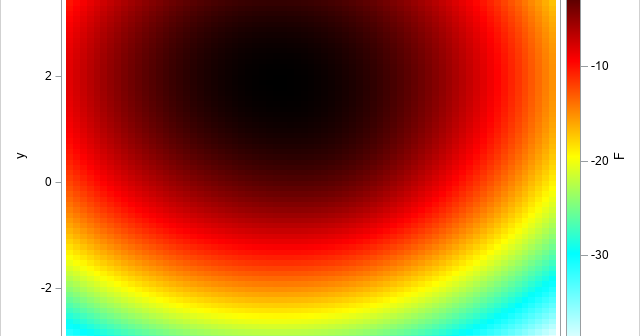

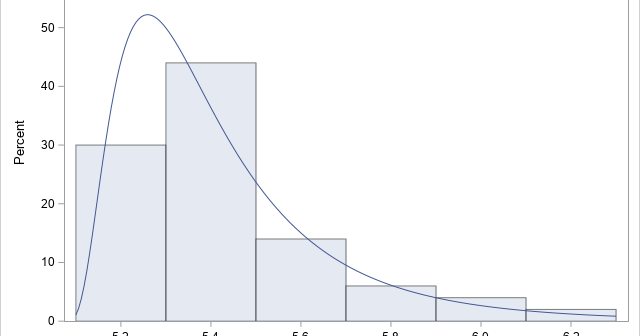

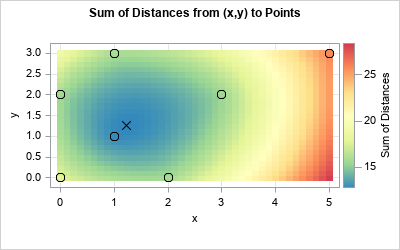

Given a set of N points in k-dimensional space, can you find the location that minimizes the sum of the distances to the points? The location that minimizes the distances is called the geometric median of the points. For univariate data, the "points" are merely a set of numbers \(\{p_1,