In my previous post, I shared how I’ve been working on a fascinating project with one of the world's largest pharmaceutical companies. The company is applying SAS Viya computer vision capabilities to an advanced medical device to help identify potential quality issues on the production line. By visually inspecting 100% of these devices, the company will meet its commitment to deliver the highest quality and care to the hundreds of millions of patients who rely on lifesaving medication every day.

In this post, I’d like to expand on how a team of experts from the production line and SAS data scientists identified key areas of interest on the device. The team defined a "golden image" to compare with every device image to see how closely they matched. We also defined a larger set of training images, made up of 90% good images and 10% defective images. This "oversampling" of defective images is required because the actual share of devices affected by an issue is extremely low, at less than 0.05% of production. However, with hundreds of millions of devices manufactured each year, even such a small percentage means hundreds of thousands of defective devices. This, in turn, can lead to the rejection of millions more devices to maintain the quality of those delivered to the patient.
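To make the oversampling step concrete, here is a minimal Python sketch. It assumes a hypothetical pool of labelled image file names; the helper `build_training_set`, the file names and the pool sizes are all illustrative assumptions rather than the project's actual pipeline. Only the 90/10 split comes from the description above.

```python
import random

def build_training_set(good_ids, defect_ids, total, defect_share=0.10, seed=0):
    """Sample a training set with a fixed share of defective images.

    Hypothetical helper: it samples without replacement, so each pool
    must contain enough images for the requested split.
    """
    rng = random.Random(seed)
    n_defect = round(total * defect_share)
    n_good = total - n_defect
    if n_defect > len(defect_ids) or n_good > len(good_ids):
        raise ValueError("not enough images in one of the pools")
    sample = [(i, "defect") for i in rng.sample(defect_ids, n_defect)]
    sample += [(i, "good") for i in rng.sample(good_ids, n_good)]
    rng.shuffle(sample)
    return sample

# Illustrative pools: defects are rare in production, so the defect pool is small.
good_pool = [f"good_{i:05d}.png" for i in range(20000)]
defect_pool = [f"defect_{i:03d}.png" for i in range(150)]

training = build_training_set(good_pool, defect_pool, total=1000)
n_defects = sum(1 for _, label in training if label == "defect")
print(n_defects, len(training) - n_defects)  # 100 900
```

The point of fixing the share is that a model trained on the natural production mix would see almost no defective examples at all.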

After identifying the golden image and training data, we applied a wide range of data analysis techniques to the images to find the best approach to isolating the suspect devices. These included image processing, comparison and statistical analysis, artificial intelligence and deep learning.

Image processing and comparison and statistical analysis techniques

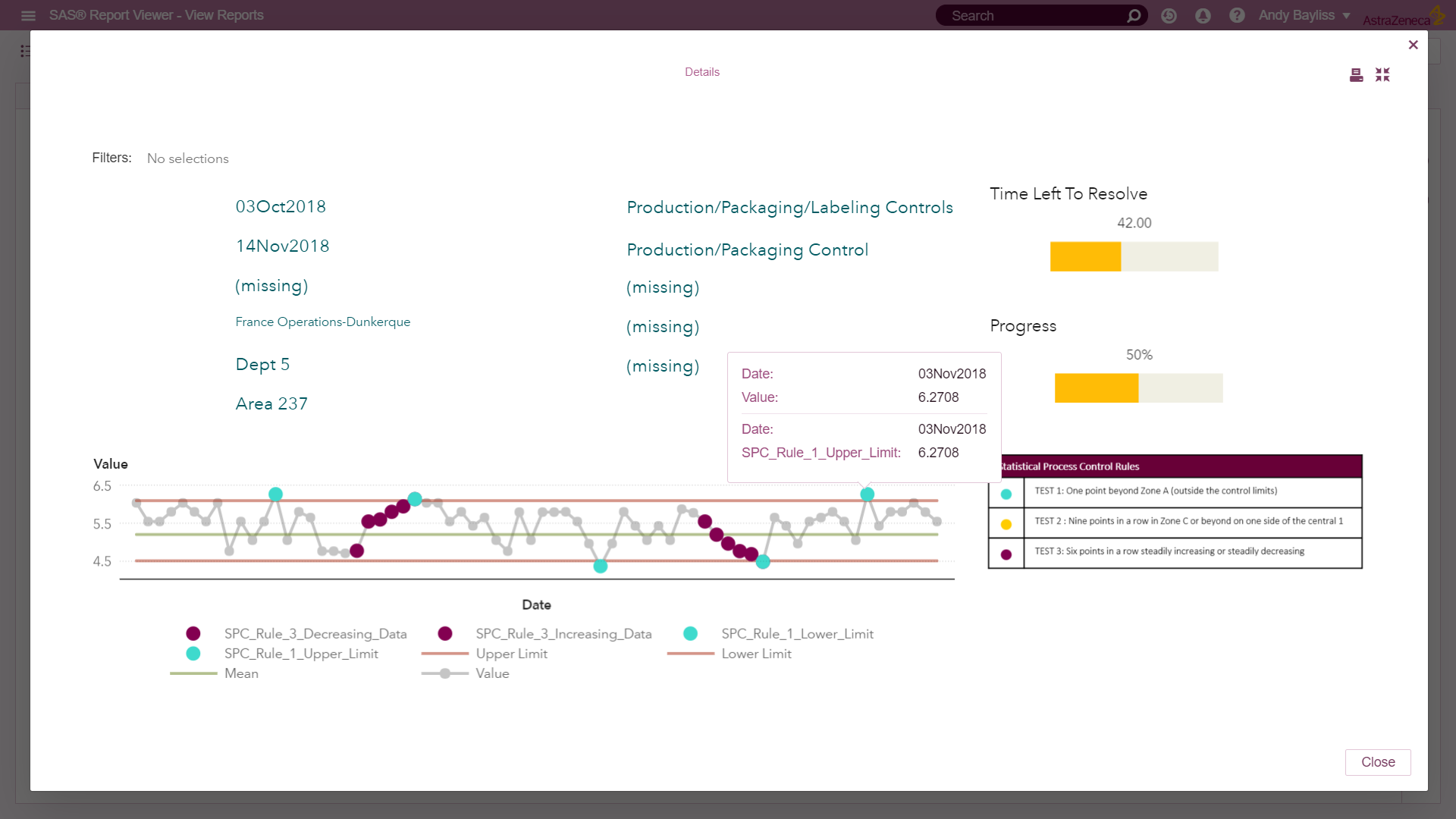

This first set of techniques relies on traditional statistical process control (SPC) to assess deviation from the norm based on carefully defined, statistically based performance limits. These techniques highlight where the gathered information deviates from, or has the potential to deviate from, these norms. By processing the images using SAS computer vision techniques, we extract a range of numerical measures that characterise each image, such as overall brightness and the number of edges detected, along with brightness and RGB colour intensity for every pixel. We can then process these measures in the same way manufacturing experts use measures such as length, weight or concentration to identify potential issues for investigation.
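As a rough illustration of this idea, the Python sketch below derives two simple measures from a greyscale image and checks new measurements against control limits. It is not the SAS implementation: the function names, the edge threshold and the sample values are assumptions, and the limits are a simplified mean plus or minus three standard deviations rather than a full control chart.

```python
from statistics import mean, stdev

def mean_brightness(image):
    """Average pixel value of a greyscale image given as rows of 0-255 ints."""
    return mean(p for row in image for p in row)

def edge_count(image, threshold=50):
    """Count horizontally adjacent pixel pairs that differ by more than threshold."""
    return sum(
        1
        for row in image
        for left, right in zip(row, row[1:])
        if abs(left - right) > threshold
    )

def control_limits(baseline, k=3.0):
    """Return (lower, centre, upper) as mean +/- k sample standard deviations."""
    centre = mean(baseline)
    spread = stdev(baseline)
    return centre - k * spread, centre, centre + k * spread

def out_of_control(values, limits):
    """Indices of measurements that fall outside the control limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(values) if v < lower or v > upper]

# Illustrative brightness measures taken from known-good devices.
baseline_brightness = [120.1, 119.8, 120.4, 120.0, 119.7, 120.3, 119.9, 120.2]
limits = control_limits(baseline_brightness)

# New measurements: the third image is much darker than the baseline.
new_brightness = [120.0, 119.9, 112.5, 120.2]
print(out_of_control(new_brightness, limits))  # [2]
```

Any numerical measure extracted from the image, such as the output of `edge_count`, can be charted against its own limits in the same way.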

The golden image

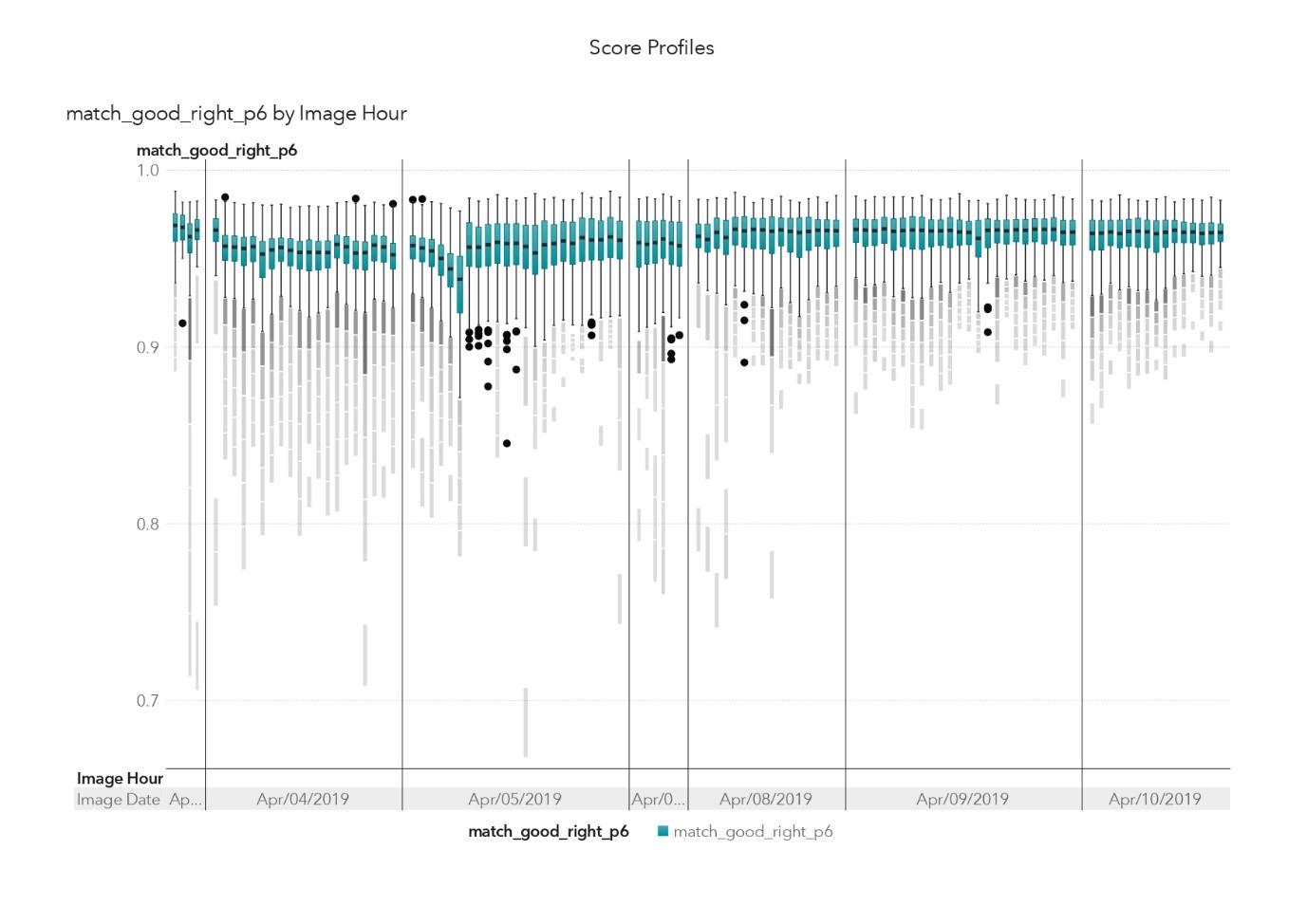

We can also extend these techniques by using an image matching process. Each and every image is compared to the golden image identified by the experts to check differences for the entire image or for a focused area at the subpixel level. This produces a "match score" that we can then use to assess how well the images match. For example, a score of 1.000 would be a perfect match, while a score of 0.950 might indicate a minor difference. A score of 0.900 might indicate a significant difference, and a score of 0.000 would indicate a total mismatch, possibly caused by a missing device on the production line.
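One common way to compute a score of this kind is zero-mean normalised cross-correlation. The Python sketch below uses it as a stand-in; the post does not say which matching algorithm SAS uses, so the function `match_score`, the clipping to the range 0 to 1 and the tiny sample images are all assumptions. It also compares whole pixels only and does not attempt subpixel alignment.

```python
from math import sqrt

def match_score(image, golden):
    """Zero-mean normalised cross-correlation, clipped to the range 0..1."""
    a = [p for row in image for p in row]
    b = [p for row in golden for p in row]
    if len(a) != len(b):
        raise ValueError("images must have the same dimensions")
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    da = [x - mean_a for x in a]
    db = [x - mean_b for x in b]
    denom = sqrt(sum(x * x for x in da) * sum(x * x for x in db))
    if denom == 0:
        # A flat image (for example an empty frame) has no structure to match.
        return 0.0
    ncc = sum(x * y for x, y in zip(da, db)) / denom
    return min(1.0, max(0.0, ncc))

# Tiny illustrative greyscale images (rows of 0-255 pixel values).
golden = [[10, 200, 10], [200, 10, 200], [10, 200, 10]]
scratched = [[10, 200, 10], [200, 120, 200], [10, 200, 10]]
empty_frame = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]

for name, img in [("golden", golden), ("scratched", scratched), ("empty", empty_frame)]:
    print(name, round(match_score(img, golden), 3))
```

Note that this particular score ignores a uniform change in brightness, so it complements rather than replaces the brightness measures used in the statistical process control step.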

Statistical process control analysis with SAS.


Image analysis – blueberry muffin or Chihuahua?

The second, more advanced, set of techniques makes use of SAS artificial intelligence and deep learning capabilities. Deep learning is a type of machine learning that trains a computer to perform humanlike tasks and is very effective at identifying images or making predictions. Instead of organising data to run through predefined equations, deep learning relies on the training data to teach the computer to recognise patterns. Rather than using just one golden image to develop a match score, we used multiple examples of good and bad images.

Image analysis projects typically require hundreds of thousands of images to train a model to recognise the object. There’s a great example of a deep learning model that was trained on 100,000 images to recognise dogs, yet still can’t tell a blueberry muffin from a Chihuahua.

In our case, however, we have images from a high-resolution, fixed camera that produces extremely consistent pictures of the devices. Thus, we only needed a relatively small number of "good" and "bad" images. I’m not going to say how many, as more training images are always better. Suffice it to say that we used only a fraction of the available good images to train the model, and it still achieved an outstanding level of accuracy.

Champion and challenger

We used this approach to develop a range of different neural network architectures. The architecture is the internal structure of how the individual "nodes" in the network are linked. This allowed us to choose a "champion" and "challenger" model, which is a standard approach when developing any sort of predictive model. These models are then used to predict how likely it is that the assessed image is good or bad. It’s worth noting that these deep learning models were developed using a cloud-based server with over 4,000 GPUs, which delivered fast results. This enabled us to refine the models very quickly.
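The champion and challenger selection can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the three "models" are stand-in threshold rules on a single score rather than neural networks, the validation data is invented, and ranking by plain accuracy is a simplification of whatever criteria the team actually used.

```python
def accuracy(predict, examples):
    """Share of validation examples for which the model predicts the right label."""
    correct = sum(1 for features, label in examples if predict(features) == label)
    return correct / len(examples)

def pick_champion_and_challenger(candidates, validation):
    """Rank candidate models by validation accuracy and return the top two names."""
    ranked = sorted(
        candidates,
        key=lambda name: accuracy(candidates[name], validation),
        reverse=True,
    )
    return ranked[0], ranked[1]

# Invented validation set: (score, true label) pairs.
validation = [
    (0.99, "good"), (0.97, "good"), (0.96, "good"), (0.98, "good"),
    (0.94, "bad"), (0.91, "bad"), (0.955, "bad"), (0.90, "bad"),
]

# Stand-in candidates; in practice each would be a trained network architecture.
candidates = {
    "model_a": lambda s: "good" if s >= 0.95 else "bad",
    "model_b": lambda s: "good" if s >= 0.96 else "bad",
    "model_c": lambda s: "good" if s >= 0.92 else "bad",
}

champion, challenger = pick_champion_and_challenger(candidates, validation)
print(champion, challenger)  # model_b model_a
```

With defects as rare as they are here, a real comparison would also look at measures such as recall on defective devices, since accuracy alone can flatter a model that rarely flags anything.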

We then combined these two sets of techniques to rank the device images for assessment by the operator, using a server located next to the manufacturing production line. This system assessed every manufactured device, allowing the operators to focus their expert review on those devices most likely to be defective. They could then reject a much smaller number of devices, if and when they identified a potential issue.
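The sketch below shows one possible way to blend the two signals into a single review queue. The equal weighting, the field names and the sample devices are assumptions made for illustration; the post does not describe how the scores were actually combined.

```python
def review_priority(match_score, defect_probability, weight=0.5):
    """Blend both signals into one 0..1 priority (higher means review sooner)."""
    return weight * (1.0 - match_score) + (1.0 - weight) * defect_probability

def rank_for_review(devices, top_n=None):
    """Sort devices so the most suspect ones reach the operator first."""
    ranked = sorted(
        devices,
        key=lambda d: review_priority(d["match_score"], d["defect_probability"]),
        reverse=True,
    )
    return ranked if top_n is None else ranked[:top_n]

# Invented sample: a match score against the golden image plus a model probability.
devices = [
    {"id": "A001", "match_score": 0.998, "defect_probability": 0.01},
    {"id": "A002", "match_score": 0.905, "defect_probability": 0.80},
    {"id": "A003", "match_score": 0.951, "defect_probability": 0.30},
    {"id": "A004", "match_score": 0.000, "defect_probability": 0.95},
]

print([d["id"] for d in rank_for_review(devices)])
# ['A004', 'A002', 'A003', 'A001']
```

Passing `top_n` limits the queue to the handful of devices an operator can realistically inspect in a given window.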

This has been a really fascinating project to work on. And I’m really looking forward to repeating it with other organisations that want to introduce advanced analytics and machine learning to improve their manufacturing processes as part of their Manufacturing 4.0 programs.

If that’s you, then please contact me or reach out to your local SAS contact, who will be delighted to help.

